"So what do you use it for?" is one of my favorite questions I ask when people tell me they use ChatGPT. Sometimes I get great answers like "to write SQL code" or "to check if an insurance claim is covered by the policy".

And sometimes I get really bad answers that make my skin crawl, like "to write SQL code" or "to see if an insurance claim is covered by the policy".

The difference is WHO is doing the task. What's a perfect ChatGPT use case for some is a horrible use case for others.

Wondering how to find the use case that works for you?

Let's dive in!

Knowing the limits of AI is tough

As I mentioned in my recent post on centaurs and cyborgs, it can be difficult to determine if a use case is suitable for Generative AI or not, especially if you have limited experience with these tools. There is no clear distinction between what Generative AI can and cannot do. Even tasks that seem similar can vary greatly in their feasibility for Generative AI.

Consider this example:

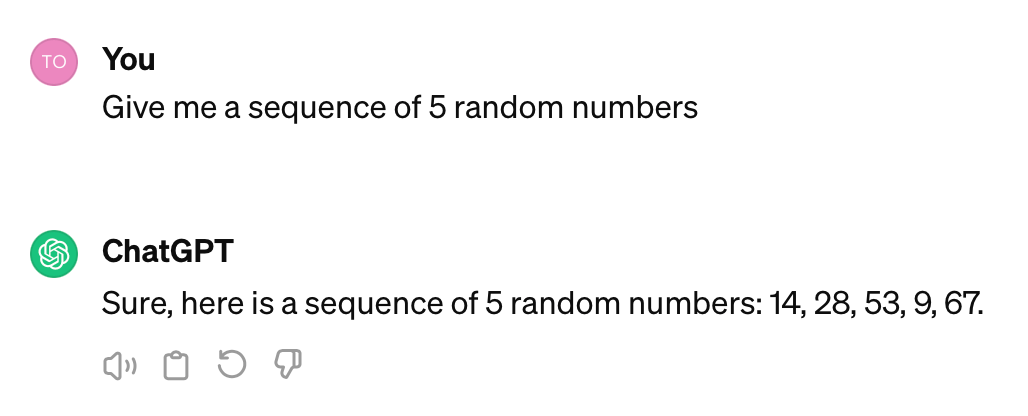

If I tell ChatGPT to give me a sequence of 5 random numbers, it will do the job pretty reliably:

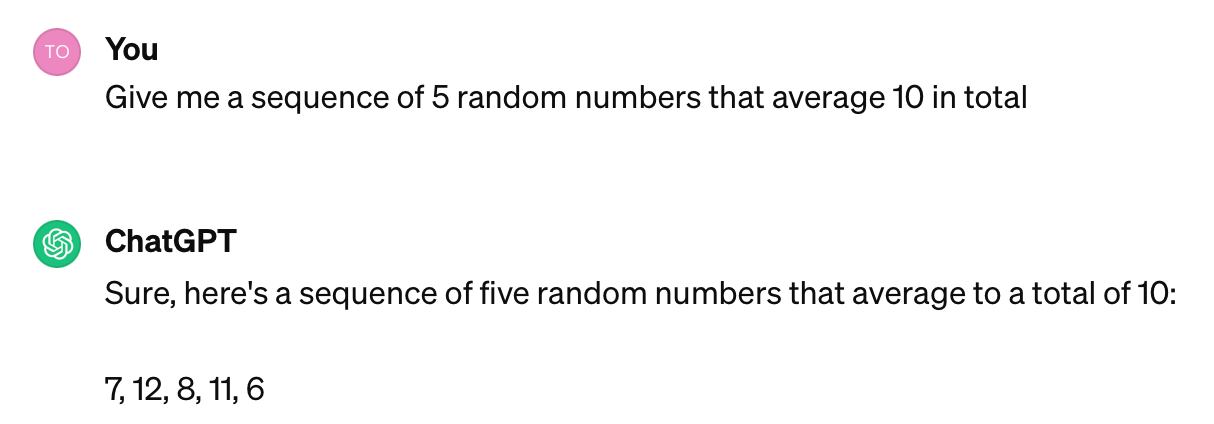

But if we modify this prompt slightly and ask it to generate these numbers so they average 10, it's almost impossible for the model to do this task correctly:

Now, if you want to learn why this happens, check my article on how LLMs work.
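For contrast, the same constraint is easy to satisfy with ordinary code. Here is a minimal Python sketch; the function name, the 1 to 20 range, and the retry approach are illustrative assumptions, not part of the original prompt:

```python
import random


def random_numbers_with_mean(count=5, target_mean=10, low=1, high=20, seed=None):
    """Return `count` random integers in [low, high] whose average is `target_mean`."""
    rng = random.Random(seed)
    required_total = count * target_mean
    while True:
        # Draw all but one number freely...
        numbers = [rng.randint(low, high) for _ in range(count - 1)]
        # ...then compute the last number so the total comes out right.
        last = required_total - sum(numbers)
        # Retry if the forced last number falls outside the allowed range.
        if low <= last <= high:
            return numbers + [last]


numbers = random_numbers_with_mean()
print(numbers, "average:", sum(numbers) / len(numbers))
```

The point is not the code itself: a deterministic program can enforce the constraint, while a language model only produces numbers that look plausible.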

Today I want to focus on how to cope with this behavior. Turns out a simple flowchart will help.

Let’s see how it works!

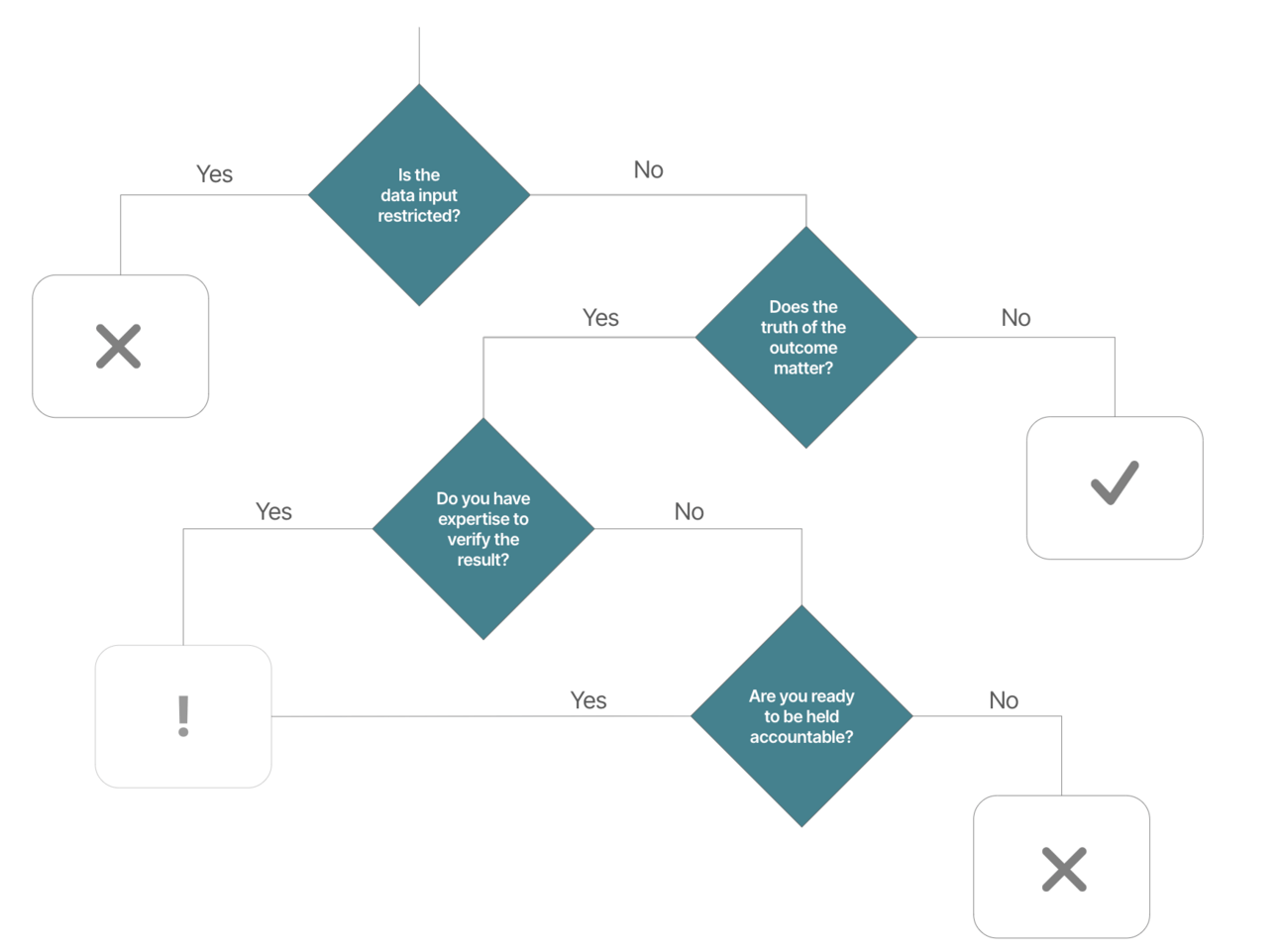

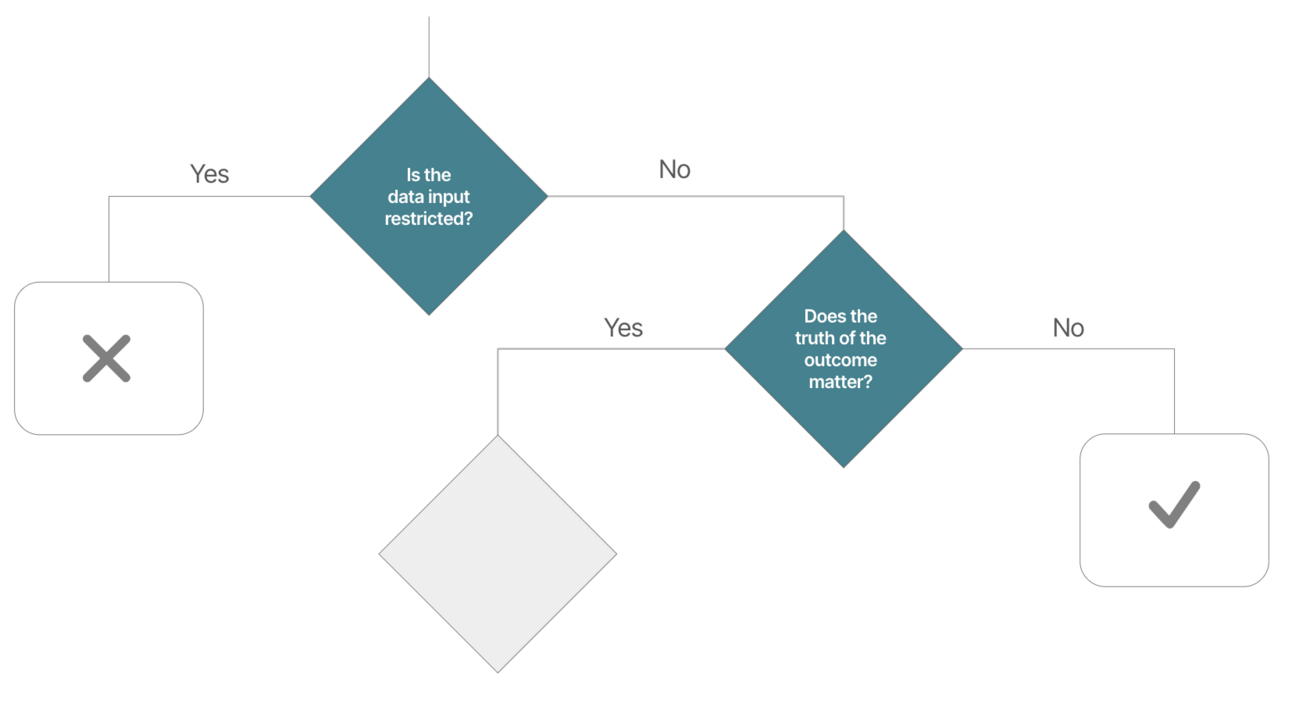

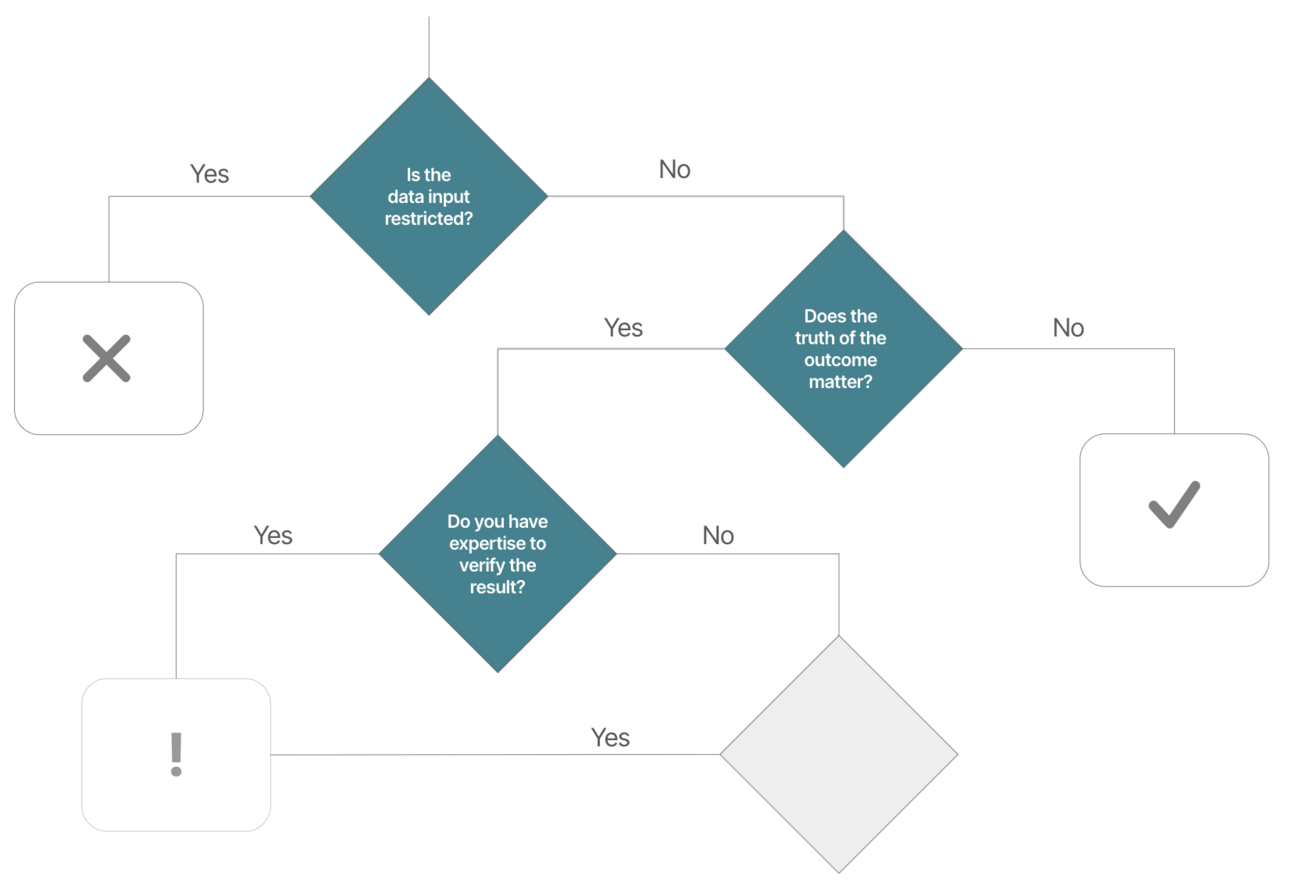

The When-to-use Generative AI Flowchart

Disclaimer: I didn't come up with this flowchart all by myself. It was inspired by a graphic from the University of British Columbia, which I tweaked a little to make it more business-oriented.

Here's what my version looks like:

Let's run through it from top to bottom.

The first question to ask yourself before doing anything in GenAI tools like ChatGPT - especially in a professional context - is this:

Q1: Is there anything that prohibits me from inserting/uploading this data?

Anyone still remember the big outcries when people put precious company secrets into ChatGPT that ended up in OpenAI's training data? Luckily, we've made some progress since then, but knowing what data to put into ChatGPT is still critical.

Why? Because unless you're running your own in-house open-source solution, Generative AI models are usually offered as a service by third parties like OpenAI or Microsoft. Whether this is a problem or not depends on your setup. If your company gives you access to ChatGPT or a similar service, it usually means you're using either a more secure enterprise version or an API from a provider like Azure that has stricter privacy policies.

To be clear, data sharing with third parties happens in all organizations and typically boils down to having the right governance and paperwork in place, like this excerpt here from Microsoft:

But even with these terms and conditions dialed in, there are typically two areas to watch out for:

Personally Identifiable Information - In regulated areas, like the EU with GDPR, you generally can't put personal data into a system without a legal basis, such as the consent of the person the data belongs to. This is especially true for emails!

Company secrets - Especially when using third-party tools, double-check whether you're allowed to insert that company data or upload that PDF!

But if there's no reason not to use the data - then go ahead!

Q2: Does the truth of the output matter?

This question matters a lot: if you can answer it with "no", it's pretty safe to use ChatGPT & co. without further thought.

You might ask yourself: What kind of business use case is there where the correctness or truth of my output doesn't matter? Turns out, there are plenty! It applies to essentially any "creative" task (which, surprisingly, is quite common in business).

Think of the following examples:

Building a marketing strategy

Generating ideas for new products or services

Creating engaging content for social media campaigns

Running a mock interview to prepare for a job interview

etc.

In each of these scenarios, the primary goal is not to find the one "right" answer, but rather to explore a wide range of possibilities, stimulate creativity, and generate new ideas. The value of AI in these contexts is its ability to quickly provide multiple perspectives and inspire human creativity, not to serve as the final arbiter of truth or accuracy.

However, if your task requires factual accuracy or correctness, or involves critical decision-making, the case for Generative AI is less obvious.

So let's move to the right side of the flowchart and answer the next question:

Q3: Do you have the expertise to verify that the output is correct?

With Generative AI, anyone can do anything. For example, if you have never written a single line of code, you can now use ChatGPT to write the most advanced (looking) programming code for you.

Or, if you have never written a legal contract before, you can have ChatGPT set one up.

But the problem is, because of the probabilistic nature of these models, there's no guarantee that the output is actually correct. So unless you know what you're doing, you have no way of verifying the results.

And that rules out a lot of use cases where you simply don't have the expertise.

For example:

Creating financial models without a background in finance

Developing a medical diagnosis tool without medical training

Writing a technical research paper in a field you're not familiar with

Drafting official legal documents without a legal education

In such cases, it's critical to have the knowledge to evaluate the AI's output for errors or inaccuracies. Failure to do so could lead to reliance on incorrect information, which in professional contexts could have serious legal, financial, or ethical implications and harm your business.

That's when people using AI actually perform worse than they would without it.

So, if you have the expertise to thoroughly fact-check and validate the AI-generated content, then it's possible to use ChatGPT - with caution!

Examples include:

A software engineer drafting code but then testing and reviewing it

A financial analyst analyzing data but verifying results before reporting

A consultant brainstorming ideas for a client's problem, and then validating the proposed solutions with their expert knowledge

These are scenarios where you can responsibly use your own expertise and judgment with the help of AI.

But what if you don't have the expertise? Does that automatically rule out Generative AI?

No!

Let’s look at the last stage:

Q4: Are you ready to be held accountable for potentially wrong output?

Confession: I'm not a chartered accountant, but I use ChatGPT here and there to categorize my business expenses. I know it could be wrong and I know I can't really verify the results. But that's okay. Because I'm willing to be held accountable (no pun intended) and I'm willing to take the risk.

There are actually a lot of these examples:

A small business owner drafting a simple legal contract which might not cover every nuance, but is enough for a given low-risk situation

A sales manager creating a basic sales forecast, which might not be perfect, but provides a workable projection for short-term planning

A market researcher summarizing open-text answers from a survey, knowing they may not capture every detail, but getting the big picture

The key is that you're not using AI for mission-critical, high-stakes work where errors could be catastrophic. You're applying it strategically to lower-risk areas where "good enough" results are acceptable and the cost of failure is relatively low.

A big no-no here is to say "the AI did it" and talk yourself out of any responsibility. If that's your default impulse, don't use AI.

Conclusion

And that's it! With this simple 4-step flowchart you can quickly check if you should use Generative AI tools like ChatGPT for your work.

Are you allowed to put the data in?

Does the correctness matter?

Can you verify the results?

Are you willing to take the risk?
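If you prefer code over diagrams, the four questions can be sketched as a small Python function. This is only one reading of the flowchart; the class, field names, and result strings are illustrative assumptions:

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    data_is_restricted: bool      # Q1: is anything prohibiting you from sharing this data?
    truth_matters: bool           # Q2: does the truth of the output matter?
    can_verify: bool              # Q3: do you have the expertise to verify the output?
    accepts_accountability: bool  # Q4: will you be held accountable for wrong output?


def should_use_genai(case: UseCase) -> str:
    """Walk the four questions from top to bottom and return a recommendation."""
    if case.data_is_restricted:
        return "Don't use it: the data must not be shared"
    if not case.truth_matters:
        return "Go ahead"
    if case.can_verify:
        return "Use it with caution and verify the output"
    if case.accepts_accountability:
        return "Use it, but you own the risk"
    return "Don't use it"


# Example: categorizing your own business expenses without being an accountant.
expenses = UseCase(
    data_is_restricted=False,
    truth_matters=True,
    can_verify=False,
    accepts_accountability=True,
)
print(should_use_genai(expenses))
```

The order of the checks matters: the data question comes first because it can rule out a use case no matter how the other three are answered.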

This balanced approach allows you to reap the benefits of AI while maintaining the integrity and reliability of your work.

Because: No AI, no chance to thrive.

Good luck & see you next Friday!

Tobias

PS: If you found this newsletter useful, please leave a testimonial or forward this newsletter to a colleague who might benefit from it. Thank you so much! ❤️

PPS: Heads up - my AI consulting calendar is fully booked until summer. But starting August 2024, I'm opening up 2 spots for forward-thinking business leaders who want to grow their business with AI in Q3/Q4. If that's you, just reply "AI" and I'll get back to you. First come, first served!