I recently saw a magician make a coffee table fly across the room. It was a perfect illusion.

But here's the thing: if the magician could truly do magic, he could make any table fly. In reality, his trick is likely limited to that specific table.

AI, particularly large language models (LLMs), can be a lot like that magician. If you don't know how their "trick" works, you might easily overestimate their capabilities.

But once you understand the mechanics, you can harness their power effectively to augment and empower your team.

Ready to peek behind the curtain?

Let's dive into the world of LLMs!

Want to dive deeper? Join my workshop!

LLMs: Fancy Autocomplete with a Spark of Magic

"Large Language Models are the path to general artificial intelligence, with capabilities even their creators don't fully grasp."

"Large Language Models are just fancy autocomplete."

Both opinions are controversial, yet correct in their own way. I like to think of LLMs as fancy autocomplete with a dash of magic.

Just like the magician I watched, there's a simple explanation (no real magic or AGI), but also an impressive skill (crafting a powerful LLM).

Today, I aim to demystify the principles of LLMs - a subset of Generative AI that produces text output. Understanding how tools like ChatGPT generate both magical and sometimes oddly dumb responses will set you apart from the average user, allowing you to utilize them for more than just basic tasks.

Be aware: This may get a little technical here and there, but stick with me!

Now, let's get into the nitty-gritty of how LLMs actually work.

Don't worry, I'll make it as painless as possible!

LLMs 101: The Basics

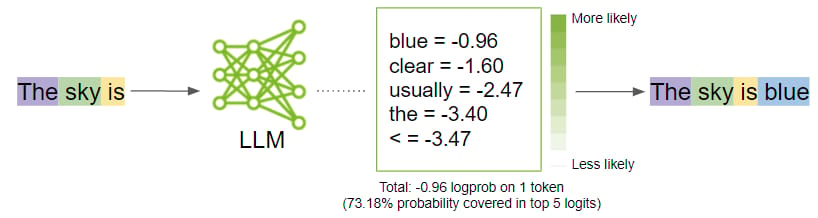

Like any AI, LLMs are essentially performing a prediction task: they predict the next word (technically, a "token", which can be part of a word) in a sequence.

Given an input sequence or "context," the LLM calculates a probability distribution over the possible next words.

For example, if the input is "The sky is", the word "blue" has a high probability of being next, based on what the model has seen in its extensive training data.
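To make "probability distribution" a bit more concrete, here is a minimal sketch in Python. The candidate words and their scores are made-up numbers for illustration, not output from a real model. The `softmax` function is the standard way to turn raw scores into probabilities that add up to 1.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(s - highest) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

# Hypothetical scores for the word after "The sky is" (made-up numbers).
toy_scores = {"blue": 5.0, "clear": 3.5, "falling": 2.0, "green": 0.5}

next_word_probs = softmax(toy_scores)

# Print the candidates from most to least likely.
for word, prob in sorted(next_word_probs.items(), key=lambda kv: -kv[1]):
    print(f"{word:>8}: {prob:.3f}")
```

With these toy scores, "blue" ends up with by far the largest share of the probability, but the other words still keep a small chance.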

The Key to Prediction: Attention

But how exactly does the AI know which word to predict?

In 2017, Google researchers published "Attention Is All You Need", explaining that to accurately predict the next word, machines should focus on certain parts of the input sequence more than others. This approach, known as attention, is now a core component of modern LLMs.
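If you're curious what "focusing on certain parts" can look like in numbers, here is a heavily simplified sketch. It only computes attention weights (scaled dot-product scores passed through a softmax) for a single query. The word vectors are made-up two-dimensional numbers; a real model learns vectors with far more dimensions and uses the weights to blend information from the words.

```python
import math

def softmax(values):
    """Normalize a list of scores into weights that sum to 1."""
    highest = max(values)
    exps = [math.exp(v - highest) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Score one query against every key (scaled dot product), then normalize."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

# Hypothetical vectors for each input word (made-up numbers).
words = ["What", "is", "the", "capital", "of", "Germany"]
keys = [[0.1, 0.0], [0.0, 0.1], [0.1, 0.1], [1.0, 0.8], [0.1, 0.2], [0.9, 1.2]]
query = [1.0, 1.0]  # stands in for "which words matter for the next prediction?"

weights = attention_weights(query, keys)
for word, weight in zip(words, weights):
    print(f"{word:>8}: {weight:.2f}")
```

In this toy setup, "capital" and "Germany" receive most of the weight, while filler words like "is" and "the" receive very little.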

Understanding attention is crucial:

If you clearly explain what you want to an LLM, you'll get a better response. The details you provide help the LLM determine the best answer.

This type of instruction is called a prompt.

LLMs don't automatically improve with use. They always start fresh, without remembering preferences or past conversations. To get better outputs, you must adapt to the LLM, not the other way around!

Let's walk through an example to see how the LLM "thinks."

LLM Step-by-Step Walkthrough

Let's prompt our LLM, just as we would in ChatGPT, with: "What is the capital of Germany?"

Internally, the LLM calculates probabilities for the next likely words, given the input sequence (context). This could be "Until, Berlin, The, What, A, For, etc."

Here's the catch:

The choice of the next word is not deterministic. For the same prompt, the model calculates essentially the same probability distribution, but the next word is drawn from that distribution at random, so you can get a different answer each time.

Why this randomness? I won't dive too deep, but this random selection step ensures that the outputs are "novel," not just repeating the training data. This built-in feature allows LLMs to generate original outputs.

Without it, they'd just parrot the same text from their training, making them far less useful.

It really is this randomness that allows for the "generative" in generative AI.
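Here is a small sketch of that randomness, assuming made-up probabilities for the candidate words from our example. It compares drawing a word at random (weighted by probability) with always taking the single most likely word. The function names are hypothetical helpers, not part of any LLM library.

```python
import random

# Hypothetical next-word probabilities for "What is the capital of Germany?"
next_word_probs = {
    "Berlin": 0.55, "The": 0.30, "Until": 0.05,
    "What": 0.04, "A": 0.03, "For": 0.03,
}

def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = list(probs.values())
    return rng.choices(words, weights=weights, k=1)[0]

def greedy_next_word(probs):
    """Always take the single most likely word (no randomness)."""
    return max(probs, key=probs.get)

rng = random.Random()  # unseeded, so the picks differ from run to run
picks = [sample_next_word(next_word_probs, rng) for _ in range(10)]

print("sampled:", picks)
print("greedy: ", greedy_next_word(next_word_probs))
```

Run it a few times: the sampled list changes, while the greedy pick is always the same word.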

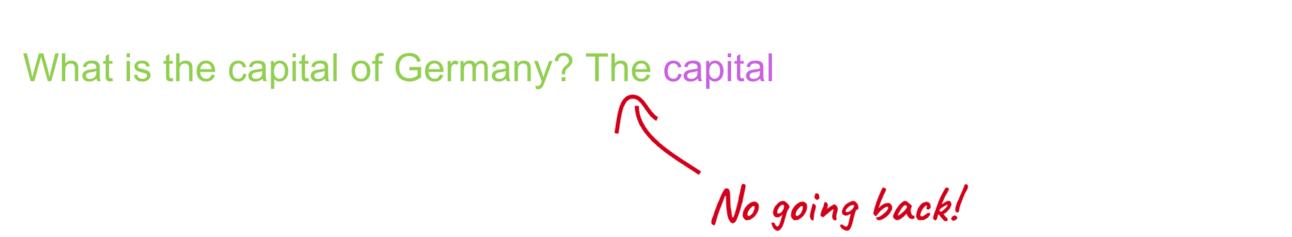

No Turning Back

Let's say the model chooses "The" as the next word.

Now an interesting thing happens: once the model generates a prediction, it can't go back.

When the LLM selects "The", it becomes part of the input sequence. The new context is now "What is the capital of Germany? The", and the LLM must predict the next word based on this.

There's no undoing the choice of "The"!

The cycle continues, and the LLM produces the output sentence word by word:

And so on…

And so forth…

Until…

The model decides the prediction is complete or reaches the maximum number of tokens to predict.
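The whole cycle fits in a few lines of Python. This is only a sketch: the lookup table below is a hypothetical stand-in for a trained model, and it only looks at the last word, whereas a real LLM considers the entire context. The loop itself (predict, append, repeat, stop) is the part that matters.

```python
# Hypothetical stand-in for a trained model: maps the last word of the
# context to the next word. "<end>" marks "the prediction is complete".
TOY_NEXT_WORD = {
    "Germany?": "The",
    "The": "capital",
    "capital": "of",
    "of": "Germany",
    "Germany": "is",
    "is": "Berlin.",
    "Berlin.": "<end>",
}

def generate(prompt, max_tokens=20):
    """Produce output word by word until <end> or the token limit."""
    context = prompt.split()
    output = []
    for _ in range(max_tokens):
        next_word = TOY_NEXT_WORD.get(context[-1], "<end>")
        if next_word == "<end>":
            break  # the model decided the prediction is complete
        context.append(next_word)  # the choice is now part of the input
        output.append(next_word)
    return " ".join(output)

print(generate("What is the capital of Germany?"))
print(generate("What is the capital of Germany?", max_tokens=3))
```

The first call runs until the toy model signals the end. The second stops early because it hits the maximum number of tokens.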

You see this behavior when ChatGPT "types" its response in real-time.

That's essentially how LLMs work.

Next word prediction.

This is also why tools like ChatGPT can find their own mistakes when asked to double-check their output. As they write, they can only add the next word; they can't go back and revise what they've already written. To get them to "review" their work, you must explicitly ask, and they'll likely catch their errors (although that's not guaranteed).

The 'Secret Sauce' of LLMs

There's a secret sauce that makes this whole process work so well, generating astonishing results that stretch the definition of "fancy autocomplete."

To understand it, let's look at an LLM that has just completed training on a large corpus of data (e.g., the internet, a whole library of books, and more).

This is what AI people call a "base model" or "foundation model".

If you gave this base model two questions, it would likely return a third question, because it's trained to continue the pattern of the input sequence.

The first GPT models up to GPT-3 worked this way.

But that's not very useful.

When we ask an LLM two questions, we want two (ideally correct) answers.

So, OpenAI researchers came up with a trick.

They took a smaller dataset of question-answer pairs and used it to "fine-tune" their base model. Instead of giving another question, it would give an answer. On top of that, to improve the answers, they hired people to manually vote on the best responses.

As a result, OpenAI created an "instruction" model - a large language model that does not just autocomplete, but actually follows instructions. So if we ask two questions, we get two answers.
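To give a rough feel for why question-answer pairs change the behavior, here is a sketch of how such pairs could be turned into training text. The "Question:" and "Answer:" labels and the example pairs are my own assumptions for illustration, not the actual format OpenAI used.

```python
# Hypothetical question-answer pairs; real fine-tuning sets are far larger.
qa_pairs = [
    ("What is the capital of Germany?", "The capital of Germany is Berlin."),
    ("What is the capital of France?", "The capital of France is Paris."),
]

def to_training_text(question, answer):
    """Join a pair into one sequence (the labels are made up for this sketch).

    After seeing many of these, the most likely continuation of a
    question is an answer, not another question.
    """
    return f"Question: {question}\nAnswer: {answer}"

training_texts = [to_training_text(q, a) for q, a in qa_pairs]
print(training_texts[0])
```

The model is still doing next word prediction. The fine-tuning data simply teaches it that what follows a question is an answer.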

This was the breakthrough moment for ChatGPT. It worked so well that people often forgot they were interacting with an AI doing "next word prediction."

This is where the magic happened - and where many people got the wrong idea about AI systems.

Because ChatGPT follows instructions so well and behaves confidently, you might think it "knows" what it's doing. In practice, this can lead to disappointment and pitfalls. ChatGPT doesn't really "know" anything. It has no self-awareness or consciousness.

This is super critical to remember.

So instead you should think of the model as "dumb", but at the same time incredibly useful when applied to the right things.

The bottom line: LLMs predict the next word in a sequence. Your main control over the output is the prompt.

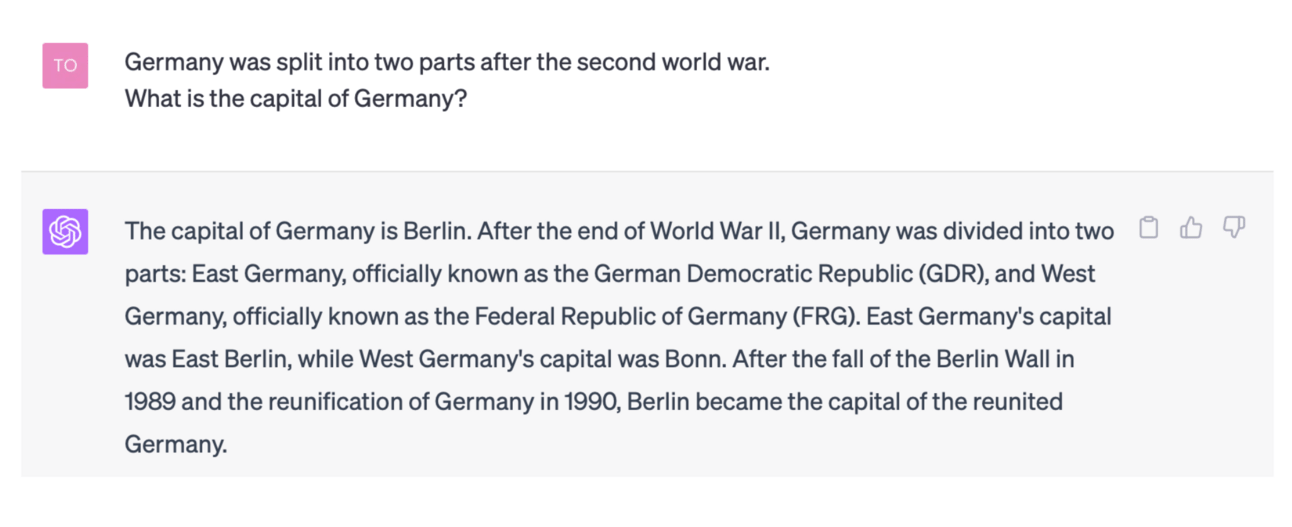

As the example shows, when we provide historical context in addition to asking for the capital, ChatGPT's answer is more detailed than when it just outputs the capital's name.

That's the essence of prompt engineering. Be very specific about what you want. The way to control the behavior of an LLM is to control the prompt you give it.
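One simple way to practice this is to assemble your prompt from explicit parts. The helper below is a hypothetical sketch of that habit, not an official API: `build_prompt` and its parameters are names I made up for illustration.

```python
def build_prompt(question, context=None, answer_format=None):
    """Combine a question with optional context and format instructions."""
    parts = []
    if context:
        parts.append(f"Context: {context}")
    parts.append(f"Question: {question}")
    if answer_format:
        parts.append(f"Answer format: {answer_format}")
    return "\n".join(parts)

# A vague prompt: the model has to guess what kind of answer you want.
vague = build_prompt("What is the capital of Germany?")

# A specific prompt: same question, but with context and a desired format.
specific = build_prompt(
    "What is the capital of Germany?",
    context="I am writing a short history lesson for students.",
    answer_format="Two sentences, including when the city became the capital.",
)

print(vague)
print("---")
print(specific)
```

Both prompts ask the same question, but the second one gives the model much more to work with when predicting a useful answer.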

Conclusion

LLMs are powerful tools, but they're not magic. They predict the next word in a sequence based on patterns in their training data. The key to getting the most out of them is understanding how they work and crafting effective prompts.

But the bigger picture is this: Anyone can learn to use AI, not just experts. And in today's world, that's not optional. It's a necessity.

Because if you don't, someone else will. And they'll be the ones with the advantage. So, the choice is yours. Embrace AI and augment your potential. Or risk being left behind.

In the age of AI, there's a new reality: No AI, No Chance.

The future is here. Make sure you're part of it.

Until next Friday,

Tobias

Want to learn more? Here are 3 ways I could help:

Check my books: AI-Powered Business Intelligence (O'Reilly) and Augmented Analytics.

Join my LLM Principles workshop featuring a mix of theory and practical, hands-on exercises and use cases.