After Example 1 and Example 2, here’s another case of profitable AI – today from insurance compliance.

Same disclaimer applies:

The characters and events described here are fictitious. Any similarity to actual companies or events is purely coincidental.

So don't treat this as an actual case study, but rather as a realistic example based on my consulting work.

The starting point:

"Every year we spend months producing regulatory reports. AI can write documents. So let's have it draft them for us."

Is this profitable AI?

Let's find out!

The Situation

We're at a mid-sized commercial insurer in Germany, regulated by BaFin under Solvency II.

As in any finance business, and in insurance in particular, the reporting load is heavy – especially in Europe. The big ones include the SFCR (Solvency and Financial Condition Report, a public annual report, 50+ pages), the RSR (Regular Supervisory Report, a confidential supervisory report), and dozens of QRTs (Quantitative Reporting Templates, standardized data templates submitted quarterly and annually). On top of that, there's a big bowl of alphabet soup including ORSA, SFDR, and ad-hoc requests.

Reports typically mix quantitative data – tables, ratios, KPIs – with qualitative explanations. The process works roughly as follows:

Each department writes their sections in Word or fills in their QRT templates in Excel.

They send their inputs to a central contact person (who spends most of their time following up with everyone).

Someone stitches everything together, makes sure page 47 doesn't contradict page 12, and flags inconsistencies back to the authors.

Repeat until the deadline.

(For everyone outside finance, it's actually crazy that this works.)

Work on the SFCR alone often starts months before the filing deadline. The email chain involves more people than a soccer team. Everyone agrees the process is painful and that "we could do better".

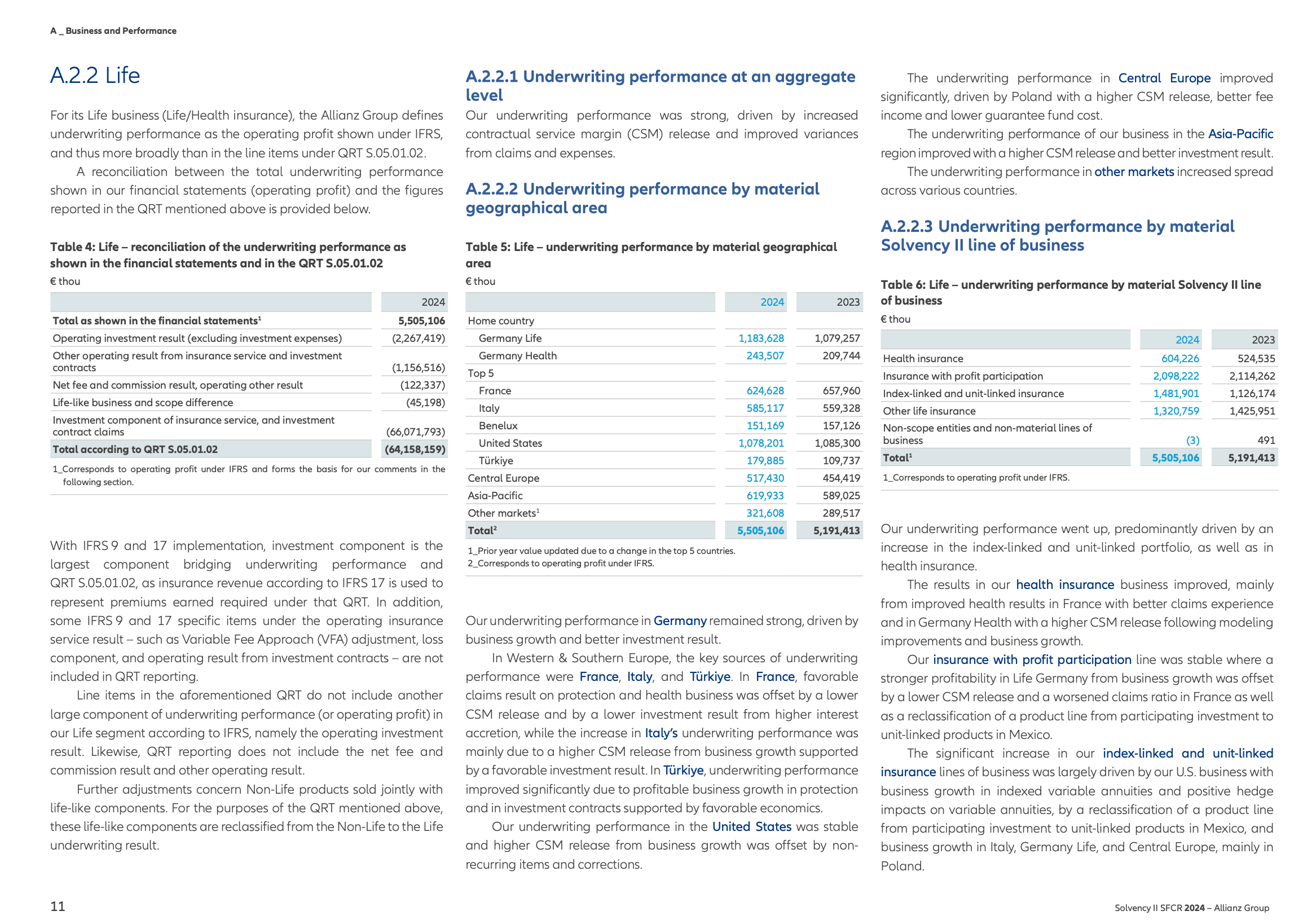

Page 11 of 240. (Example from Allianz Group SFCR, publicly available)

The First Idea

The company started buying Microsoft Copilot licenses a few months ago. It took approximately 5 seconds until the first person involved in the process above uploaded a document with the source information and another document that needed to be filled out, asked Copilot to do the work, and was amazed by the first result.

They pitched the idea to their manager: "Let's use AI to do our reports!"

The team tries it with the SFCR.

The Problem

Three things go wrong almost immediately:

1. Numbers can't be fully trusted. Copilot generates plausible-looking financial figures. Very often the numbers are right, but sometimes they're not – especially when something needs to be recalculated or reformatted (did I hear someone say currencies?). In a document that goes to BaFin, "mostly right" is the same as wrong.

2. Formatting issues. The reporting documents require a specific format, but Copilot just doesn't "get" it. Sometimes the formatting is perfect, sometimes it's close, and sometimes Copilot refuses to work with the provided template altogether. Non-technical users are overwhelmed by the question of whether Copilot actually filled in the provided document or created a new one from scratch that merely resembles it.

3. Non-cohesive narrative. Regulatory writing follows a specific tone – precise, yet cautious. Copilot's output oscillates between generic and useful. Consistent use of internal terminology often doesn't work. The team spends weeks tweaking prompts that grow longer and longer. They encounter the Whack-a-Mole effect: once some parts work, others stop working.

As a result, the "AI draft" creates at least as much work as it saves, if not more. Someone still has to line-edit 60 pages of generated text while also verifying every single number.

This is why Productivity AI doesn't scale for structured, high-stakes documents. The tool can write, but it can't be held accountable for what it writes. And in compliance reporting, accountability is the whole point.

The Pivot

When I saw this use case, I encouraged the team to stop thinking of their reports as "documents to generate" and start thinking of them as "templates to fill".

Most regulatory reporting follows a fixed structure:

The SFCR has five sections, each with defined subsections.

QRTs follow strict templates down to the cell.

Even the narrative RSR follows a predictable structure year over year.

So instead of asking AI to write a report from scratch, the team decomposed their entire reporting suite into discrete blocks.

Each block falls into one of three categories:

Block A: Data blocks – pulled directly from source systems. No AI needed. A deterministic pipeline queries the database, formats the numbers, and drops them into the template. These are never generated, but extracted.

Block B: Narrative blocks – such as risk profile descriptions, or methodology explanations. These are generated by AI, but with tight constraints: the prompt includes last year's approved text, the current data points that must be referenced, and explicit formatting rules. During report generation, AI-written content is flagged so a human needs to approve it.

Block C: Consistency checks – an automated layer that runs after all blocks are assembled. Are numbers consistent? Is every number in a narrative section traceable to a number in a data table? Ideally, this layer runs across documents, not just within them. But ensuring consistency within one document alone is already helpful (and replaced a lot of fragile Excel macros).
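To make the traceability question from Block C concrete, here is a minimal sketch of such a check. Everything in it is an assumption for illustration: the `DATA_TABLE` values, the function names, and the English-style number formatting. A real checker would also need to handle years, footnote markers, rounding, and German number formats.

```python
import re

# Hypothetical data block: figures exactly as they appear in the report's tables.
DATA_TABLE = {
    "Solvency ratio (%)": "187",
    "Own funds (EUR m)": "1,240.5",
    "SCR (EUR m)": "663.4",
}

# Matches 187, 1,240.5 or 663.4 (English-style thousands separators assumed).
NUMBER_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")


def normalize(raw: str) -> float:
    """Turn '1,240.5' into 1240.5 so formatting differences don't matter."""
    return float(raw.replace(",", ""))


def untraceable_numbers(narrative: str, table: dict[str, str]) -> list[str]:
    """Return every number in the narrative that has no match in the data table."""
    known = {normalize(value) for value in table.values()}
    return [m for m in NUMBER_PATTERN.findall(narrative) if normalize(m) not in known]


good = (
    "The solvency ratio stood at 187%, with own funds of EUR 1,240.5 million "
    "against an SCR of EUR 663.4 million."
)
bad = "The solvency ratio stood at 178%, backed by own funds of EUR 1,240.5 million."

print(untraceable_numbers(good, DATA_TABLE))  # []
print(untraceable_numbers(bad, DATA_TABLE))   # ['178']
```

The point of the sketch is that this layer is rule-based. It doesn't ask a model whether the numbers look right. It looks them up.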

Example workflow: Each block is generated independently, then assembled.

The key shift is that there’s not one AI generating a whole report. It's multiple AI systems (Autopilots) that each work on one block at a time, within strict boundaries. The solution stitches the blocks together. A rule-based validation layer catches inconsistencies before a human ever reviews the assembled document.
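A rough sketch of what "one block at a time, then stitched together" could look like in code. The `Block` class, the function names, the section IDs, and the `fake_generate` stand-in are all hypothetical. In a real setup, the `generate` callable would wrap whatever model API the team uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Block:
    block_id: str
    kind: str             # "data" or "narrative"
    content: str
    needs_approval: bool  # AI-written content must be signed off by a human


def build_data_block(block_id: str, values: dict[str, float]) -> Block:
    """Deterministic: format extracted values, no AI involved."""
    lines = [f"{name}: {value:,.1f}" for name, value in values.items()]
    return Block(block_id, "data", "\n".join(lines), needs_approval=False)


def build_narrative_block(block_id: str, generate: Callable[[str], str], prompt: str) -> Block:
    """AI-generated text is always flagged for human approval."""
    return Block(block_id, "narrative", generate(prompt), needs_approval=True)


def assemble(blocks: list[Block]) -> str:
    """Stitch blocks together in template order, marking pending approvals."""
    parts = []
    for block in blocks:
        marker = " [PENDING APPROVAL]" if block.needs_approval else ""
        parts.append(f"{block.block_id}{marker}\n{block.content}")
    return "\n\n".join(parts)


def fake_generate(prompt: str) -> str:
    """Stand-in for a model call, so the sketch runs without any API."""
    return f"(draft text for: {prompt})"


blocks = [
    build_data_block("E.1 Own funds", {"Own funds (EUR m)": 1240.5}),
    build_narrative_block("C.1 Underwriting risk", fake_generate, "underwriting risk profile"),
]
report = assemble(blocks)
print(report)
```

Each block is built by its own function with its own inputs, so a failure in one narrative block never touches a data block.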

Once the approach worked for one report, adapting it to other reports would be straightforward because the team could reuse the same architecture and apply it to different templates.

The Value on Paper

Let’s estimate how the theoretical value stacks up across the full reporting suite:

| Item | Estimate |
| --- | --- |
| Core reporting team | 5 people |
| Contributors from actuarial, finance, risk | 3 people |
| Hours spent on report production per year | 1,000+ |
| Hours spent on review, cross-referencing, triple-checking | 500+ |
| Total hours per year | 1,500+ |
| Blended internal hourly rate | $90 |
| Theoretical annual value | $135,000 |

Those 500 hours deserve a closer look. In a regulated environment, no one signs off on a document they haven't personally verified, and triple-checking entries causes most of the stress. So the real cost isn't just the writing, but the anxiety tax of making sure that assembling documents from different people, systems, and timelines actually worked out.

But even here, $135K is the value ceiling, not the actual business case.
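For anyone who wants to plug in their own numbers, the ceiling is plain arithmetic. The variable names are mine, and the hour counts are the lower bounds from the estimate ("1,000+" and "500+"), so treat the result as a rough figure.

```python
production_hours = 1_000   # report production per year (lower bound)
review_hours = 500         # review, cross-referencing, triple-checking (lower bound)
hourly_rate_usd = 90       # blended internal rate

total_hours = production_hours + review_hours
value_ceiling_usd = total_hours * hourly_rate_usd
review_share = review_hours / total_hours

print(f"Total hours: {total_hours:,}")               # Total hours: 1,500
print(f"Value ceiling: ${value_ceiling_usd:,}")      # Value ceiling: $135,000
print(f"Share spent on checking: {review_share:.0%}")  # Share spent on checking: 33%
```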

The Business Case

Applying the 10K Threshold: would this solution deliver a recurring $10K value – and if so, how often?

The team landed on a conservative estimate of $10K per quarter (= $40K per year). The reasoning:

Automated consistency checks would eliminate a big chunk of the triple-checking.

Report production time could be reduced by up to 70%.

The "central coordination" role (the person stitching inputs together, following up, and flagging errors) would be transformed. The organization could free up 0.5 FTE (~$75K salary cost) for other valuable work.

That's how $40K per year became the economic floor, $135K the economic ceiling, and freeing up 0.5 FTE the target.

One important caveat: most of these savings only fully materialize once the data blocks (Block A) are reliably automated. And that's not an AI project, but a data engineering project (which often gets deprioritized because there's no visible business case for it).

This is where the AI piece becomes an enabler. By building the narrative architecture and consistency layer first, the team creates something the data platform team can deliver into. So "Clean up the data exports" isn't an abstract IT initiative but a concrete requirement with a clear destination. The reporting template is waiting.

In other words: the AI project pulls the data project forward.

For now, the AI project would focus on Blocks B and C.

The Cost Cap

With a floor of $40K per year, the running costs must stay well below that. We set a cap of $10,000 per year – covering API costs for processing narrative blocks across multiple report cycles throughout the year, and regular maintenance without new feature development. That leaves $30K in real value earned every year.

With a 24-month payback target, the development budget becomes $60K (2 years × $30K net value per year).

Napkin Math

| Item | Amount |
| --- | --- |
| Minimal impact | $40,000 per year |
| Development (template decomposition, narrative prompt engineering, consistency checker) | $50,000 |
| Buffer | $10,000 |
| Monthly running cost | ~$800/month |
| Annual running cost | ~$10,000 |
| Year 1 total | ~$70,000 |
| Year 2+ annual cost | ~$10,000 |
| Payback period | ~2 years after go-live |
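The napkin math can be replayed in a few lines. This is a sketch under two assumptions of mine: the value accrues evenly from go-live, and the buffer gets spent in full. Variable and function names are illustrative.

```python
annual_value_floor = 40_000    # conservative value estimate per year
annual_running_cost = 10_000   # cost cap: API usage plus maintenance
development_cost = 50_000
buffer = 10_000

net_annual_value = annual_value_floor - annual_running_cost   # 30,000
upfront_budget = development_cost + buffer                     # 60,000
year_1_total = upfront_budget + annual_running_cost            # 70,000
payback_months = upfront_budget / net_annual_value * 12        # 24.0


def cumulative_net(months_after_go_live: int) -> float:
    """Net position after running costs, assuming value accrues evenly."""
    return net_annual_value / 12 * months_after_go_live - upfront_budget


print(f"Payback after {payback_months:.0f} months")
for months in (12, 24, 36):
    print(f"Month {months}: {cumulative_net(months):+,.0f}")
```

If the value comes in at the floor, the project breaks even at month 24. Anything above the floor, or any unspent buffer, pulls that date forward.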

What Must Be True

1. The report structures must be genuinely stable. If the regulator overhauls the template every year, the decomposition work resets.

2. The data projects must follow. The narrative architecture and consistency layer deliver real value on their own, but they're not the end state. The full savings only materialize once the data blocks (Block A) are automated too. If the data platform work stalls, the AI project becomes a nice improvement instead of a transformation.

3. The reporting team must own the prompts. If the prompt for the risk profile section says "describe the risk profile," you'll get generic output. If it says "compare this year's underwriting risk concentration to last year's, reference the table in block C.2, and flag any deviation above 5%" – you'll get something useful. These constraints come from domain expertise, not IT.
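Here is one way the reporting team's constraints could be turned into a prompt. It's a sketch, not the team's actual setup: `NarrativeSpec`, `build_prompt`, and the example figures are made up. One design choice worth noting is that the 5% deviation flag is computed in code and handed to the model, rather than asking the model to do the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class NarrativeSpec:
    section: str
    instruction: str
    last_year_text: str
    data_points: dict[str, tuple[float, float]]  # name -> (last year, this year)
    deviation_threshold: float = 0.05            # flag changes above 5%


def relative_change(previous: float, current: float) -> float:
    """Year-over-year change; assumes the previous value is non-zero."""
    return (current - previous) / previous


def build_prompt(spec: NarrativeSpec) -> str:
    lines = [
        f"Section: {spec.section}",
        f"Task: {spec.instruction}",
        "",
        "Data points you must reference:",
    ]
    for name, (previous, current) in spec.data_points.items():
        change = relative_change(previous, current)
        flag = " [DEVIATION ABOVE THRESHOLD]" if abs(change) > spec.deviation_threshold else ""
        lines.append(f"- {name}: {previous} -> {current} ({change:+.1%}){flag}")
    lines += [
        "",
        "Last year's approved text (match tone and terminology):",
        spec.last_year_text,
        "",
        "Rules: use only the numbers listed above; do not introduce new figures.",
    ]
    return "\n".join(lines)


spec = NarrativeSpec(
    section="Underwriting risk",
    instruction="Compare this year's underwriting risk concentration to last year's.",
    last_year_text="Underwriting risk concentration remained within the defined limits.",
    data_points={
        "Top-5 client share of premiums (%)": (22.0, 24.5),
        "Combined ratio (%)": (96.0, 97.0),
    },
)
prompt = build_prompt(spec)
print(prompt)
```

The fields of the spec are exactly the things only the reporting team knows: which figures matter, what last year's approved wording was, and what counts as a notable deviation.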

The Prototype

Take the most recent published SFCR. Decompose it into blocks. Pick 5 representative narrative blocks and build the pipeline for those 5 only. Run the consistency checker across the whole report.

Then put the output next to the original and ask: would this have saved time or created more work?

If the narrative blocks need only light editing, you're on the right track – extend to the remaining blocks. If the output requires heavy rewriting, refine the prompts and constraints before scaling.
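"Light editing" versus "heavy rewriting" can be made a bit less subjective by measuring how much of a generated block survives human editing. The sketch below uses Python's standard `difflib` for a word-level comparison. The 20% cut-off is an arbitrary assumption of mine, not a benchmark, and the function names are illustrative.

```python
from difflib import SequenceMatcher


def edit_effort(generated: str, approved: str) -> float:
    """Rough share of the text that changed: 0.0 = untouched, 1.0 = fully rewritten."""
    generated_words = generated.split()
    approved_words = approved.split()
    return 1.0 - SequenceMatcher(None, generated_words, approved_words).ratio()


def verdict(effort: float, light_edit_limit: float = 0.2) -> str:
    """Map the measured effort to the two outcomes of the prototype."""
    if effort <= light_edit_limit:
        return "extend to remaining blocks"
    return "refine prompts first"


generated = "The underwriting risk profile remained stable during the reporting period."
approved = "The underwriting risk profile remained broadly stable during the reporting period."

effort = edit_effort(generated, approved)
print(f"{effort:.1%} changed -> {verdict(effort)}")
```

A number like this doesn't replace the reviewer's judgment, but it makes it easier to compare the 5 prototype blocks with each other and to see whether prompt changes actually help.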

The Verdict

With a clear scope, conservative value threshold and defined cost caps, this is a solid profitable AI case.

Decision: Spend 20% of the development budget and build the first prototype.

By the way, this approach of course isn't limited to insurance. Any organization that regularly produces structured reports from multiple contributors can apply the same Block A/B/C architecture.

I hope you enjoyed this example.

If you found it useful, leave a note or reply to this email and I'll make sure to share more examples like this in the future.

See you next Saturday!

Tobias

P.S. I wrote a book on how to find and evaluate AI opportunities like this one. It’s called The Profitable AI Advantage.