Today I want to share another example of a profitable AI case – this time in private equity.

Again, this isn't an actual case study, but a "based on a true story" article grounded in my own consulting work.

In other words:

The characters and events described in this article are fictitious. Any similarity to actual companies or events is purely coincidental.

The starting point: "We get 100 investment proposals per month. AI can read PDFs. So let's have it summarize them for us."

Profitable AI?

Let's find out!

The Situation

This is a small private equity firm (about 50 employees). They’ve been in the business for quite a while and have earned a good reputation in their industry.

Their deal flow is roughly 100 investment proposals per month – meaning the team receives about 20-30 pitches per week, each a PDF of anywhere between 20 and 50 pages. (These are just the “short” versions.)

Every proposal needs an initial assessment: is this worth a closer look or not?

Currently, five investment managers split this work. Reading, skimming, prioritizing. The total effort is hard to quantify, but the team estimates that each manager spends about 3 hours per week just on initial screening — before any real evaluation begins.

That's ~15 hours per week of highly paid people doing what is essentially sorting.

The First Idea

After a quick brainstorming session on whether and how AI could help, the team came up with the following:

"ChatGPT can already read long PDFs. Let's build a solution that delivers executive summaries to our inbox, so our managers can scan 100 proposals in an afternoon instead of a week."

Sounds like the perfect AI use case!

The Problem

With the summarization approach, three problems showed up almost immediately, even before we built the first prototype:

1. You still can't trust it 100%. While generative AI is good at summarizing documents, it’s still far from perfect – especially when the input documents vary widely and include financial metrics. Getting even a “small” detail wrong in the summary, like a financial projection, can have a massive impact.

2. Losing intuition. PE firms have spent decades training investment managers to develop a "feel" for proposals by reading them. A summary strips all of that away and exposes the firm to the risk of losing this skill as it rolls out the AI solution.

3. Most of it is marketing content anyway. Summarizing a lot of marketing language gives you a shorter version of marketing language, which in many cases isn’t that helpful.

So the summary idea died fast during the Concept phase. Not because AI can't summarize – it can. But because the output doesn't survive the "would you get any real value from this?" test.

The Pivot

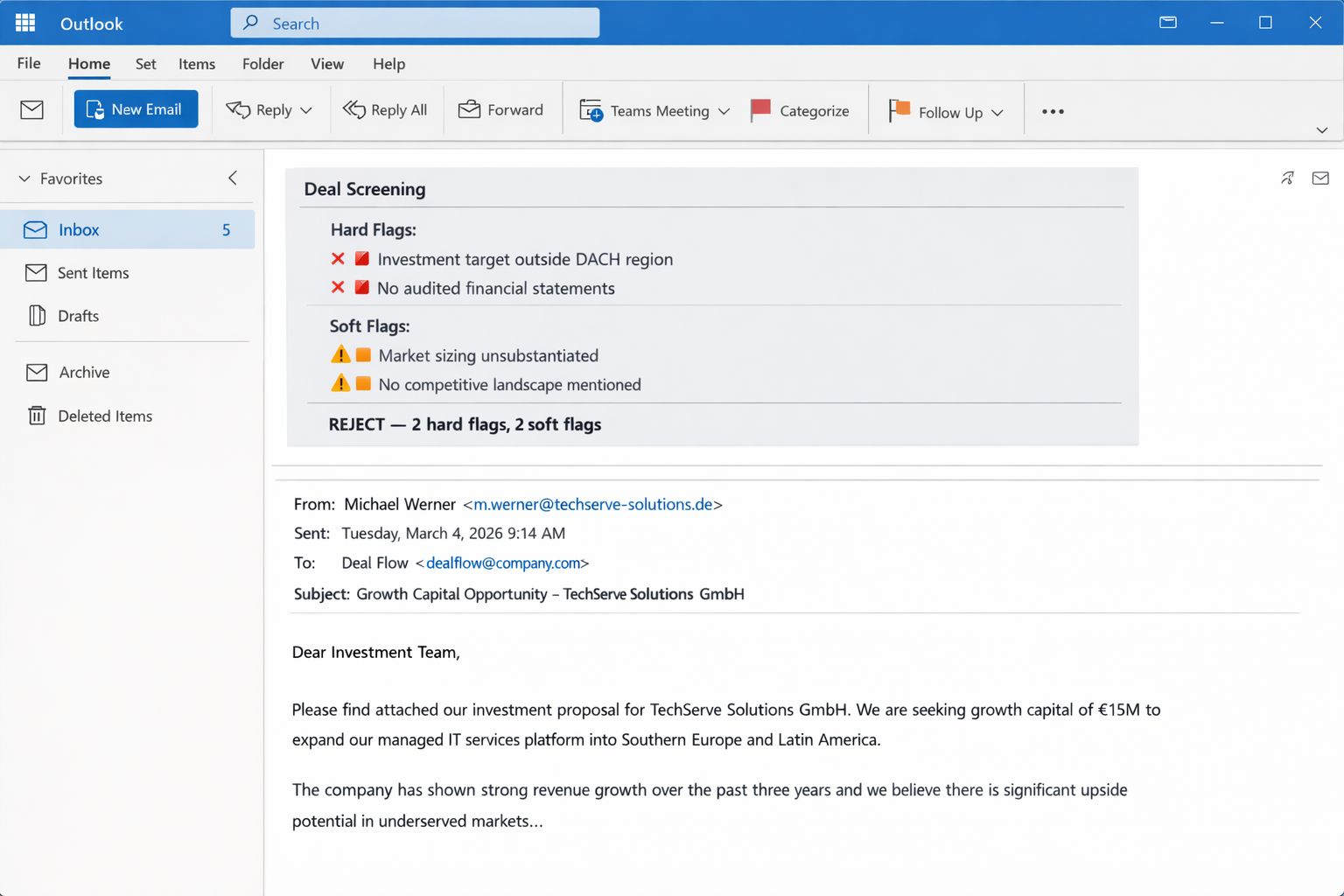

The light bulb moment was a flip in the solution approach: Instead of building something that tells us what's IN the proposal, what if we built something that tells us what's WRONG with it?

So instead of summarizing, the AI would scan every incoming proposal against a red flag checklist.

Some flags were hard criteria that would automatically disqualify a proposal, such as:

Investment target outside a defined geographic region

Deal size outside the investment range

Others would be softer signals that warrant closer scrutiny:

Vague or unsubstantiated market sizing

No mention of competition

Reason for sale
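To make the hard/soft distinction concrete, here is a minimal Python sketch of how such a checklist could be applied once the relevant facts have been pulled out of a PDF. Everything in it is an assumption for illustration: the `Proposal` fields, the `TARGET_REGIONS` and `DEAL_RANGE_MUSD` values, and the rule that a missing reason for sale counts as a soft flag. The extraction step itself (PDF parsing plus an LLM call) is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical facts extracted from one proposal PDF (extraction not shown)
@dataclass
class Proposal:
    name: str
    region: str
    deal_size_musd: float          # deal size in millions of USD
    market_sizing_sourced: bool    # is the market size backed by a source?
    mentions_competition: bool
    reason_for_sale: Optional[str]

# Illustrative firm parameters, not taken from the article
TARGET_REGIONS = {"DACH", "Benelux"}
DEAL_RANGE_MUSD = (5.0, 50.0)

@dataclass
class ScreeningResult:
    disqualified: bool
    hard_flags: list[str] = field(default_factory=list)
    soft_flags: list[str] = field(default_factory=list)

def screen(p: Proposal) -> ScreeningResult:
    hard: list[str] = []
    soft: list[str] = []

    # Hard criteria: any single hit disqualifies the proposal
    if p.region not in TARGET_REGIONS:
        hard.append(f"region '{p.region}' outside target regions")
    low, high = DEAL_RANGE_MUSD
    if not low <= p.deal_size_musd <= high:
        hard.append(f"deal size {p.deal_size_musd}M outside {low}-{high}M range")

    # Soft signals: attached for the manager, never a rejection on their own
    if not p.market_sizing_sourced:
        soft.append("vague or unsubstantiated market sizing")
    if not p.mentions_competition:
        soft.append("no mention of competition")
    if not p.reason_for_sale:
        soft.append("reason for sale not stated")

    return ScreeningResult(disqualified=bool(hard), hard_flags=hard, soft_flags=soft)

result = screen(Proposal("Acme GmbH", "APAC", 12.0, True, False, "founder retiring"))
print(result.disqualified, result.hard_flags, result.soft_flags)
```

Hard flags lead to a rejection with the reasons attached, while soft flags only annotate the proposal for the manager. That keeps the split where the AI flags and the person decides.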

Solution Mockup

Why This Worked

In this case, the AI solution wouldn’t judge. It would flag. The investment manager would still be the one to decide – but now they’d read 35 surviving proposals per month instead of 100. The other 65 would be filtered out with clear reasons attached. And this would actually sharpen their pattern recognition, because their attention would no longer be spent on low-value proposals whose disqualifier is buried in a footnote on page 47.

The Value on Paper

I promised $100,000 in the title. So here’s where that number comes from:

| Proposals per month | ~100 |
|---|---|
| Avg. time to screen per proposal | ~40 minutes |
| Total screening hours per year | ~800 |
| Blended hourly rate (investment managers) | ~$125 |
| Theoretical annual value | $100,000 |
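The table's arithmetic can be reproduced in a few lines of Python (variable names are illustrative). The sketch also cross-checks the ~800 hours against the team's own estimate of 5 managers × 3 hours per week, assuming 52 working weeks per year.

```python
# Inputs taken from the table
proposals_per_month = 100
minutes_per_proposal = 40
hourly_rate_usd = 125

# 100 proposals x 12 months x 40 min = 48,000 min = 800 h
hours_per_year = proposals_per_month * 12 * minutes_per_proposal / 60
theoretical_value_usd = hours_per_year * hourly_rate_usd

# Cross-check: 5 managers x 3 h/week, assuming 52 weeks/year
team_estimate_hours = 5 * 3 * 52

print(f"{hours_per_year:.0f} h/year -> ${theoretical_value_usd:,.0f}/year")
print(f"team estimate: {team_estimate_hours} h/year")
```

Both routes land at roughly 800 hours per year (800 from the per-proposal estimate, 780 from the weekly one), which is why ~$100K works as the ceiling.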

But here's the problem: we can't guarantee those 800 hours turn into $100K of extra output. Managers won't bill those hours elsewhere. The time dissolves into other work.

The Business Case

Using the 10K Threshold approach, we checked whether the "Pitch Filter" would be worth more than $10K per year – which would justify exploring an Engineered AI solution.

Through that discussion, we landed on a conservative estimate of $10,000 per quarter (~$40,000 per year). The reasoning: if the filter helped surface just one more lucrative investment opportunity per year without any extra time spent, the financial gain would easily justify it.

So $100,000 was our ceiling and $40,000 the economic floor.

The Cost Cap

When your conservative value estimate is $40K+ per year, the running costs of the solution must stay well below that. We set a cap of ~$5,000/year including maintenance fees – leaving $35K of net value per year.

This would give us $35K of development budget if we wanted this solution to be profitable within the first year.

I wanted payback within 6 months, so the actual development budget became $17.5K.
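The budget logic above boils down to one formula: net annual value, scaled to the desired payback period. A minimal sketch, assuming value and running costs accrue evenly across the year (`development_budget` is a hypothetical helper name):

```python
def development_budget(annual_value: float,
                       annual_running_cost: float,
                       payback_months: float) -> float:
    """Max one-off build cost that is recovered within payback_months,
    assuming value and running costs accrue evenly over the year."""
    net_per_year = annual_value - annual_running_cost
    return net_per_year * payback_months / 12

print(development_budget(40_000, 5_000, 12))  # 35000.0
print(development_budget(40_000, 5_000, 6))   # 17500.0
```

Shortening the payback target is the main lever here: halving it from 12 to 6 months halves the budget from $35K to $17.5K.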

Napkin Math

Here's the rough economics of the solution:

| Minimal impact | $40,000 per year |
|---|---|
| 3 months development (build red flag checklist, PDF processing pipeline, integration with deal flow) | $15,000 |
| Buffer | $2,500 |
| Monthly API + managed solution | ~$400/month |
| Annual running cost | ~$5,000 |
| Year 1 total | ~$21,000 |
| Year 2+ annual cost | ~$5,000 |
| Payback | ~6 months after go-live |
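Here is one way to reconcile the napkin numbers, as a hedged sketch. It assumes running costs only start at go-live after the 3-month build (so Year 1 carries ~9 months of them) and that the $40K minimal impact accrues evenly per month once the filter is live. Under those assumptions the figures line up with the table: about $21K in Year 1 and break-even roughly 6 months after go-live.

```python
# Figures from the napkin table
dev_cost = 15_000
buffer = 2_500
monthly_running = 400
annual_value = 40_000   # minimal impact
dev_months = 3

upfront = dev_cost + buffer                 # 17,500 implementation budget
annual_running = monthly_running * 12       # 4,800, i.e. "~$5,000"

# Assumption: running costs start at go-live, so Year 1 pays 9 months of them
year1_total = upfront + monthly_running * (12 - dev_months)   # 21,100

# Assumption: value accrues evenly once the filter is live
net_monthly_value = annual_value / 12 - monthly_running       # ~2,933
payback_months = upfront / net_monthly_value                  # ~5.97

print(f"Year 1 total: ${year1_total:,}")
print(f"Payback: {payback_months:.1f} months after go-live")
```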

What Must Be True

Besides tech and financials, a few other conditions must be true for this case to work:

Volume must justify it. At 100 proposals/month, the math works out. If the business expects fewer proposals in the future, this case becomes difficult to justify. If you only get 10 proposals per month, just read them.

The red flag checklist must exist. If the firm can't articulate what a bad proposal looks like, the AI can't screen for it. The checklist comes from deal experience, not from AI.

The team must trust the filter. If managers re-read every filtered proposal "just in case," the savings disappear. The hard criteria (like the region filter) build trust fast because they're binary. The soft flags take longer to earn confidence.

The Prototype

We took 50 recent proposals the firm had already assessed – 25 that went forward and 25 that were rejected – and ran them through the AI manually with the red flag checklist. Did the AI's filtering match the firm's actual decisions?

At 90%+ agreement, we’d build. At 70-90%, we’d refine the checklist. Below 70%, we’d refine the checklist, the prompts and the document processing. The problem is usually the criteria, not the AI.
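The prototype check is simple enough to express directly. Below is a minimal sketch, assuming each historical proposal is reduced to two booleans: whether the AI let it pass and whether the firm actually took it forward. The function names and the example split (45 matches out of 50) are hypothetical; the 90% and 70% cut-offs come from the text.

```python
def agreement_rate(ai_pass: list[bool], went_forward: list[bool]) -> float:
    """Share of proposals where the AI's pass/reject matches the firm's decision."""
    if not ai_pass or len(ai_pass) != len(went_forward):
        raise ValueError("need two non-empty lists of equal length")
    matches = sum(a == f for a, f in zip(ai_pass, went_forward))
    return matches / len(ai_pass)

def missed_deals(ai_pass: list[bool], went_forward: list[bool]) -> int:
    """Proposals the firm pursued but the AI would have filtered out."""
    return sum(f and not a for a, f in zip(ai_pass, went_forward))

def next_step(rate: float) -> str:
    if rate >= 0.9:
        return "build"
    if rate >= 0.7:
        return "refine checklist"
    return "refine checklist, prompts and document processing"

# Hypothetical backtest: 25 forward, 25 rejected
went_forward = [True] * 25 + [False] * 25
ai_pass = [True] * 23 + [False] * 2 + [True] * 3 + [False] * 22

rate = agreement_rate(ai_pass, went_forward)
print(rate, missed_deals(ai_pass, went_forward), next_step(rate))
```

Agreement alone weighs both error types equally. A deal the firm pursued but the AI filtered out is usually the more expensive mistake, so it may be worth tracking `missed_deals` separately before deciding to build.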

The Verdict

To summarize, here’s the profitable AI case for this AI Deal Screener:

| Theoretical value | $100,000/year |
|---|---|
| Minimal impact | $40,000/year |
| Running cost | $5,000/year |
| Implementation budget | $17,500 |
| Payback | ~6 months |
| Decision | Build the prototype |

I hope you enjoyed this example.

If you found it useful, leave a note or reply to this email and I’ll make sure to share more examples like this in the future.

See you next Saturday!

Tobias

P.S. I wrote a book on how to find and evaluate AI opportunities like this one. It’s called The Profitable AI Advantage.