As the US celebrates Independence Day, I can't help but think about another kind of independence: the freedom to control your own AI destiny.

While big tech is busy building walled gardens around their AI models, the world of open source AI is booming. It's a movement that promises to free businesses from vendor lock-in, cut costs, and tailor AI to their unique needs.

Want to discover how open source AI could be your ticket to AI independence?

Then let's dive in!

Hot off the press! The first batch of my new book, co-authored with Willi Weber, is about to ship. The book is currently on sale at Amazon, and only 15 copies are left in stock. Be quick and get yours now!

The Open Source AI Revolution

Open Source Large Language Models (LLMs) are an essential part of the open source AI stack. To understand what open source LLMs are all about, let's start with some basics.

What is an open source LLM anyway?

When we talk about open source LLMs, we're usually referring to models with open weights. This means the actual parameters of the model - the secret sauce that makes it tick - are freely available for anyone to inspect, modify, or build upon. It's like having a blueprint of the AI's brain.

But open weights don't mean open data.

In fact, most open source models are quite opaque about the data used to build them.

Why does this matter?

Well, the training data can significantly influence a model's behavior, biases, and capabilities. So while you have the freedom to tinker with the model itself, you might not have full insight into what shaped its "knowledge."

That said, the open source LLM movement is still a game-changer.

Let’s find out why!

Why Open Source LLMs Matter for Businesses

There are three key reasons why open source LLMs should matter to you and your business.

Benefit 1: Independence

It's no coincidence that independence is the theme of this newsletter. With open source LLMs, you're not tied to a single vendor. No more worrying about sudden API changes, price hikes, or service discontinuations. You're in the driver's seat - with all the responsibilities that come with it.

That's why I typically recommend open source LLMs only to organizations that have already gained some initial experience and identified a proven LLM use case (see my article on AI Make or Buy). Otherwise, you run the risk of tinkering too much with technology and losing focus on the business use case.

Benefit 2: Cost

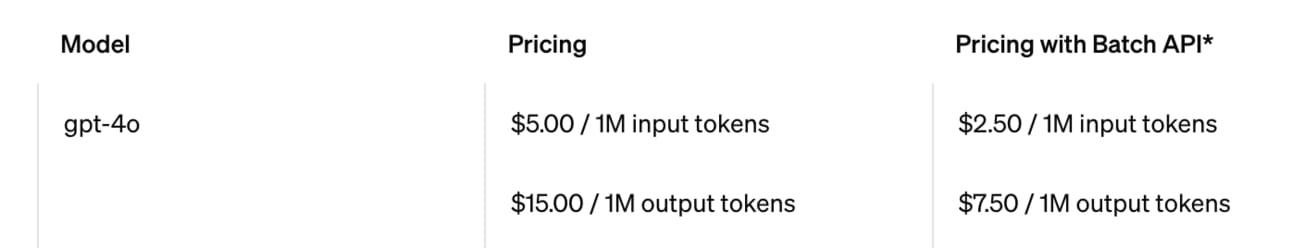

While modern off-the-shelf LLMs are cheap to start with (GPT-4o pricing starts at $2.50 per 1M input tokens), the costs add up quickly as your use case scales.
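To get a feel for how quickly this adds up, here is a rough back-of-the-envelope sketch. Only the $2.50 per 1M input tokens comes from the text above; the request volumes and prompt sizes are hypothetical assumptions, and output tokens (which are billed separately) are ignored to keep things simple.

```python
# Back-of-the-envelope API cost estimate. Only the $2.50 / 1M input-token
# price comes from the text; every volume figure is a hypothetical assumption.

INPUT_PRICE_PER_MILLION_USD = 2.50  # GPT-4o input price quoted above


def monthly_input_cost(requests_per_day: int, tokens_per_request: int,
                       days: int = 30) -> float:
    """Return the monthly cost in USD for input tokens only."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION_USD


# Hypothetical pilot: 200 requests per day, 2,000 input tokens each.
pilot_cost = monthly_input_cost(200, 2_000)

# Hypothetical rollout: 100,000 requests per day, same prompt size.
rollout_cost = monthly_input_cost(100_000, 2_000)

print(f"Pilot:   ${pilot_cost:,.2f} per month")
print(f"Rollout: ${rollout_cost:,.2f} per month")
```

Under these assumptions the pilot costs pocket change, while the rollout lands in five-figure territory per month. Plug in your own numbers to see where you stand.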

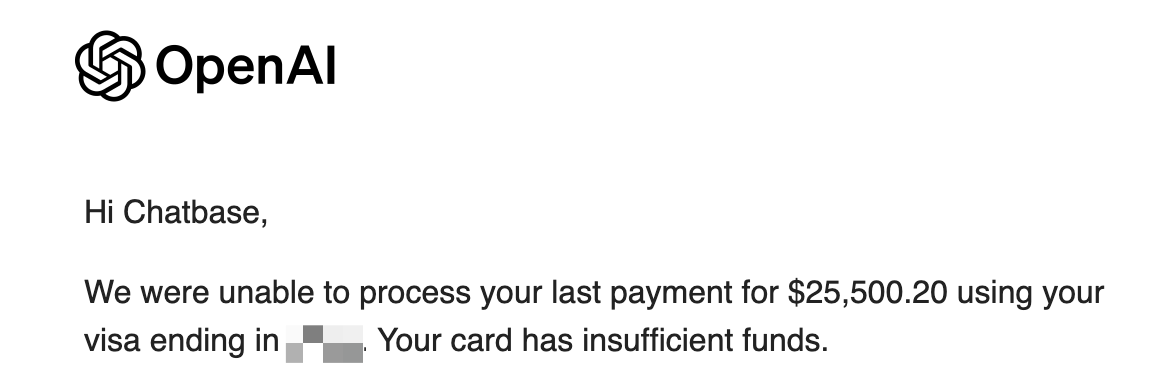

And this is what it looks like when $25,000 could not be charged to your credit card because of a payment issue:

If you hit this scale, running open source models can be incredibly cost-effective.

There are three options:

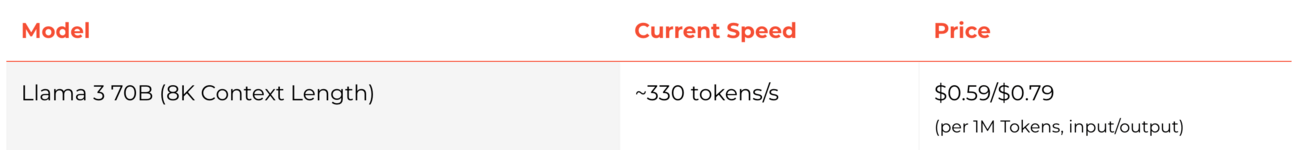

Cloud-hosted open source models, like Llama-3-70b on Groq, can be up to 10x cheaper than GPT-4, while also offering faster performance.

Self-hosting open source LLMs eliminates the per-token cost, leaving only the hardware fees. In most cases, you won't buy the necessary GPUs, which start at around $4,000 each (if you can get them), but instead rent the infrastructure on a platform like AWS.
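When does renting infrastructure beat paying per token? A minimal break-even sketch, assuming a flat hourly rental rate, could look like this. The hourly rate is a hypothetical placeholder, not a quote from AWS or any other provider, and the calculation ignores engineering time and whether a single instance can actually serve that volume.

```python
# Break-even sketch: per-token API pricing vs. a rented GPU server.
# The rental rate is a hypothetical placeholder, not a real vendor quote.

HOURS_PER_MONTH = 24 * 30


def monthly_rent(rent_per_hour_usd: float) -> float:
    """Fixed monthly cost of one instance that runs around the clock."""
    return rent_per_hour_usd * HOURS_PER_MONTH


def break_even_tokens(rent_per_hour_usd: float,
                      api_price_per_million_usd: float) -> float:
    """Monthly token volume at which the API bill equals the rental bill."""
    return monthly_rent(rent_per_hour_usd) / api_price_per_million_usd * 1_000_000


# Assumed: $4.00 per hour for the rented instance, $2.50 per 1M tokens via API.
rent = monthly_rent(4.00)
tokens = break_even_tokens(4.00, 2.50)

print(f"Monthly rent: ${rent:,.2f}")
print(f"Break-even volume: {tokens / 1_000_000:,.0f}M tokens per month")
```

Below the break-even volume, the per-token API is the cheaper option; above it, the fixed rental cost starts to pay off.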

Lastly, you can run open source models offline on consumer devices. This works well with modern computers such as Apple Silicon MacBooks and software like LM Studio. This is a game-changer for scenarios where data privacy is paramount or network connectivity is unreliable.

Benefit 3: Customization

Want an AI that speaks your industry's jargon? Or one that follows your brand voice? With open source LLMs, that's all within reach.

While it may be tempting, don't start fine-tuning open source LLMs right away. It can get very complicated very quickly. Start with few-shot learning (providing relevant examples in the prompt) and other prompting techniques. And if you choose to fine-tune, be sure to allocate plenty of time and some budget for expert help here and there.
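Providing examples in the prompt needs no training at all. Here is a minimal sketch that assembles such a prompt in the role/content chat format many chat-style LLM APIs accept. The function name, the instruction, and the example texts are all hypothetical; adapt the structure to whatever your model or serving platform expects.

```python
# Minimal sketch: teach a model your brand voice by placing a handful of
# examples in the prompt instead of fine-tuning. All texts are made up.
from typing import Dict, List, Tuple


def build_prompt_with_examples(instruction: str,
                               examples: List[Tuple[str, str]],
                               query: str) -> List[Dict[str, str]]:
    """Return a chat message list: instruction, example pairs, then the query."""
    messages = [{"role": "system", "content": instruction}]
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": query})
    return messages


examples = [
    ("Rewrite: Our product is good.",
     "Our product gets the job done. No fuss."),
    ("Rewrite: We have fast delivery.",
     "Ordered today. On your desk tomorrow."),
]

messages = build_prompt_with_examples(
    "Rewrite the text in our brand voice: short, confident, friendly.",
    examples,
    "Rewrite: Our support team is helpful.",
)

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The resulting list can be passed to any chat endpoint that accepts this message format. If a handful of examples already gets you the output you need, you may not need to fine-tune at all.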

Bottom line: It's not just about having an AI - it's about having an AI that really works for you. Open source LLMs offer a level of freedom, flexibility, and control that's hard to match with proprietary solutions. Assuming you've found the right use case.

So which open source LLMs are currently the best choice?

Top 5 Open Source LLMs in 2024

The open source LLM market is booming. Here's a quick overview of the current frontrunners – in no particular order:

Llama 3 (Meta)

With over $100 billion spent by Meta on its AI initiative, Llama-3 is probably the most expensive open source model ever developed. And it is a powerhouse! The available 8B and 70B parameter versions show comparable performance to GPT-4-Turbo, and the 400B version hasn't even been released yet. Llama-3 is a great all-round model, although it is quite resource-intensive and has some license restrictions: companies with more than 700 million monthly active users need a separate license from Meta.

8B, 70B – What are these numbers?

The parameter count of an LLM is a rough measure of the model's complexity and potential capability. For example, 8B means the model has 8 billion adjustable values (weights) that it uses to process inputs and generate outputs. Think of it as the model's knowledge and processing units combined.
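The parameter count also tells you roughly how much memory the model needs. A common rule of thumb is parameters times bytes per parameter. The sketch below covers the weights only, so treat the results as a lower bound: real deployments need extra room for the context window and runtime overhead. The precision labels are just illustrative names.

```python
# Rule-of-thumb memory footprint of model weights:
# number of parameters x bytes per parameter. Weights only; the context
# cache and runtime overhead come on top of this.

BYTES_PER_PARAMETER = {
    "16-bit": 2.0,  # half precision, a common default for inference
    "8-bit": 1.0,   # quantized to 8 bits
    "4-bit": 0.5,   # quantized to 4 bits
}


def weight_memory_gb(billions_of_parameters: float, precision: str) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = billions_of_parameters * 1e9 * BYTES_PER_PARAMETER[precision]
    return total_bytes / 1e9


for size in (8, 70):
    for precision in BYTES_PER_PARAMETER:
        print(f"{size}B at {precision}: ~{weight_memory_gb(size, precision):.0f} GB")
```

This is why an 8B model fits on a well-equipped laptop, while a 70B model at 16-bit precision needs server-grade hardware.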

Phi 3 (Microsoft)

Phi is a so-called Small Language Model (SLM) that comes with "only" 3.8B parameters. It runs on cheap hardware, beats significantly larger models at tasks like logic, reasoning, and natural language understanding, and is pretty good at coding, too, thanks to highly curated training data. It also comes with one of the largest context windows among open source LLMs - up to 128K tokens.

Granite (IBM)

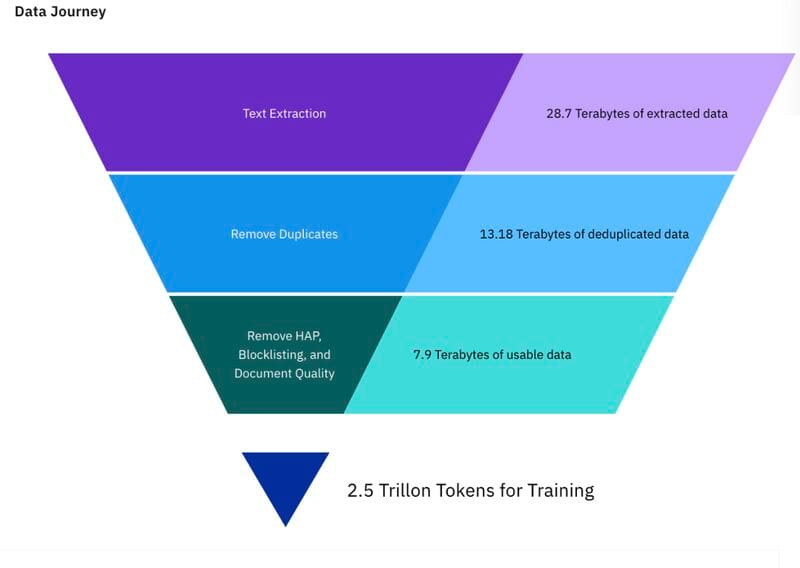

IBM's Granite family of models also puts a lot of emphasis on training data curation. On top of that, IBM has been very clear about the training data used. See this LinkedIn post by Armand Ruiz for a great breakdown. This makes the models a great fit for enterprise needs that require transparency, security, and compliance. There are different Granite models for different domains, and they all come under a fully permissive Apache 2.0 license. Give them a try!

Mixtral (Mistral)

When French AI startup Mistral announced its first open source LLM of the same name, the AI world was impressed. When they unveiled Mixtral, the AI world was blown away. Mixtral uses a Mixture-of-Experts (MoE) architecture to achieve strong performance in everyday tasks and fluency in English, French, Italian, German, and Spanish. It comes with a decent 64k context window and an Apache 2.0 license. Made in Europe.

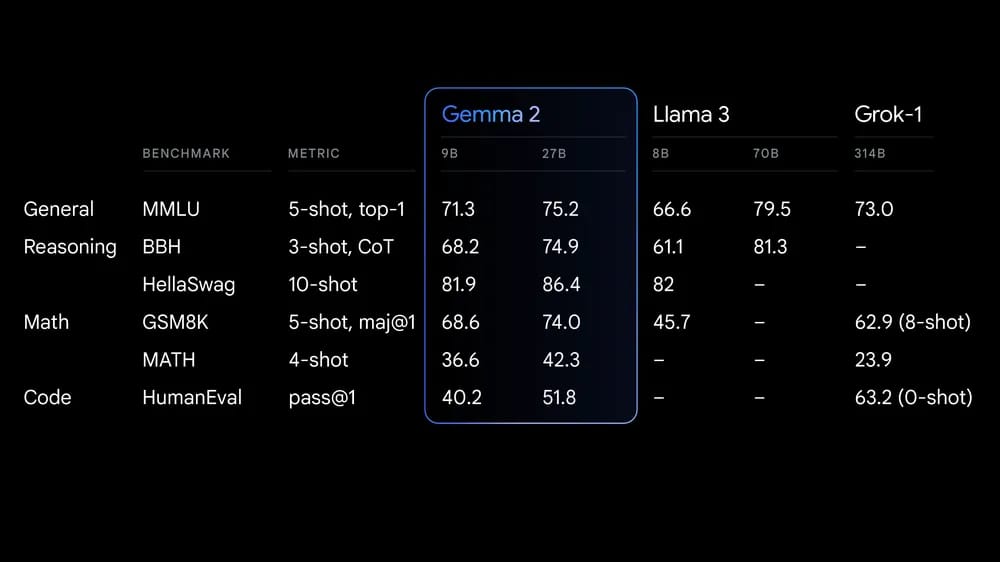

Gemma 2 (Google)

Backed by Google's AI expertise, Gemma-2 comes in two versions - 9B and 27B. The 27B version often achieves performance similar to Llama-3, while being much smaller and therefore less hardware-hungry. Compared to Llama, the community is still relatively small, but growing.

Here is a little table comparing the most important aspects of the top 5 models:

Remember, the "best" model isn't always the most powerful one that grabs the headlines, but the one that strikes the right balance for your specific needs.

Tips on Getting Started with Open Source LLMs

Here are three essential tips to help you get started on your open source AI journey.

Tip 1) Don't reinvent the wheel. Platforms like Titan ML offer a convenient way to deploy and manage open source LLMs locally.

Tip 2) Don't obsess over public benchmarks. What matters is how the models perform on your data!

Tip 3) Try them out: Platforms like Poe.com (for Llama, Gemma, and Mixtral) or Hugging Face (virtually all available open source models) let you quickly get hands-on.
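For Tip 2, a benchmark on your own data doesn't have to be fancy. Here is a minimal sketch, assuming each candidate model can be wrapped in a plain Python function that takes a prompt and returns text. The two stub models and the test cases are hypothetical stand-ins; in practice you would call your real models and use real examples from your business. Exact-match scoring is just one simple choice and only suits tasks with short, unambiguous answers.

```python
# Minimal sketch of a do-it-yourself benchmark: run the same test cases
# through every candidate model and compare the scores.
from typing import Callable, Dict, List, Tuple


def exact_match_accuracy(model: Callable[[str], str],
                         cases: List[Tuple[str, str]]) -> float:
    """Share of cases where the answer matches the expected text exactly
    (ignoring case and surrounding whitespace)."""
    if not cases:
        raise ValueError("need at least one test case")
    hits = sum(
        1 for prompt, expected in cases
        if model(prompt).strip().lower() == expected.strip().lower()
    )
    return hits / len(cases)


# Hypothetical test cases taken from "your data".
cases = [
    ("Classify the ticket: 'My invoice is wrong.'", "billing"),
    ("Classify the ticket: 'The app crashes on start.'", "technical"),
    ("Classify the ticket: 'How do I cancel?'", "account"),
    ("Classify the ticket: 'I was charged twice.'", "billing"),
]


# Stub models standing in for real LLM calls.
def model_a(prompt: str) -> str:
    return "billing"  # always answers the same thing


def model_b(prompt: str) -> str:
    lowered = prompt.lower()
    if "invoice" in lowered or "charged" in lowered:
        return "Billing"
    if "crash" in lowered:
        return "technical"
    return "account"


candidates: Dict[str, Callable[[str], str]] = {"model_a": model_a, "model_b": model_b}
scores = {name: exact_match_accuracy(fn, cases) for name, fn in candidates.items()}

for name, score in scores.items():
    print(f"{name}: {score:.0%}")
```

Even a few dozen well-chosen cases from your own workflows can tell you more than a leaderboard position.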

Conclusions

Once you've found a solid use case for LLMs with an off-the-shelf solution, consider open source LLMs for further rollout and deployment across your organization.

The ecosystem is thriving, so keep an eye on the emerging landscape. But don't get too caught up in public benchmarks. What really matters is how well the LLM works within your organization, given your specific needs. The model that's overlooked by others may be the perfect fit for you.

If you need help figuring this out, don't hesitate to reach out.

I'll be happy to help you navigate this complex landscape.

Until then, happy experimenting!

See you next Friday,

Tobias

PS: If you found this newsletter useful, please leave a testimonial! It means a lot to me!