Read time: 6 minutes

Hey there,

Today you'll learn how Large Language Models (LLM) like chatGPT can help you do data analysis faster - and what their current limitations are.

As I mentioned in one of my previous posts, LLMs have the potential to revolutionize the way we interact with data and do analytics.

Unfortunately, many people still underestimate their full potential or use them in the wrong way.

Let's make sure you get it right after reading today's edition!

Want to improve your data analytics skills? Then join my upcoming Business Analytics Bootcamp - LIVE with O'Reilly!

Get the insights you want - faster

Many people struggle with the concept of LLMs in the context of analytics and dashboards for the following reasons:

They don't know where to start.

They're afraid to send their data to a remote AI service.

They think the technology is still too young to be used in production.

While all of these are valid concerns, I'll help you overcome points 1 and 2 today.

Today you'll learn how to use chatGPT to analyze data without even sharing data with the AI service!

Let's get started step by step:

Step 1: Copy your database schema

Here's the most important concept to understand:

We don't want to share our actual data with the AI service, but only how our data is stored and organized.

This information is stored in the so-called (database) schema.

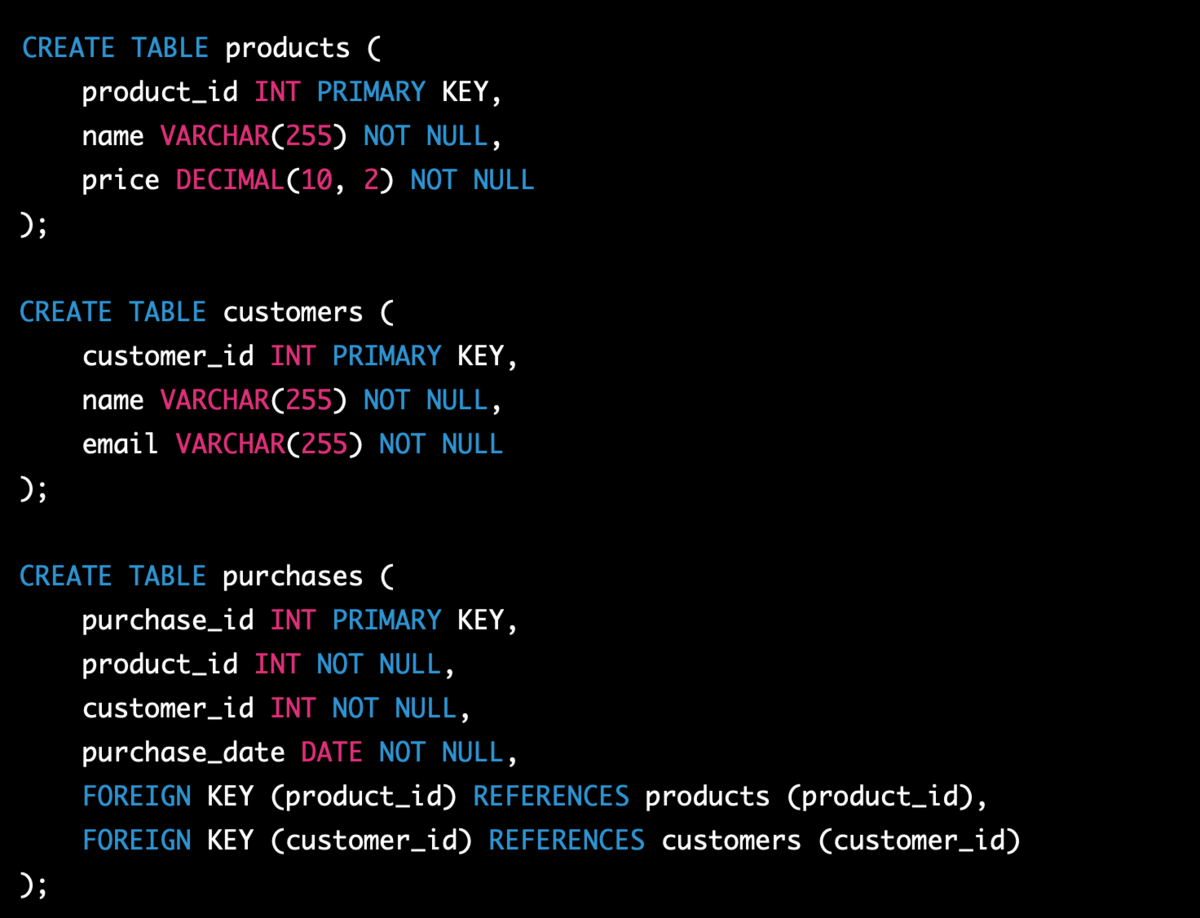

For example, a simple retail database schema could look like this:

You can find these schema definitions in any relational database management system (RDMS). The schema says nothing about the actual data stored, but only about how this data is organized and how the tables relate to each other.

For example, our toy schema includes three tables: products, customers, and purchases.

According to our schema, these tables are linked through the following primary and foreign keys: customer_id, purchase_id and product_id.

(If we actually wanted to enter data into our database, we'd need some INSERT statements like below. But we don't want to share that with the AI service)

Feel free to use a tool like PostgreSQL online and create this mini database yourself to reproduce the following examples. Just click on the image below, copy the code and paste it into PostgreSQL online. In less than 5 seconds you'll have a demo database set up with some dummy products:

Step 2: Paste the schema into the chatGPT prompt

Now let's share this database schema with chatGPT.

We can use the following prompt to give chatGPT the context of our schema:

Notice the "verify it's correct" part in the prompt.

This allows us to check the output and make sure that the schema was entered correctly and that we haven't missed anything critical here.

Step 3: Ask a question

Thanks to chatGPT's context awareness, we can now ask any question about this database using the schema we specified.

Note that it doesn't return the actual data (because it doesn't know it and we didn't tell it!), but only the corresponding SQL query.

This is powerful stuff.

If you know the right SQL query, you can get any information you want from the database (if you have access to it).

Let's start with something simple.

We ask:

Which customers bought the product "Banana"? Return the SQL query.

Here is the answer from chatGPT:

Is this query correct?

We never know for sure!

That's one of the (current) drawbacks of LLMs like chatGPT.

ChatGPT does not give an indication of whether its answer is actually correct of if it just made it up (aka it hallucinated).

So let's quickly verify the code by running it in our SQL sandbox:

And voilà! This is correct.

When you double check our original INSERT statement above (which chatGPT never saw), we can confirm that it was indeed Jane Smith and John Doe who ordered "Bananas".

Let's take this one step further.

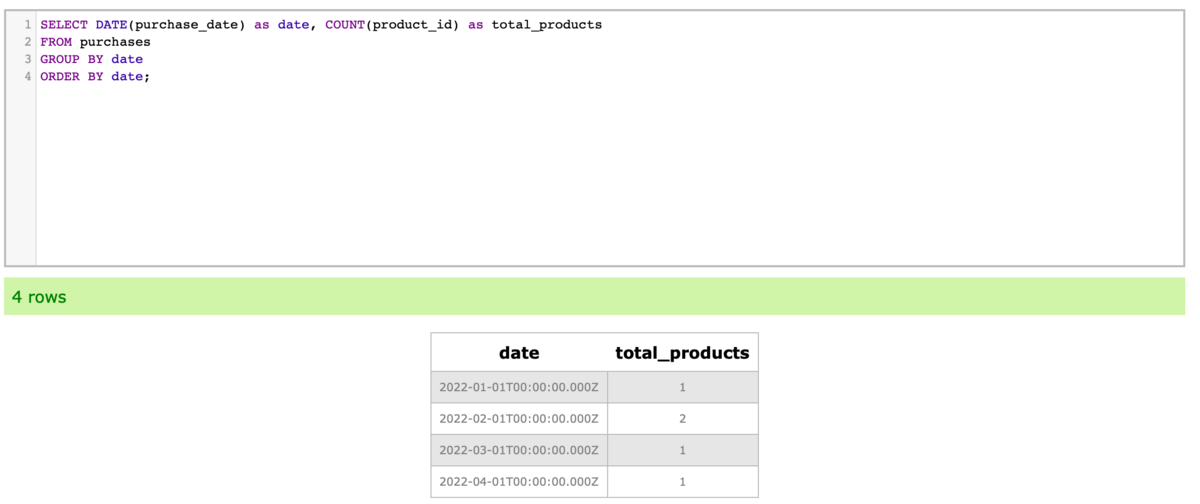

Let's try to fetch the total number of products sold by day.

We can simply ask chatGPT:

Note the following things here:

First, in our database schema, the terms "Sold" or "Sale" do not appear. There's just a "purchases" table.

Second, our schema does not have a field "Day". However, according to the schema there's a field called purchase_date with DATE type.

ChatGPT will try to map database fields to your query, no matter if they exist or not!

That's both a curse and a blessing.

If you ask for “Sales” it will make the assumption that the purchases table contains the information you want. (In this case that’s right!)

If you asko for "Day" it will go ahead and look for the DATE field which seems most likely for that.

However, if we had multiple DATE fields within the purchases table or ambigious namings (such as P_DATE and O_DATE) then chatGPT could never figure it out (unless you do fine-tuning which currently is not possible yet).

But to be fair, most human analysts wouldn't be able to figure it out either.

That's where either good naming conventions or (even better) consistent meta descriptions for your columns can become really practical.

(Now we finally got the proof that metadata actually does matter.)

To finish this off, let's quickly verify if the query works:

And indeed, the query works as expected and returns the correct information.

Step 4: Try more complex questions

"OK, that's all well and good," I hear you say, "but every school kid could write simple queries like this".

Good point!

So let's take this to another level and approach the nightmare of every data analyst:

Window functions!

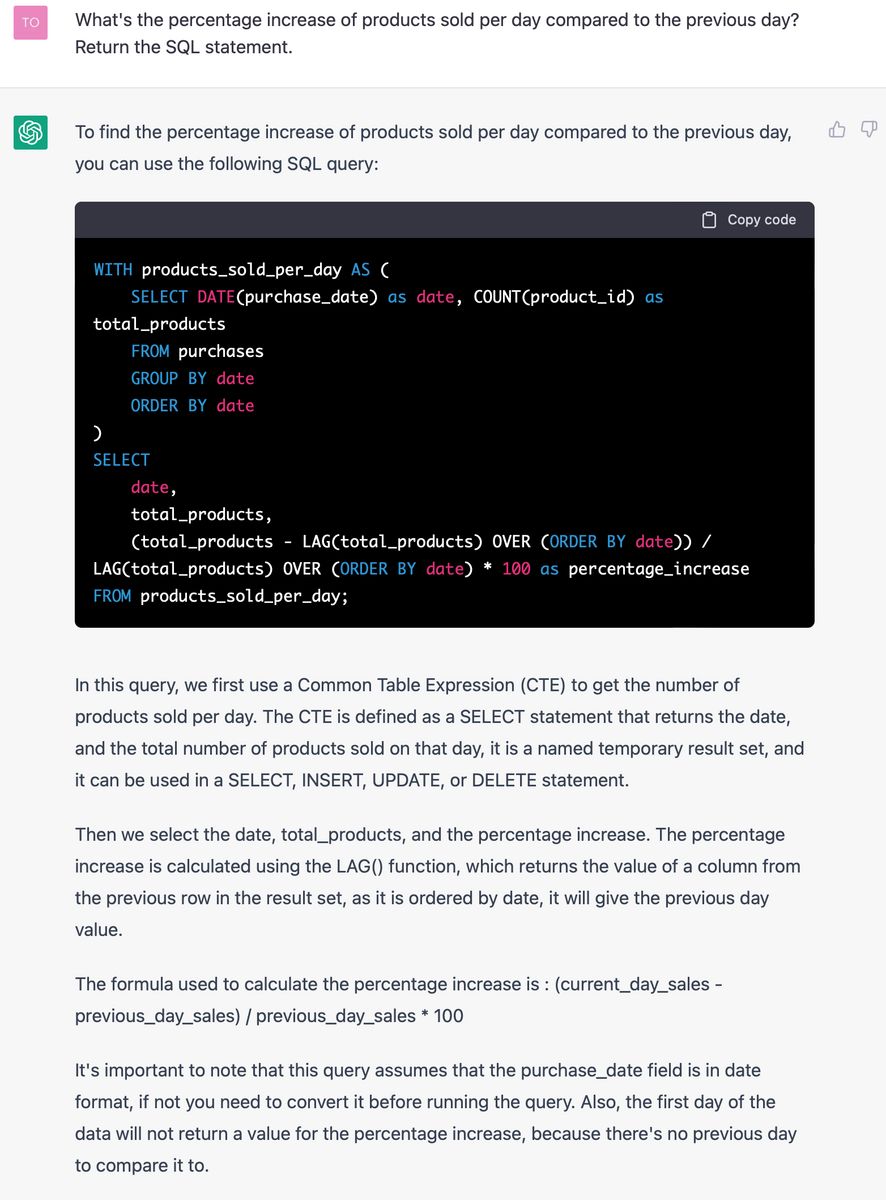

So take this, chatGPT:

Before we worry too much about the output and its explanation, let's be a copy-paste monkey and just throw the query into our SQL sandbox:

Wow - the SQL query was correct!

From the results we can confirm that:

The number of total products per day is correct (we knew these before)

The percentage increase is calculated correctly (easy to fact check)

The first date comparison has no start value (which is correct)

No matter how experienced a data analyst is, typing out a query like this is much slower than having it generated by chatGPT and quickly double-checking the results.

As you see, it's not just about simple counts and filters - chatGPT (and any other capable LLM for that sake) can help us get to the insights we want faster.

Time to wrap up!

Conclusions

As we have seen, LLMs like chatGPT can help us translate human language into SQL code, making it easier for us to gain insights from data.

However, there are still some caveats:

We never know if the query is actually correct.

Complex database schemas with ambiguous terms can be difficult or even impossible for the LLM to understand.

chatGPT has a limited short-term memory (3,000 words or 4,000 tokens to be exact). This means that if we make many queries, it'll eventually "forget" our database schema and we'll have to re-enter it.

So what does that mean for us right now and in the future?

Right now, when someone has at least basic SQL knowledge, they can use chatGPT to greatly expand their data analysis capabilities. The key is: You must be able to interpret the SQL output and fact-check the results for correctness. Only if this is faster than writing from scratch, it'll be useful for you!

In the future, we could increase the performance using a fine-tuning process. This would involve pairing questions from business users with handwritten SQL statements from human analysts. Assuming your data model doesn't change frequently, this should significantly increase the reliability of the model.

And who knows, at some point you could maybe even add a Q&A widget to your BI frontend.

But there's still a long way to go until then.

However, it might be faster than you think!

I hope you enjoyed today's edition!

Any question left?

Hit reply — I'll answer every email!

See you next Friday,

Tobias

Resources

Want to learn more? Here are 3 ways I could help:

Read my book: If you want to further improve your AI/ML skills and apply them to real-world use cases, check out my book AI-Powered Business Intelligence (O'Reilly).

Book a meeting: If you want to pick my brain, book a coffee chat with me so we can discuss more details.