Hey there,

In today's newsletter we'll explore how your business can leverage AI-powered chatbots to automate a good part of your first-level customer support.

“Chatbots!?” I hear you say. Admittedly, they don't have the best reputation. The first wave of customer support chatbots, which appeared about 10 years ago, was expensive to maintain and… well… sucked.

But thanks to modern Large Language Models (LLMs) and new architectural paradigms, things have changed, and customer support chatbots have become attractive even for smaller, non-technical businesses.

Want to find out how? Then let’s dive in!

"Chatbots Cost Too Much And Don't Work"

Early generations of chatbots were clunky and unintelligent, frustrating users more than helping them. Plus, they were expensive.

But today, things are different. What happened?

Large language models like GPT-3 and GPT-4 have changed the game. These models have a strong grasp of context, can hold natural conversations, and respond dynamically to users' questions.

With the right implementation, chatbots today are so good that users often don't even realize they're chatting with an AI!

(Which is why we should always disclose when there’s an AI at work.)

Besides working really well, the technology has become so commoditized that you can quickly get a first version (v1) of your chatbot up and running with access to relevant data sources such as your website, PDF manuals, or internal documents: the knowledge needed to answer common support questions.

For example, I recently helped a client migrate from a $70,000/year chatbot solution with mediocre performance to one that performs even better for about $700 per month, or roughly $8,400 per year.
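To put the two price tags side by side, here is the arithmetic as a tiny Python snippet. It uses only the two figures above and ignores any one-off migration or setup costs, which aren't part of the comparison.

```python
# Figures from the example above: the old solution was priced per year,
# the new one per month (both in USD).
OLD_COST_PER_YEAR = 70_000
NEW_COST_PER_MONTH = 700


def annual_savings(old_per_year: float, new_per_month: float) -> tuple[float, float]:
    """Return (absolute savings per year, savings as a fraction of the old cost)."""
    new_per_year = new_per_month * 12
    saved = old_per_year - new_per_year
    return saved, saved / old_per_year


saved, fraction = annual_savings(OLD_COST_PER_YEAR, NEW_COST_PER_MONTH)
print(f"New cost per year: ${NEW_COST_PER_MONTH * 12:,}")  # New cost per year: $8,400
print(f"Savings: ${saved:,} per year ({fraction:.0%})")    # Savings: $61,600 per year (88%)
```

In other words, the new setup costs about 12% of the old one per year.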

Let's walk through a sample architecture that I typically use for this.

Common Challenges with First-Level Support

Some context before we start: even for small businesses, handling first-level support can be a huge pain. Answering these requests takes time and ties up internal resources.

Fielding these repetitive inquiries keeps support staff from more important work and leads to slow response times.

And on top of that, most support queries could have been avoided by self-service support.

That’s where chatbots come in.

Who is this relevant for?

Chatbots excel at basic support queries in both B2C and B2B contexts. However, I've found that they provide particular value in B2B scenarios where:

Information needs are more complex than simple FAQs.

There are fewer customers overall than in large B2C businesses, so the query volume is lower, which keeps the running costs of the chatbot service down.

For such use cases, the ROI on an AI-powered chatbot is very attractive.
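Why does lower volume matter so much? Many hosted LLM APIs bill per token, so running costs grow roughly linearly with the number of conversations. The sketch below illustrates this with purely hypothetical numbers: the query volumes, tokens per query, and price per 1,000 tokens are placeholders, not real quotes, so plug in your provider's current pricing.

```python
def estimate_monthly_llm_cost(
    queries_per_month: int,
    tokens_per_query: int,
    usd_per_1k_tokens: float,
    fixed_usd_per_month: float = 0.0,
) -> float:
    """Rough usage-based cost: token cost plus an optional fixed fee (e.g. hosting).

    All inputs are hypothetical placeholders, not actual provider pricing.
    """
    token_cost = queries_per_month * tokens_per_query / 1000 * usd_per_1k_tokens
    return fixed_usd_per_month + token_cost


# Hypothetical: ~3,000 tokens per query (question + retrieved passages + answer)
# at $0.01 per 1,000 tokens, for a B2B-sized and a B2C-sized query volume.
b2b = estimate_monthly_llm_cost(2_000, 3_000, 0.01)
b2c = estimate_monthly_llm_cost(200_000, 3_000, 0.01)
print(f"B2B-sized volume: ${b2b:,.2f}/month")
print(f"B2C-sized volume: ${b2c:,.2f}/month")
```

With identical pricing, 100 times the query volume means 100 times the token bill, which is why a B2B support bot with modest traffic can be so cheap to run.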

Solution Overview

Let's look at how to solve this technically.

We will use the RAG (Retrieval-Augmented Generation) architecture, which has emerged as a popular way to build chatbots powered by large language models. The diagram below shows how the different components fit together.

At a high level, RAG involves four components:

Interface: This is what the user sees and where user input is collected. In our case, it will be a chat widget embedded on the company website and a WhatsApp channel.

Database: This includes the knowledge sources we want the chatbot to leverage. For first-level support, we can connect it to materials like FAQs, product manuals, troubleshooting guides, and internal documentation.

LLM: The large language model handles the conversations. We could use an LLM like GPT-4, either hosted by OpenAI or in our own instance on Microsoft Azure.

Backend: This layer pulls everything together. It receives user messages, queries the database, and passes the relevant results to the LLM.
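To make the division of labor concrete, here is a minimal Python sketch of the backend, built around two hypothetical interfaces, `Retriever` (the database) and `LLM`. Because the backend only depends on these interfaces, swapping an OpenAI-hosted model for an Azure-hosted one, or one vector database for another, doesn't touch the orchestration logic. `KeywordRetriever` and `EchoLLM` are placeholder stand-ins so the sketch runs offline, not real products.

```python
from dataclasses import dataclass
from typing import Protocol


class Retriever(Protocol):
    """Database component: returns the passages most relevant to a query."""
    def search(self, query: str, top_k: int) -> list[str]: ...


class LLM(Protocol):
    """LLM component: turns a prompt into a reply (e.g. a GPT-4 deployment)."""
    def complete(self, prompt: str) -> str: ...


@dataclass
class ChatBackend:
    """Backend component: glue between interface, database, and LLM."""
    retriever: Retriever
    llm: LLM
    top_k: int = 3

    def handle_message(self, user_message: str) -> str:
        passages = self.retriever.search(user_message, self.top_k)  # Retrieve
        context = "\n\n".join(passages)
        prompt = (                                                   # Augment
            "Answer the customer's question using only the context below.\n"
            f"Context:\n{context}\n\nQuestion: {user_message}"
        )
        return self.llm.complete(prompt)                             # Generate


# Placeholder stand-ins so the sketch runs without any external service.
class KeywordRetriever:
    """Ranks documents by word overlap; a real setup would use vector search."""
    def __init__(self, docs: list[str]):
        self.docs = docs

    def search(self, query: str, top_k: int) -> list[str]:
        words = set(query.lower().split())
        ranked = sorted(self.docs, key=lambda d: -len(words & set(d.lower().split())))
        return ranked[:top_k]


class EchoLLM:
    def complete(self, prompt: str) -> str:
        return f"[LLM would answer based on a {len(prompt)}-character prompt]"


docs = ["Opening hours are 9-17.", "Tickets cost 20 EUR."]  # made-up sample data
backend = ChatBackend(KeywordRetriever(docs), EchoLLM(), top_k=1)
print(backend.handle_message("What are the opening hours?"))
```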

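An architecture like this makes LLM-powered chatbots attractive even for very niche industries, because the domain knowledge comes from your own documents rather than from the model's training data.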

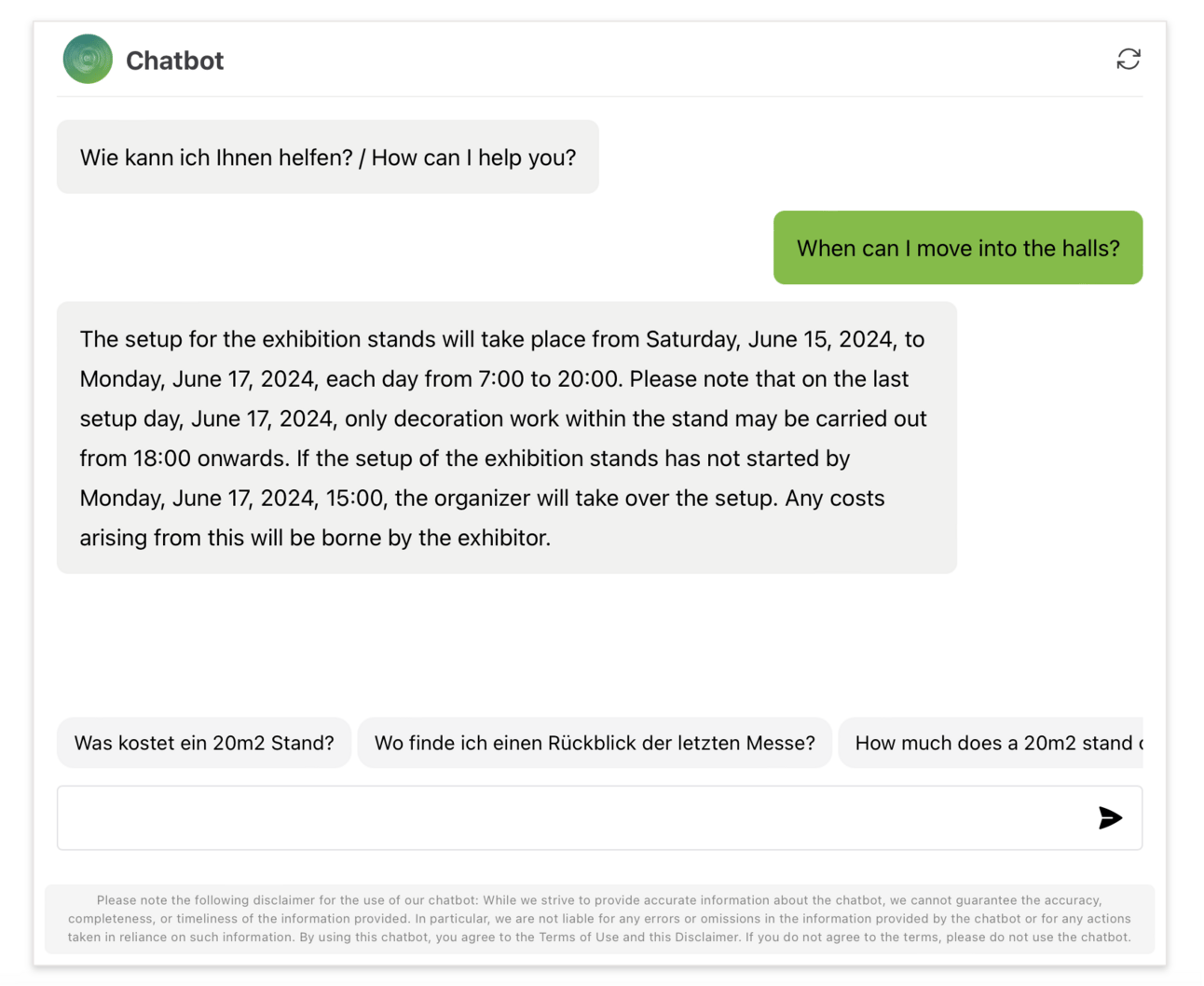

Here’s an example screenshot of a chatbot in action providing first-level support on the website of an exhibition organizer:

A nice side effect is that chatbots like this are multilingual out of the box. For example, even if all of your knowledge documents are only available in German, users can still use the bot in English.

To build a chatbot like this, there are a few options:

You could build a fully custom solution using open-source tools like LangChain. This gives you full control but requires more technical expertise.

You could use a no-code, open-source platform like Flowise. It is still self-hosted but abstracts away some of the complexity.

You could use a hosted no-code service like Stack-AI. It's quick and easy but offers less customization.

You could go with a full SaaS solution like Chatbase. It's the fastest way to get started but offers the least flexibility.

For many use cases, it makes sense to go the SaaS route to test things out before customizing further.

Now let's explore each layer in more detail...

User Layer

The user layer focuses on the interface. For our chatbot, this would likely be a simple chat widget that gets embedded directly on the company website.

When users visit the site, they can access the chatbot to ask common support questions and receive automated replies drawing from the available knowledge.

While a web widget may be the starting point, a key advantage of the RAG architecture is that the actual chatbot lives behind an API.

This makes it easy to integrate the chatbot into other channels like WhatsApp, Facebook Messenger, or a custom mobile app.

So even as the front-end expands to new touchpoints, the same chatbot brain can scale across them all.
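Here's a minimal sketch of that idea in Python: a single `answer` function plays the role of the chatbot brain, and each channel gets a thin adapter that only translates its own message format. The function names and payload shapes are hypothetical and heavily simplified; real channels such as WhatsApp define their own webhook formats, which aren't reproduced here.

```python
from typing import Callable


def answer(question: str) -> str:
    """The shared "chatbot brain" behind the API (stubbed here).

    In a real setup this is where retrieval and the LLM call happen.
    """
    return f"Answer to: {question}"


# Each adapter only converts its channel's (hypothetical, simplified) payload
# into a plain question and wraps the reply in that channel's shape.
def handle_web_widget(payload: dict, brain: Callable[[str], str] = answer) -> dict:
    return {"reply": brain(payload["message"])}


def handle_whatsapp(payload: dict, brain: Callable[[str], str] = answer) -> dict:
    return {"to": payload["from"], "text": brain(payload["text"])}


print(handle_web_widget({"message": "When does the fair open?"}))
print(handle_whatsapp({"from": "user-123", "text": "When does the fair open?"}))
```

Adding a new channel then means writing one more small adapter; the brain itself stays untouched.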

One limitation of the RAG architecture is that the chatbot typically can't execute support tasks like resetting a password. You could either have the chatbot link to a URL where users can do this themselves, or opt for more advanced chatbot software that offers these capabilities.
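The "link to a URL" option can be as simple as checking for a few task keywords before handing the message to the RAG pipeline. A minimal sketch, assuming a hypothetical keyword-to-URL table (the example.com links are placeholders); a production setup might instead ask the LLM to classify the user's intent.

```python
from typing import Optional

# Hypothetical mapping from task keywords to self-service pages.
# The example.com URLs are placeholders for your own pages.
SELF_SERVICE_LINKS = {
    ("reset", "password"): "https://example.com/account/reset-password",
    ("cancel", "subscription"): "https://example.com/account/subscription",
}


def route_task(message: str) -> Optional[str]:
    """Return a self-service link if the message asks for a task the bot can't do itself."""
    words = set(message.lower().replace("?", " ").replace(".", " ").split())
    for keywords, url in SELF_SERVICE_LINKS.items():
        if all(keyword in words for keyword in keywords):
            return url
    return None  # no task detected: let the RAG pipeline answer as usual


for msg in ["How do I reset my password?", "What are your opening hours?"]:
    link = route_task(msg)
    print(msg, "->", link or "answer via RAG")
```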

Data Layer

The data layer focuses on ingesting and preparing the knowledge sources that will power our chatbot.

For a customer support use case, we would want it to connect to materials such as:

FAQ databases - Both public-facing and internal ones.

Support documentation - User manuals, troubleshooting guides, how-tos.

Product guides - Technical specifications, feature descriptions.

Legal agreements - Terms of service, SLAs, privacy policies.

The process involves:

Identifying relevant documentation sources.

Extracting plain text from documents.

Dividing documents into logical chunks/passages based on headings and structure.

Converting these chunks into so-called vector embeddings using an embedding model (often from the same provider as the LLM), enabling semantic search instead of pure keyword-based search.

Storing the chunks and embeddings in a database for low-latency retrieval.
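To make these steps more tangible, here is a self-contained Python sketch of chunking, embedding, and storing. Two big assumptions: the extracted text marks headings with Markdown-style `#`, and `toy_embedding` (a hashed bag of words) stands in for a real embedding model. Unlike a real model, it only captures keyword overlap, not meaning, so it shows the shape of the pipeline rather than semantic search itself. The list `vector_store` stands in for a vector database, and the sample document is made up.

```python
import hashlib
import math
import re


def chunk_by_headings(text: str) -> list[dict]:
    """Split plain text into chunks, starting a new chunk at each '#' heading."""
    chunks, title, lines = [], "Introduction", []  # default title is arbitrary
    for line in text.splitlines():
        if line.startswith("#"):
            if any(ln.strip() for ln in lines):
                chunks.append({"title": title, "text": "\n".join(lines).strip()})
            title, lines = line.lstrip("#").strip(), []
        else:
            lines.append(line)
    if any(ln.strip() for ln in lines):
        chunks.append({"title": title, "text": "\n".join(lines).strip()})
    return chunks


def toy_embedding(text: str, dims: int = 64) -> list[float]:
    """Stand-in for a real embedding model: hashed bag of words, L2-normalized."""
    vec = [0.0] * dims
    for word in re.findall(r"\w+", text.lower()):
        vec[int(hashlib.sha256(word.encode()).hexdigest(), 16) % dims] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


# In-memory stand-in for a vector database.
vector_store: list[dict] = []


def ingest(document: str) -> None:
    for chunk in chunk_by_headings(document):
        chunk["embedding"] = toy_embedding(chunk["title"] + " " + chunk["text"])
        vector_store.append(chunk)


ingest("# Opening hours\nThe fair is open 9:00-18:00.\n# Tickets\nDay tickets cost 25 EUR.")
print([c["title"] for c in vector_store])  # ['Opening hours', 'Tickets']
```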

Depending on your use case, this workflow may need to be automated, for example by refreshing the data every 24 hours or by adding new data to the database in real time as it comes in. In my experience, however, manual updates are usually fine because support documents don't change that often.
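If you do automate the refresh (say, as a nightly job), one way to avoid re-embedding everything each time is to store a content hash per document and only process what changed. A minimal sketch; the document ids, the scheduling, and where the hashes are stored are all assumptions.

```python
import hashlib


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_refresh(current_docs: dict[str, str], indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Compare current documents with what was indexed during the last run.

    current_docs:   document id -> extracted text as of now
    indexed_hashes: document id -> content hash saved at the last ingestion
    Returns the documents to (re-)embed and the ones to delete from the index.
    """
    to_embed = [doc_id for doc_id, text in current_docs.items()
                if indexed_hashes.get(doc_id) != content_hash(text)]
    to_delete = [doc_id for doc_id in indexed_hashes if doc_id not in current_docs]
    return {"embed": sorted(to_embed), "delete": sorted(to_delete)}


indexed = {
    "faq.pdf": content_hash("old FAQ"),
    "manual.pdf": content_hash("manual v1"),
    "legacy.pdf": content_hash("retired doc"),
}
current = {"faq.pdf": "new FAQ", "manual.pdf": "manual v1", "terms.pdf": "terms"}
print(plan_refresh(current, indexed))
# {'embed': ['faq.pdf', 'terms.pdf'], 'delete': ['legacy.pdf']}
```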

Analytics Layer

This layer handles conversation processing using our large language model.

When the app backend receives a user input, it will send this input to the LLM.

The LLM first translates it into a search query for the knowledge base.

Relevant passages are retrieved and returned to the LLM, which incorporates the relevant passages into its response generation prompt. This allows the LLM to answer user questions by grounding its responses in the available data.
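The retrieve-and-augment step can be sketched in a few lines of Python. This assumes the question and the chunks have already been embedded (the 3-dimensional vectors below are made up for readability; real embeddings have hundreds or thousands of dimensions), ranks chunks by cosine similarity, and pastes the best matches into the prompt. For simplicity it embeds the user question directly instead of having the LLM rewrite it into a search query first, and the prompt wording is only an example.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec: list[float], chunks: list[dict], top_k: int = 2, min_score: float = 0.0) -> list[dict]:
    """Return the top_k chunks most similar to the query, above a minimum score."""
    scored = sorted(((cosine(query_vec, c["embedding"]), c) for c in chunks),
                    key=lambda pair: pair[0], reverse=True)
    return [c for score, c in scored[:top_k] if score >= min_score]


def build_prompt(question: str, passages: list[dict]) -> str:
    """Augment the prompt with the retrieved passages (grounding the answer)."""
    context = "\n\n".join(f"[{i + 1}] {p['text']}" for i, p in enumerate(passages))
    return ("You are a first-level support assistant. Answer using only the "
            "passages below. If they don't contain the answer, say so and "
            "suggest contacting support. Reply in the language of the question.\n\n"
            f"Passages:\n{context}\n\nQuestion: {question}")


chunks = [  # made-up chunks with made-up embeddings
    {"text": "The fair is open daily from 9:00 to 18:00.", "embedding": [0.9, 0.1, 0.0]},
    {"text": "Day tickets can be bought online.", "embedding": [0.1, 0.9, 0.1]},
    {"text": "Exhibitors receive badges at Gate A.", "embedding": [0.0, 0.2, 0.9]},
]
query_vec = [0.8, 0.2, 0.0]  # pretend embedding of "When does the fair open?"
top = retrieve(query_vec, chunks, top_k=1)
print(build_prompt("When does the fair open?", top))
```

The resulting prompt is what gets sent to the LLM for the final answer.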

Chatbot conversations are logged to improve performance. Over time, common questions and pain points that could not be answered become apparent, showing where the knowledge base needs improvement.
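A simple way to surface those gaps from the logs is to count the questions the bot either declined to answer or could only match weakly. In this sketch the log fields (`top_score`, `answered`), the 0.5 threshold, and the sample entries are all hypothetical, and grouping by exact (lowercased) text is crude; in practice you might cluster similar questions instead.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class LoggedTurn:
    question: str
    top_score: float  # best retrieval similarity for this question (hypothetical field)
    answered: bool    # False if the bot fell back to "I don't know" (hypothetical field)


def knowledge_gaps(log: list[LoggedTurn], min_score: float = 0.5, top_n: int = 5) -> list[tuple[str, int]]:
    """Most frequent questions the bot couldn't ground in the knowledge base."""
    misses = Counter(
        turn.question.strip().lower()
        for turn in log
        if not turn.answered or turn.top_score < min_score
    )
    return misses.most_common(top_n)


log = [  # made-up sample log
    LoggedTurn("Is there parking at the venue?", 0.21, False),
    LoggedTurn("When does the fair open?", 0.93, True),
    LoggedTurn("is there parking at the venue?", 0.19, False),
    LoggedTurn("Can I bring my dog?", 0.35, True),
]
print(knowledge_gaps(log))
# [('is there parking at the venue?', 2), ('can i bring my dog?', 1)]
```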

Result

Chatbots like this not only automate repetitive support queries, but also improve the customer experience through 24/7 availability and fast response times.

One client reported a 30% drop in incoming support emails in the first month after launching the chatbot.

On top of this, the rich usage data gives us insights to improve our services, and the automation frees up staff to focus more strategically on customer needs.

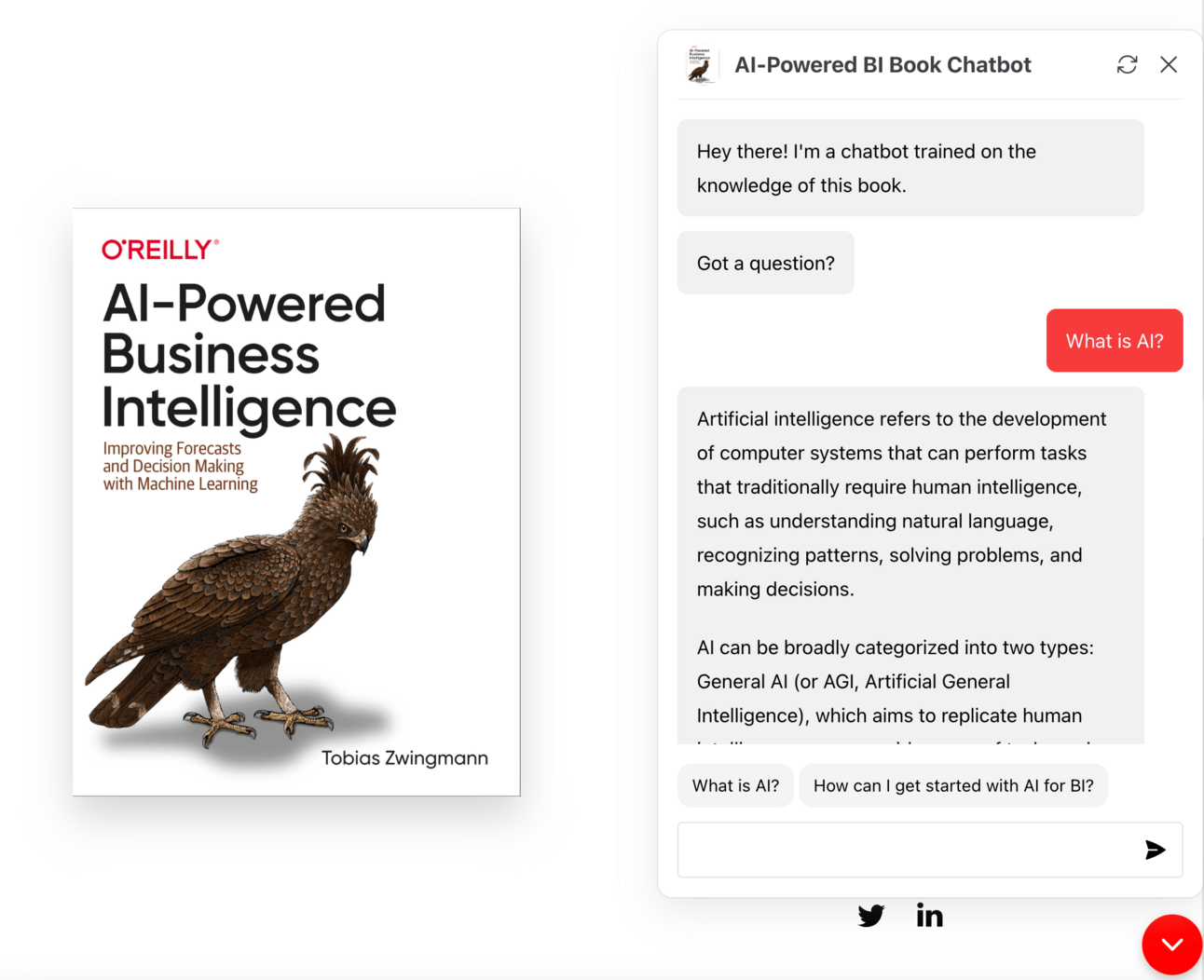

If you want to see this technology in action, feel free to play around with this chatbot that I connected to the content of my book!

Conclusion

As you can see, RAG allows us to build conversational interfaces powered by LLMs like GPT-4 while keeping full control over the data they access.

These tools allow businesses to automate simple yet tedious support queries and focus human agents on more complex interactions.

If you want to implement this yourself, I'd be happy to help you get this use case off the ground.

In fact, I'll build your first chatbot prototype for FREE. (Yes, you read that right.)

Just reply to this email by Monday and we'll set up a call.

See you next week!

Tobias

Want to learn more?

Read my book: Improve your AI/ML skills and apply them to real-world use cases with AI-Powered Business Intelligence (O'Reilly).

Book a meeting: Let's find out how I can help you over a coffee chat (use code FREEFLOW to book the call for free).