Hey there,

If you've ever conducted a survey, you know the untold truth about survey design:

“The gold’s in the open answers”.

But historically, open answers were also the hardest part of a survey to analyze. Luckily, with the help of AI, we can change the way we approach surveys and make them easier for users and analysts alike.

Let's dive in!

📊 Talking about surveys… let’s run a quick pulse check! 📊

What’s currently your biggest struggle with AI?

(Or reply with your answer)

What’s wrong with most surveys

Most surveys are designed by thinking backwards from what we as a business want to know, without thinking about the user.

This typically results in questions like this:

“How did you like the following 5 topics? Rate them each on a scale from 0 to 5.”

Questions like this shift the workload from the analyst to the user, forcing them to answer in categories that are easy to analyse across many responses.

But questions like this are also the reason why many users hate taking surveys or just tick off any box to proceed to the next question.

If we zoom out a bit, what we actually wanted to ask was probably:

“Which topics did you like most?” or “Which topics didn’t meet your expectations?” and let people answer freely.

So why didn’t we do that?

The problem with these 'open' text questions was that they were virtually impossible to analyze on a large scale.

Until now.

With Large Language Models like GPT-4, we can easily process responses like these and get the insights we want.

To find out how, let’s consider a practical example.

Situation

Let's say we work for a hypothetical B2B consultancy specialising in organisational development. The company has just held its annual conference "Embracing Change". (The title doesn't really matter, but I want to give you the full picture).

After the event, they sent out a post-event survey to all attendees with the following questions:

1. What is the size of your organization? (Single-choice)
2. Which industry does your organization operate in? (Single-choice)
3. What is your role within your organization? (Single-choice)
4. How would you rate your overall experience of the event? (1-5 scale)
5. How likely are you to recommend our event to a colleague or a contact within your industry? (0-10 scale)
6. How did you learn about our event? (Open text)
7. What did you like or dislike about the event? Please provide specific examples or topics. (Open text)

Here’s a sample of what this survey could look like:

Quick crash course on survey design:

Questions 1-3 are so-called demographic questions. Their goal is to analyze responses by different segments to get deeper insights.

Questions 4-5 are so-called quantitative questions. They are easy to analyze as people respond on a numeric scale. This way, we can easily calculate things like the “average satisfaction” per industry.

Questions 6-7 are qualitative questions. This is where the meat is. These open-ended questions allow us to identify areas of criticism, common themes that customers have highlighted, or generally things that will help us improve.

Today, we will focus on analyzing qualitative questions like 6 and 7 with AI.

Use Case Solution

Suppose we export all of our survey responses to an Excel spreadsheet (I know you're doing it too!).

To keep things simple, we are actually going to use Google Sheets for this exercise, rather than Excel, so that I can share the actual data with you in the resources below.

However, everything we're doing here also works in Excel (check out this webinar to learn how).

Let's draw a simple use case diagram of how things work. As always, we're looking at 3 layers - user, analysis and data layer:

In the data layer, we're importing tabular data into spreadsheet software like Excel or Google Sheets.

In the analysis layer, we query a Large Language Model from a plugin (or script) within our spreadsheet.

And everything we do is presented to the user in the same application.

Let's take this step by step.

Step 1: Importing data into Google Sheets

First of all, make sure your data is imported to the spreadsheet in tidy format.

Tidy format means that every row is an observation (a survey response), every column is a variable (a question) and every cell is a value (the response to that question).

Depending on how your survey data is stored, this might need a little preprocessing. Are you stuck doing this? Well, here’s a ChatGPT prompt that will help you.
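To make the preprocessing step more concrete, here is a minimal Python sketch. It assumes (and this is only an assumption, your export may look different) that the survey tool gives you "long" data with one record per respondent and question. The function name `to_tidy` and the sample records are made up for illustration.

```python
from collections import defaultdict

def to_tidy(long_rows):
    """Pivot (respondent_id, question, answer) records into one dict per respondent."""
    by_respondent = defaultdict(dict)
    for respondent_id, question, answer in long_rows:
        by_respondent[respondent_id][question] = answer

    # Collect every question in first-appearance order so all rows share the same columns.
    columns = []
    for _, question, _ in long_rows:
        if question not in columns:
            columns.append(question)

    # One row per observation, one column per variable, one value per cell.
    return [
        {"respondent_id": rid, **{q: answers.get(q, "") for q in columns}}
        for rid, answers in by_respondent.items()
    ]

# Hypothetical sample export, not real survey data.
long_rows = [
    (1, "role", "Executive Management"),
    (1, "nps", "9"),
    (2, "role", "Middle Management"),
    (2, "nps", "6"),
    (2, "feedback", "Great speakers, but the rooms were too small."),
]
tidy = to_tidy(long_rows)
```

Each dict in `tidy` is one survey response. Unanswered questions become empty strings, so every row has the same columns when you paste it into a sheet.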

Step 2: Bringing in AI

Now that our data is organized neatly, we can use AI to analyze it. We will send each cell value to an API, which will analyze the value based on our prompt.

In Google Sheets, you can install a free plugin called GPT for Sheets and Docs. All you have to do is install it, provide your OpenAI API key, and you’re ready to use the following function in Google Sheets:

=GPT([prompt], [value])

PS: The plugin is also available for Excel.

“But hey, I’m not going to send my sensitive survey data to some random plugin!”

You’re right.

Using a plugin like this is great for testing things out with non-critical data.

For more sensitive scenarios, you might consider deploying a secured instance of an LLM like GPT-4 on Azure and then using a custom Google Sheets function or Python/R in Excel to access the model. (If you need help setting this up, hit reply and let me know!)
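If you go the Python route, the core pattern is small. Here is a rough sketch that mimics the prompt-plus-value idea of the spreadsheet function. The names `gpt`, `gpt_column` and `ask_llm` are hypothetical, and `ask_llm` stands for whatever function calls your own model deployment. I'm using a fake stand-in here so the example runs without any API key.

```python
from typing import Callable, Iterable, List

def gpt(prompt: str, value: str, ask_llm: Callable[[str], str]) -> str:
    """Combine an instruction and one cell value into a single request."""
    if not value.strip():
        return ""  # skip empty cells instead of sending a request
    return ask_llm(f"{prompt}\n\nText: {value}").strip()

def gpt_column(prompt: str, values: Iterable[str], ask_llm: Callable[[str], str]) -> List[str]:
    """Apply the same prompt to every cell of a column."""
    return [gpt(prompt, value, ask_llm) for value in values]

# Stand-in for a real model call (assumption: replace with your own client code).
def fake_llm(request: str) -> str:
    return "positive" if "great" in request.lower() else "negative"

labels = gpt_column(
    "Classify the sentiment as positive or negative.",
    ["Great keynote!", "", "Too long."],
    fake_llm,
)
```

Keeping the model call behind a plain function makes it easy to swap the plugin, a public API, or an internal deployment without touching the rest of the analysis.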

But before we worry about these advanced things, let’s test things out first. In the end, it will be the same model, whether we access it internally or externally.

Step 3: Parsing the responses with AI

Once we’ve set up our plugin, the rest is really easy.

For example, let’s say we want to analyze the question “How did you learn about our event?” and map the outputs to a discrete set of categories such as Social Media, Online Marketing, Word of Mouth, Internal Referral, and Other.

Then our formula would look like this:

When we run this formula, we would see a result like this (in column H):

Congrats - you have just turned a free text input into a categorical variable!
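One practical caveat: models don't always answer with exactly the label you asked for. Here is a small Python sketch of how you could build the instruction from the category list and clean up the reply afterwards. The prompt wording and the helper names are my own illustration, not the exact formula used in the sheet.

```python
CATEGORIES = ["Social Media", "Online Marketing", "Word of Mouth", "Internal Referral", "Other"]

def build_category_prompt(categories):
    """Create an instruction that restricts the answer to a fixed set of labels."""
    options = ", ".join(categories)
    return (
        "Classify how the respondent learned about the event. "
        f"Answer with exactly one of: {options}."
    )

def normalize_category(model_reply, categories, fallback="Other"):
    """Map a raw model reply onto the allowed categories, ignoring case and stray punctuation."""
    cleaned = model_reply.strip().strip(".\"'").lower()
    for category in categories:
        if cleaned == category.lower():
            return category
    return fallback  # anything unexpected lands in the catch-all bucket

prompt = build_category_prompt(CATEGORIES)
```

For example, `normalize_category(" word of mouth.", CATEGORIES)` gives back the clean label `Word of Mouth`, while an off-list reply such as `LinkedIn ad` falls back to `Other`.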

But we can also use an LLM to return numbers, not just text.

Consider the following example for analyzing the feedback question:

As a result, we would get a list of numeric values like this:

Congrats! You have just turned raw text into a numerical variable which we can easily analyze.
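When you ask a model for a number, it sometimes wraps it in extra words. Here is a minimal Python sketch for pulling the score out of a reply. I'm assuming a sentiment scale from -1 (negative) to 1 (positive), which is just an example. Adjust `low` and `high` to whatever scale your prompt asks for.

```python
import re
from typing import Optional

def parse_score(model_reply: str, low: float = -1.0, high: float = 1.0) -> Optional[float]:
    """Extract the first number from a model reply and clamp it to the expected range."""
    match = re.search(r"-?\d+(?:\.\d+)?", model_reply)
    if match is None:
        return None  # no number found, leave the cell empty for manual review
    return max(low, min(high, float(match.group())))
```

Returning `None` for replies without a number keeps bad values out of your averages instead of silently counting them as zero.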

Aren’t LLMs nice?

Step 4: Deep-dive for more insights

Now that we have our variables, we can use them to dig for deeper insights.

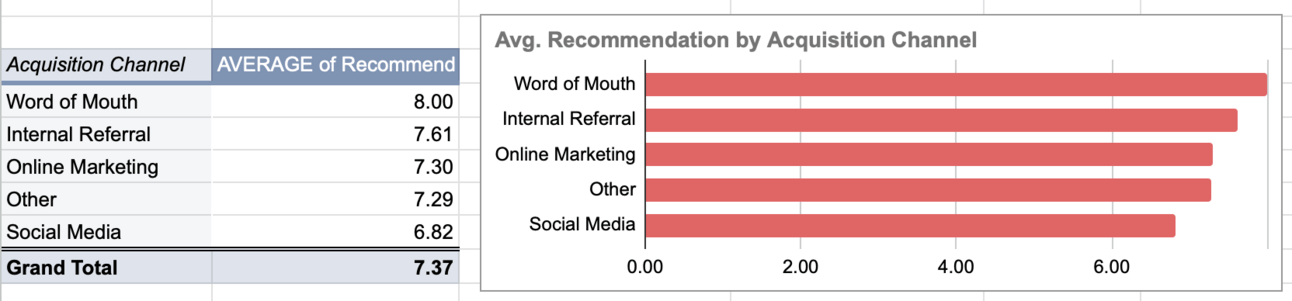

For example, we can make a pivot table that shows the average recommendation based on how customers found us. This will show that word of mouth is the top channel for generating recommendations, even more than internal referrals!

(In a real scenario, we would test whether these differences are statistically significant, but for now, you get the idea.)
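If you prefer code over pivot tables, the same aggregation is only a few lines of Python. This is just a sketch: the rows below are invented for illustration and are not the survey data from the sheet, and the column names `channel` and `recommend` are assumptions.

```python
from collections import defaultdict
from statistics import mean

def average_by(rows, group_key, value_key):
    """Group rows by one column and average another, like a simple pivot table."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])
    return {group: round(mean(values), 2) for group, values in groups.items()}

# Made-up example rows.
rows = [
    {"channel": "Word of Mouth", "recommend": 9},
    {"channel": "Word of Mouth", "recommend": 10},
    {"channel": "Social Media", "recommend": 6},
    {"channel": "Social Media", "recommend": 7},
    {"channel": "Internal Referral", "recommend": 8},
]
averages = average_by(rows, "channel", "recommend")
```

The same function works for any pair of columns, for example role and sentiment score.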

Or we could take a look at the open feedback answers and summarize the sentiment values by role. This way, we could see that Middle Management seems to have the widest spread overall, while Individual Contributors tend to give the most positive feedback on average:

Let’s go even further!

Let's look at the feedback from Executive Management attendees and see what key themes they are suggesting. (In Google Sheets, simply double-click the row in the pivot table and it will create a copy of that data.)

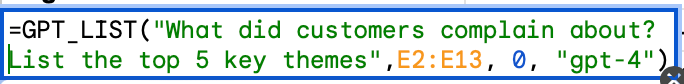

Using the GPT_LIST function (and GPT-4 in this case for better performance), we can pass a whole range of variables (e.g., all responses from a subset) and it returns a list of values as output.

Here’s an example:

Do this for negative and positive comments (using the same open text answers) and you’ll get this as a result:

To put that in context: Even for a medium-sized survey (say <1,000 responses) this would have easily taken us hours or days to analyze. Now, we just did this in 5 seconds.

Note: The number of values you can feed into this prompt is limited by the model’s context limit. For GPT-3.5, the limit is 16K tokens (about 11K words). If we exceed this limit, we can segment the data further, shorten the responses, or use a model with a larger context window like Claude 2, which supports up to 100K tokens. So it’s still a good idea to limit the number of characters that respondents can use per answer. We want their honest feedback, but they shouldn’t write novels here.
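To get a feeling for when you hit that limit, here is a rough Python sketch that splits responses into batches under a token budget. The token estimate is only a rule of thumb (about 0.7 words per token, in line with the 16K tokens to 11K words ratio above). Real tokenizers will give different counts, and both helper names are hypothetical.

```python
def estimate_tokens(text):
    """Very rough token estimate, assuming about 0.7 words per token."""
    return len(text.split()) * 10 // 7 + 1

def batch_responses(responses, max_tokens):
    """Split responses into consecutive batches whose estimated size stays within max_tokens."""
    batches, current, used = [], [], 0
    for response in responses:
        cost = estimate_tokens(response)
        # Start a new batch when adding this response would exceed the budget.
        if current and used + cost > max_tokens:
            batches.append(current)
            current, used = [], 0
        # A single oversized response still gets its own batch; it is not truncated here.
        current.append(response)
        used += cost
    if current:
        batches.append(current)
    return batches

responses = ["one two three four five six seven"] * 3
batches = batch_responses(responses, max_tokens=25)
```

In practice, set `max_tokens` well below the model's limit, since the instructions and the model's answer also count towards the context.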

If you'd like to play around with this data, feel free to use the spreadsheet in the resources below!

Conclusion

We have seen how we can use AI to easily analyze unstructured text data from surveys. I would go so far as to say that this technology should change the default way we approach surveys.

Instead of avoiding open-ended text questions, we should embrace them.

A good survey could be as few as 2-3 well-written questions followed by some demographic information.

I would probably take more surveys then.

Hope you enjoyed today's use case!

See you next week!

Tobias

PS: I’m open for 1 more client until November. If you want me to help you identify and realize AI opportunities for your business, reply “AI”!

Resources

Want to learn more?

Book a meeting: Let's find out how I can help you over a coffee chat (use code FREEFLOW to book the call for free).

Read my book: Improve your AI/ML skills and apply them to real-world use cases with AI-Powered Business Intelligence (O'Reilly).