Read time: 5 minutes

Hey there,

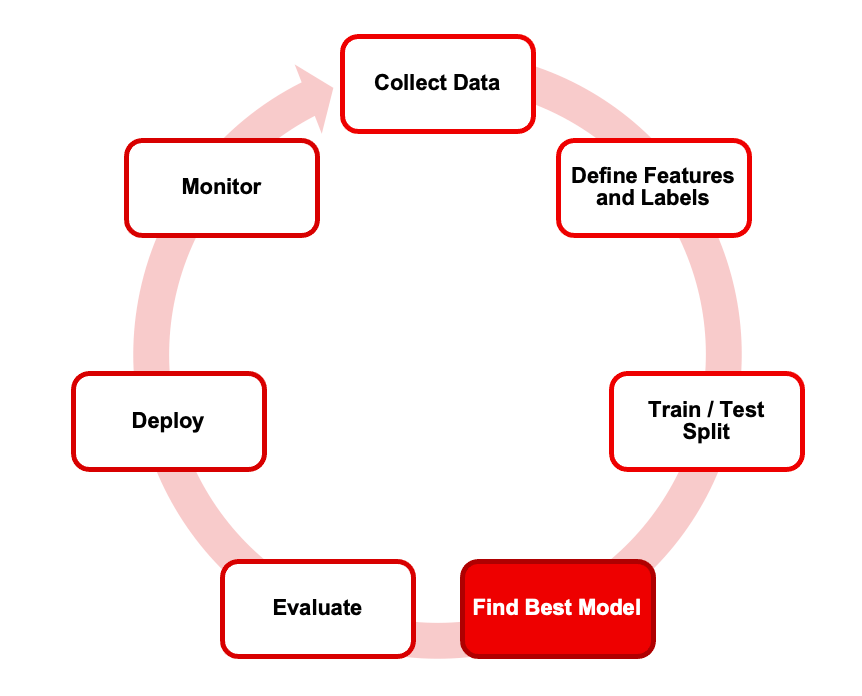

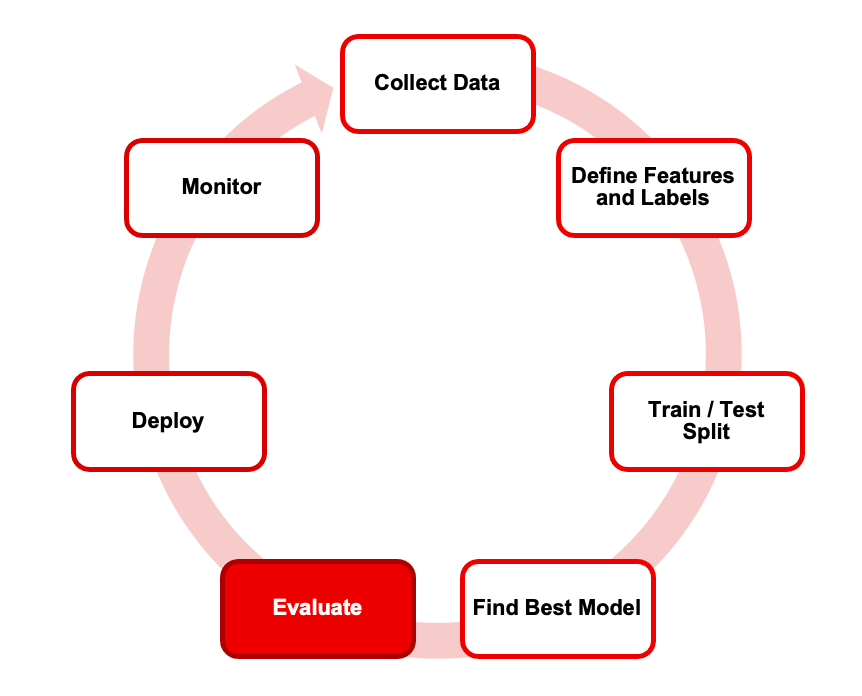

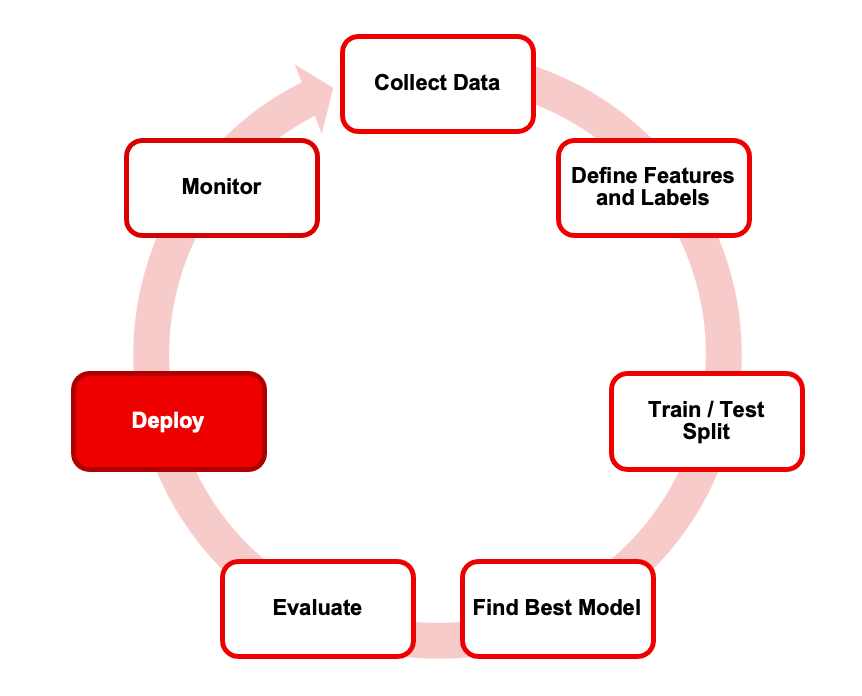

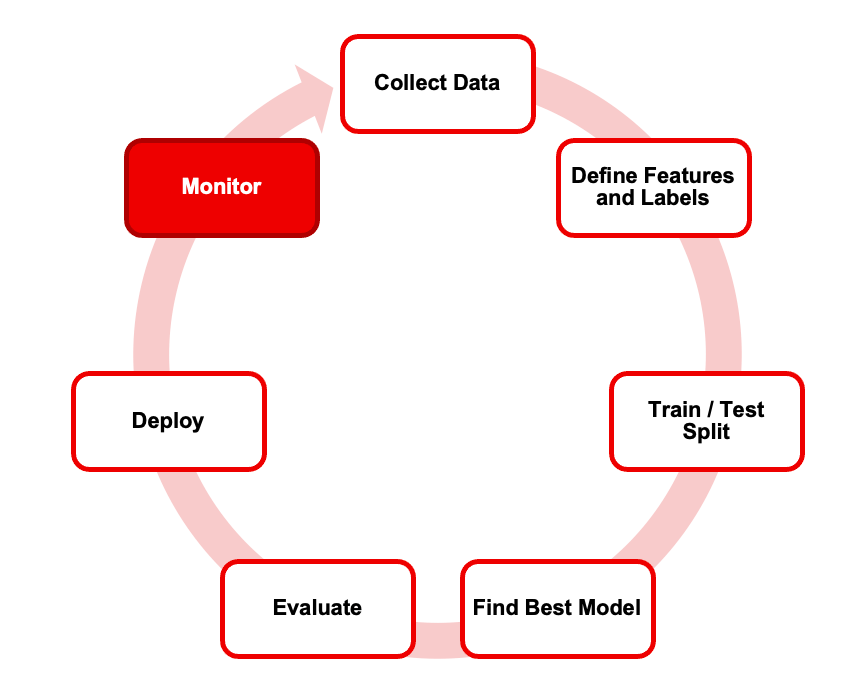

Today I'll give you an overview of the supervised machine learning process so you know the key steps required to build supervised ML models.

Supervised machine learning is the most common type of machine learning in business. Understanding the basics will help you either build ML services yourself or manage ML projects effectively.

However, many people still struggle to get the basics right.

Most ML problems in business are supervised learning problems

The most popular machine learning use cases, such as demand forecasting, fraud detection, lead scoring, predictive maintenance, and sentiment analysis, are all examples of supervised ML.

In supervised learning, the model is trained on labeled data and makes predictions on new, unseen data based on the patterns it learned from that labeled data.

As simple as it sounds, supervised machine learning is still a mystery to most people.

Here's why:

They don't know which use cases are a good fit

They don't know what the training data must look like

They are overwhelmed by the different algorithms

They don't know how to evaluate model performance

They find it difficult to improve model performance

Since I talk a lot about use cases in other issues of my newsletter, I want to focus on the other problems today.

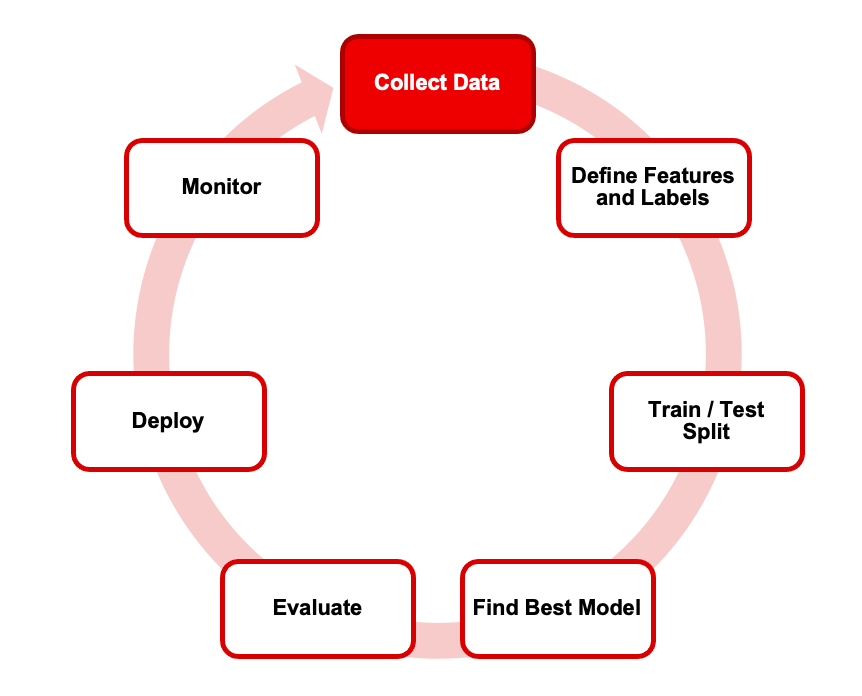

So let's dive into how the supervised ML process works, step by step:

Step 1: Collect and prepare data

To live up to their name, machine learning algorithms need data.

More specifically, tidy data.

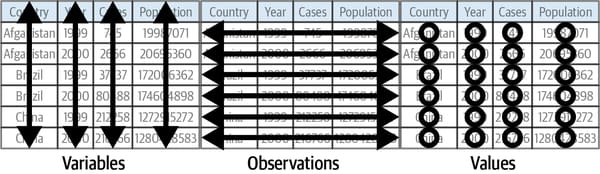

Tidy data has three characteristics:

Every observation is in its own row.

Every variable is in its own column.

Every measurement is in its own cell.

Organizing and cleaning data is by far the most tedious step in the entire ML workflow.

Example: Let's say you want to build a use case around predicting house prices. Then each row in your dataset could be a separate house and each column could be an attribute for each house (e.g. number of bedrooms, size, age).
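As a rough sketch of what this looks like in code, the housing table could be held as a list of rows, one dictionary per house. The column names, the values, and the `is_tidy` helper are all made up for illustration. The check only covers the basics: every row has the same columns and no cell is missing.

```python
# Hypothetical housing records, invented purely for illustration.
houses = [
    {"bedrooms": 3, "size_sqm": 120, "age_years": 15, "price": 250_000},
    {"bedrooms": 2, "size_sqm": 80, "age_years": 30, "price": 170_000},
    {"bedrooms": 4, "size_sqm": 200, "age_years": 5, "price": 410_000},
]


def is_tidy(rows):
    """Basic tidiness check: same columns in every row, no missing cells."""
    if not rows:
        return True
    columns = set(rows[0])
    for row in rows:
        if set(row) != columns:
            return False  # a variable is missing or an extra one appeared
        if any(value is None for value in row.values()):
            return False  # an empty cell
    return True


print(is_tidy(houses))
```

A row with missing columns, such as `{"bedrooms": 1}`, would make the check fail, which is usually a sign that more cleaning is needed before training.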

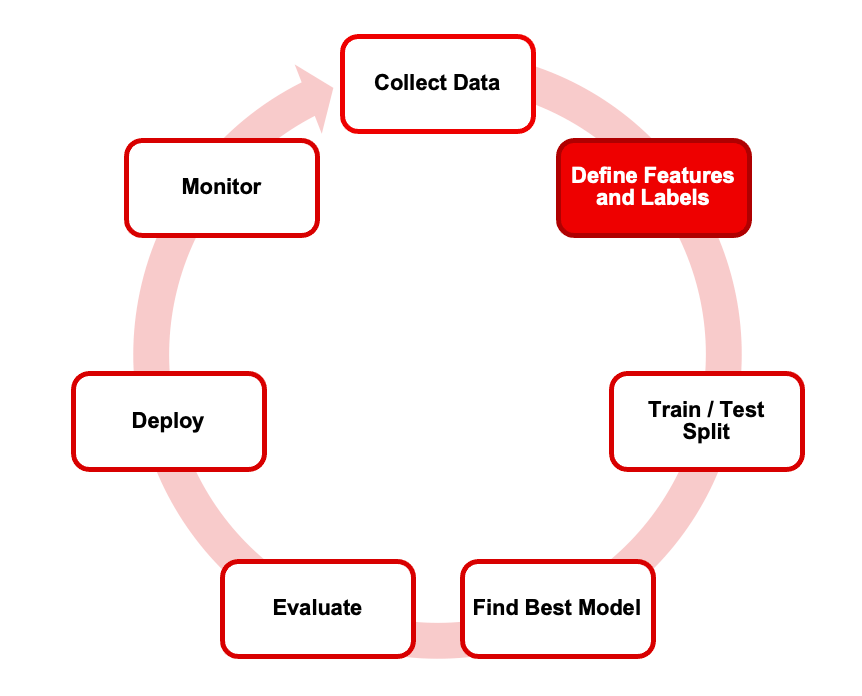

Step 2: Identify features and labels

In supervised ML, you need historical examples for which the true value of whatever you're trying to predict is known, each described by some attributes.

These two pieces of information are called labels and features.

The label (aka target, dependent variable, or just output) is the variable that you want to predict.

The features (aka attributes, inputs, or independent variables) are the variables that describe your observations.

All your algorithm is trying to do is build a model that predicts the labels y given the features x.

Example: If you want to predict house prices, the house price would be your label, and any other variables such as bedrooms, size, and location could be your features.
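In code, separating features from the label is a small step. The sketch below assumes rows stored as dictionaries. The `split_features_label` helper, the column names, and the values are hypothetical.

```python
# Hypothetical housing records, invented purely for illustration.
houses = [
    {"bedrooms": 3, "size_sqm": 120, "location": "city", "price": 250_000},
    {"bedrooms": 2, "size_sqm": 80, "location": "suburb", "price": 170_000},
]


def split_features_label(rows, label_column):
    """Return (X, y): the features of each row and the matching labels."""
    X = [{k: v for k, v in row.items() if k != label_column} for row in rows]
    y = [row[label_column] for row in rows]
    return X, y


X, y = split_features_label(houses, label_column="price")
print(X[0])  # features of the first house, without the price
print(y)     # the labels the model should learn to predict
```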

The data type of the label defines your machine learning task.

For numeric labels, the task is called regression. For categorical labels, it's called classification. The two tasks use different algorithms.
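The rule of thumb above can be written down as a small heuristic. The `infer_task` function is a hypothetical helper, not part of any library. It would misjudge categories that happen to be coded as numbers (for example 0 and 1), so treat it as a first guess only.

```python
from numbers import Number


def infer_task(labels):
    """Guess the ML task from the label values (a heuristic, not a rule)."""
    # bool is a subclass of int in Python, so it is excluded explicitly.
    numeric = all(
        isinstance(value, Number) and not isinstance(value, bool)
        for value in labels
    )
    return "regression" if numeric else "classification"


print(infer_task([250_000, 170_000.5]))   # numeric labels such as prices
print(infer_task(["fraud", "no fraud"]))  # categorical labels
```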

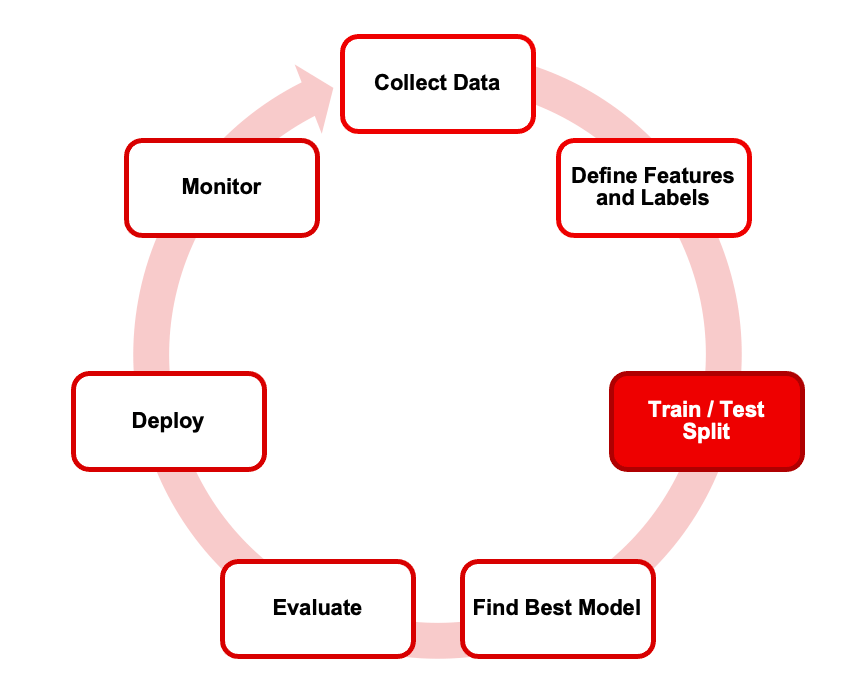

Step 3: Split your data into training and test sets

To build a predictive model, we need to split our data into (at least) two parts:

The training set is the part of the historical data set that will be used to train the ML model (typically between 70% and 80% of the data).

The test set (or holdout set) is the part of your data that will be used for the final evaluation of your ML model.

Splitting the data is important because you want to make sure that the model performs well not only on the data it knows, but also on new, unseen data from the same distribution.

The performance of your model on the test set will be your performance indicator for new, unseen data.

(If you're using AutoML, you usually don't need to worry about this step because the AutoML tool does it automatically.)

Sometimes you'll still need to adjust the split manually (for example, in the case of time series data).

Example: If you want to predict house prices without considering a time dimension, you could select a random sample from your entire housing dataset and use it as a test set. However, if you want to predict the development of a house pricing trend, you'd probably define a rolling time window (e.g., 1 month) to use as a holdout set.
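Both kinds of split can be sketched in a few lines. The function names, the `sold_on` column, the 20% test fraction, and the sample data are assumptions chosen for illustration. Libraries and AutoML tools offer their own versions of this.

```python
import random
from datetime import date


def random_split(rows, test_fraction=0.2, seed=42):
    """Shuffle the rows and hold out a random fraction as the test set."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def time_split(rows, cutoff, date_key="sold_on"):
    """Train on everything before the cutoff, test on everything from it onward."""
    train = [row for row in rows if row[date_key] < cutoff]
    test = [row for row in rows if row[date_key] >= cutoff]
    return train, test


# Hypothetical sales: one per month from January to October 2023.
sales = [{"id": i, "sold_on": date(2023, month, 1)}
         for i, month in enumerate(range(1, 11))]

train, test = random_split(sales)
past, holdout = time_split(sales, cutoff=date(2023, 10, 1))
print(len(train), len(test), len(past), len(holdout))
```

The time-based version never lets the model see data from after the cutoff, which mirrors how it would be used to forecast in practice.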

Step 4: Use algorithms to find the best model

In this step, you try to find the model that best represents your data and has the highest predictive power.

The model is the end result of your ML training process. It's an arbitrarily complex function that calculates an output value for any given set of input values.

Here's a simple example:

y = 200x + 1000

This is the equation for a simple linear regression model.

Given any input value for x (e.g., house size), this formula would calculate the final price y by multiplying the size by 200 and then adding 1,000.
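Written as code, this model is just a function. The name `predict_price` is made up; the numbers 200 and 1,000 come straight from the formula above.

```python
def predict_price(size):
    """Toy linear model from the text: y = 200x + 1000."""
    return 200 * size + 1000


print(predict_price(100))  # 200 * 100 + 1000 = 21000
```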

Of course, real ML models are more complex than that, but the idea remains the same.

So, how can a computer, given some historical input and output data, come up with such a formula?

Two components are needed:

First, we need to provide the computer with the algorithm (in this case, a linear regression algorithm). This tells the computer the output must look something like this:

y = b1x + b0

Second, we need the historical training data. The computer uses it to find the best parameters b1 and b0 for this problem.

This process of parameter estimation is called learning, or training, in ML.
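To make "learning" concrete, here is a minimal sketch that estimates b1 and b0 with ordinary least squares, one common way to fit a linear regression (the text does not say which method is used, so this is an assumption). The function name and the training data are hypothetical. The prices were generated from y = 200x + 1000, so the fit should recover roughly those parameters.

```python
def fit_linear_regression(xs, ys):
    """Estimate slope b1 and intercept b0 by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: how x and y vary together, divided by how much x varies.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b1 = sxy / sxx
    # Intercept: the fitted line passes through the point of means.
    b0 = mean_y - b1 * mean_x
    return b1, b0


# Hypothetical training data generated from y = 200x + 1000.
sizes = [50, 80, 100, 120, 150]
prices = [200 * size + 1000 for size in sizes]

b1, b0 = fit_linear_regression(sizes, prices)
print(b1, b0)
```

Real data is noisy, so the fitted line will not match every point exactly. The parameters are then the ones that minimize the squared errors across the training set.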

That's the core idea.

We can achieve and accelerate this learning process for big datasets in several ways, but let's ignore that for now.

The only thing you need to know is that you need to define an ML algorithm to train a model on training data.

This is where experts like data scientists or ML experts come in.

Or AutoML, if you prefer.

(Building a model used to be the "exciting" part of data science. Nowadays, however, this area is usually considered solved because there are so many algorithms available. The goal is "just" to pick the right one and find the best set of parameters. The focus is now on getting better-quality data.)

Step 5: Evaluate the Final Model

Once our training process is complete, one model will show the best performance on the test set.

Different evaluation criteria are used for different ML problems, and there are many great resources available online.

(Again, if you're using AutoML, it will usually suggest a good first shot evaluation metric.)
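As an illustration, here are three widely used metrics: mean absolute error and root mean squared error for regression, and accuracy for classification. Which one fits best depends on your problem. The sample values are made up.

```python
import math


def mean_absolute_error(y_true, y_pred):
    """Average size of the prediction errors, in the label's own units."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def root_mean_squared_error(y_true, y_pred):
    """Like MAE, but large errors are penalized more heavily."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return math.sqrt(mse)


def accuracy(y_true, y_pred):
    """Share of predictions that match the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical test-set labels and predictions.
print(mean_absolute_error([100, 200], [110, 190]))
print(root_mean_squared_error([100, 200], [110, 190]))
print(accuracy(["spam", "ham", "spam"], ["spam", "ham", "ham"]))
```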

Step 6: Deploy

Once we have our model, we need to deploy it somewhere so that users or applications can use it. Using the deployed model to produce outputs is called inference, prediction, or scoring.

At this stage, your model isn't learning; it's just calculating outputs as it receives new input values.

How this works depends on your setup.

Often, the model is hosted as an HTTP API that takes input data and returns predictions (online prediction). Alternatively, the model can be used to score a lot of data at once, which is called batch prediction.

Here's a great post on LinkedIn that compares the different approaches:

Example: If we deployed a model to predict house prices, we could give that model some attributes about a new house (which was not in the training data) and the model would return a prediction of the house value based on those inputs.
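The difference between online and batch prediction can be sketched without any web framework. Below, `handle_request` stands in for what an HTTP endpoint would do with a JSON request body, and `predict_batch` scores many inputs at once. All function names and the JSON field names are hypothetical, and the parameters are the toy values from the formula in Step 4.

```python
import json

# Parameters of the toy model from Step 4 (y = 200x + 1000).
B1, B0 = 200, 1000


def predict_price(size):
    """Inference only: the parameters are fixed, nothing is learned here."""
    return B1 * size + B0


def handle_request(body):
    """Online prediction: one JSON request in, one JSON response out."""
    features = json.loads(body)
    return json.dumps({"predicted_price": predict_price(features["size"])})


def predict_batch(sizes):
    """Batch prediction: score a whole list of inputs in one go."""
    return [predict_price(size) for size in sizes]


print(handle_request('{"size": 100}'))
print(predict_batch([50, 100, 150]))
```

In a real setup, the request handler would sit behind a web server, and the batch job would typically read from and write to a database or file.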

Step 7: Perform Maintenance

Although model development is formally complete after deployment, the work never really ends.

As data patterns change, models need to be retrained, and we need to consider whether the initial performance of the ML model can be maintained over time.

ML models require a significant amount of ongoing maintenance, which you should be aware of before you even start machine learning.
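One simple way to watch for this is to compare the error on recent data against the error measured at deployment time. The sketch below is an assumption about how such a check could look, not a standard recipe. The `needs_retraining` helper, the 20% tolerance, and the numbers are all hypothetical.

```python
def mean_absolute_error(y_true, y_pred):
    """Average size of the prediction errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def needs_retraining(baseline_mae, recent_true, recent_pred, tolerance=0.2):
    """Flag the model if recent error exceeds the baseline by more than the tolerance."""
    recent_mae = mean_absolute_error(recent_true, recent_pred)
    return recent_mae > baseline_mae * (1 + tolerance)


# Hypothetical numbers: the error at deployment time was 10,000.
stable = needs_retraining(10_000, [200_000, 300_000], [190_000, 310_000])
drifted = needs_retraining(10_000, [200_000, 300_000], [170_000, 330_000])
print(stable, drifted)
```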

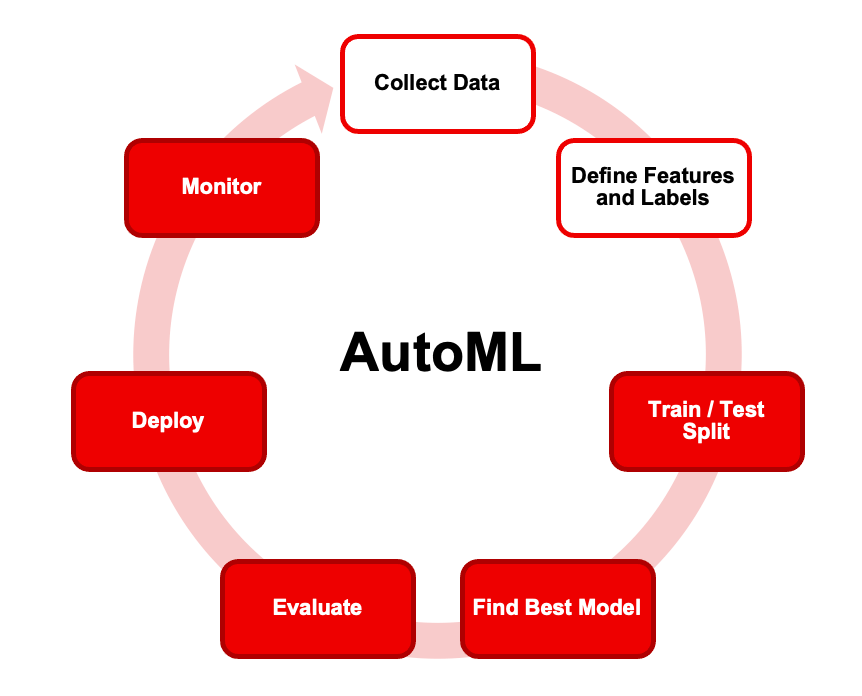

Where does AutoML fit in?

Now that you have a high-level understanding of how supervised machine learning works in general, let's take a quick look at how AutoML fits into the concept.

AutoML abstracts and automates most of the steps in the supervised machine learning process:

Find the best algorithm

Find the best learning parameters

Optimize the features (feature engineering)

Split your data into training and test sets

Deploy your model & monitor performance

This leaves you to do the following:

Find the right use case

Collect the right data

Prepare the data

Define features

In short, AutoML is more than model training, but it does not cover the whole process!

So what does this mean?

If you're a 'data expert' in your organisation (hello, BI folks!), chances are you can challenge even seasoned data scientists by leveraging your data and domain expertise combined with very basic machine learning knowledge.

This could easily boost your career and you don't have to wait for anyone's permission.

If you want to learn more about AutoML, check out my quick AutoML Crash Course here.

That's all for now!

As always, thanks for reading!

I hope you enjoyed this short tutorial!

What did you find most useful?

I'll be back again next week with another exciting AI use case for BI, so stay tuned for that!

See you next Friday,

Tobias

Resources

If you liked this content then check out my book AI-Powered Business Intelligence (O’Reilly). You can read it in full detail here: https://www.aipoweredbi.com