This week, I co-hosted the first-ever Digital, Data, Analytics, and AI (D2A2) Executive Roundtable Dinner in Toronto. Prashanth Southekal and I brought together 15+ data and analytics leaders from Canada's leading banks, media, transport, and tech companies in the area.

Besides views and food, I was there to listen and learn. The discussion I moderated was about AI ROI. 80% of the room said they couldn’t track it properly. The 20% who could were mostly running classical ML on data-heavy use cases like ads or fraud.

Why is it so hard to track ROI when the technology has never been more accessible? And what can we do about it?

Let's find out.

What nobody wants to admit publicly

Generative AI really broke ROI measurement. I can hardly think of another technology that was so easy to deploy on one hand, and so hard to quantify on the other.

In the "old" world of AI – Classical ML – the rules were different. Most use cases had a high bar of entry. You had to train a model, which made the ROI calculation a necessity. How else would you justify spending $50K+ on consultants or hiring internal staff to collect data and build a model?

But since ChatGPT, you don't need a $50K budget anymore – just a $20/month subscription to get started.

Which is how the problem began in the first place.

GenAI dropped overnight into fuzzy human workflows where nobody measured the "before".

The tools got way easier, and that's exactly what made measurement much harder.

A lot of companies fell into the "if ChatGPT can do this, I can just automate it a little more and scale the use case" trap.

But the "automate it just a little more" part often turned into a cost sink. A year later, leadership asks "what did we get?" – and nobody has an answer.

What I learned is that this isn’t a measurement problem, but a design problem.

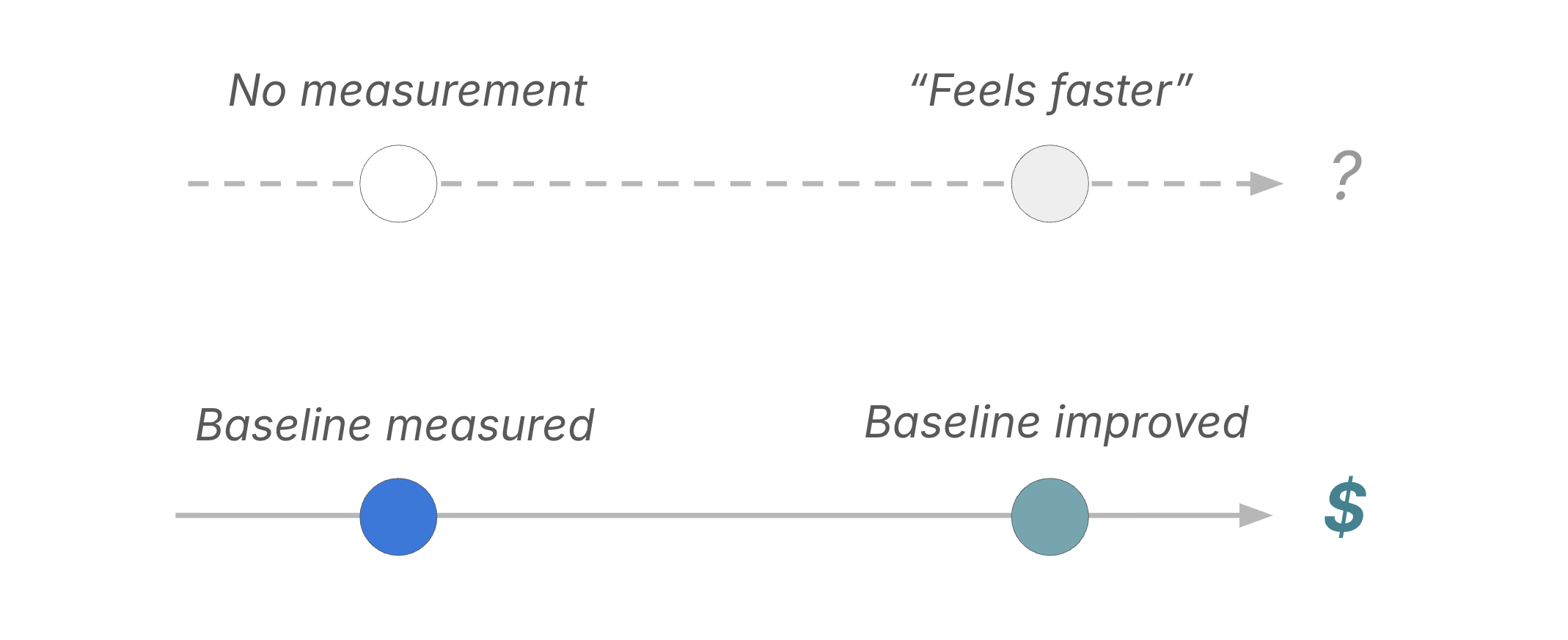

These companies built before they baselined.

No Baseline = no ROI

Before you ever spend time and money to build your first AI solution, ask three questions first:

What's the default action today, without AI?

Are we getting better with AI in the mix?

How much better – and what do we pay for it?

If you skip the baseline part, you're not measuring, you're guessing (or fabricating, after the fact, the metrics your stakeholders want to hear). Reverse-engineering value after launch doesn't work. You can't compare "with AI" to "without AI" if you never wrote down "without AI".
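The three questions map onto a very small calculation. Here is a minimal sketch, assuming both the baseline and the with-AI result can be expressed as a cost per period. The function name `simple_roi` and all figures are hypothetical placeholders, not numbers from the roundtable.

```python
def simple_roi(baseline_cost: float, cost_with_ai: float, ai_cost: float) -> float:
    """Return ROI as a fraction: (savings - AI spend) / AI spend.

    baseline_cost: what the process costs today, without AI (question 1)
    cost_with_ai:  what the same process costs with AI in the mix (question 2)
    ai_cost:       what you pay for the AI solution itself (question 3)
    """
    if ai_cost <= 0:
        raise ValueError("ai_cost must be positive")
    savings = baseline_cost - cost_with_ai
    return (savings - ai_cost) / ai_cost


# Hypothetical monthly figures, for illustration only.
roi = simple_roi(baseline_cost=40_000, cost_with_ai=30_000, ai_cost=4_000)
print(f"ROI: {roi:.0%}")
```

Notice that `baseline_cost` is the first argument. If nobody wrote it down before the build, every number that comes out of this function is a guess.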

Productivity AI breaks the baseline rule

Defining a baseline sounds easy, but in practice it can be incredibly hard, especially for Productivity AI. The honest reason most teams can't measure ROI on Copilot, ChatGPT & co. is that building a solid baseline would require surveilling people. You'd need to track things like:

Time per email

Drafts per hour

Edits per document

Minutes per meeting summary

But nobody wants that infrastructure. Neither ethically, nor technically. In most organizations I work with, it would be forbidden anyway.

So the baseline never exists.

Which means ROI never exists.

This is a category error, not a measurement failure.

As I wrote previously, I see Productivity AI mainly as an operating expense. Like giving out free coffee. You don't run an ROI analysis on coffee.

Engineered AI, however, is a different category entirely. The baseline is already sitting in your systems. Ticket logs. Invoice timestamps. Error rates. Cycle times. Conversion rates. You just have to extract it. Look for it, before you build.

In other words:

If the baseline exists in a system, it's a candidate for ROI measurement. If the only way to baseline it is to surveil humans, it's not.

Examples

Let’s see what "baseline first" actually looks like in practice.

Example 1: Customer Support

Non-baselined approach:

AI assistants in customer support are cool

We gave 5 support agents access to Microsoft 365 Copilot

They say "responding to tickets got way faster and better!"

The problem: Even if the effect is real, it dissolves into the day. Nothing changed in any system you can point to.

Baselined approach:

Count the number of support tickets hitting your inbox per week (the baseline)

Deploy an AI self-service chatbot in front of the inbox – for a month or a % of users

Track two numbers: tickets resolved by the chatbot, and tickets still landing in the inbox

This way you can point to two metrics that directly affect both your cost side and your value side.
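The baselined approach above can be sketched in a few lines. This assumes you can export weekly ticket counts from your helpdesk. The function `deflection_summary`, its field names, and the sample counts are all made up for illustration.

```python
from statistics import mean


def deflection_summary(baseline_weekly_tickets, pilot_weeks):
    """Compare inbox volume before and during a chatbot pilot.

    baseline_weekly_tickets: inbox tickets per week before the chatbot
    pilot_weeks: one (resolved_by_chatbot, landed_in_inbox) pair per pilot week
    """
    if not baseline_weekly_tickets or not pilot_weeks:
        raise ValueError("need at least one baseline week and one pilot week")
    baseline_avg = mean(baseline_weekly_tickets)
    resolved = sum(r for r, _ in pilot_weeks)
    inbox = sum(i for _, i in pilot_weeks)
    pilot_inbox_avg = inbox / len(pilot_weeks)
    return {
        "baseline_inbox_per_week": baseline_avg,
        "pilot_inbox_per_week": pilot_inbox_avg,
        # Negative means fewer tickets reach the human inbox than before.
        "inbox_change": (pilot_inbox_avg - baseline_avg) / baseline_avg,
        # Share of all pilot tickets the chatbot closed on its own.
        "chatbot_resolution_share": resolved / (resolved + inbox),
    }


# Hypothetical counts: four baseline weeks, four pilot weeks.
summary = deflection_summary(
    baseline_weekly_tickets=[500, 520, 480, 500],
    pilot_weeks=[(120, 390), (130, 370), (110, 400), (140, 360)],
)
print(summary)
```

Both output numbers come straight from system logs. No agent had to be timed or watched to produce them.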

Example 2: Sales Proposals

Non-baselined approach:

AI is great for writing proposals

We rolled out a GPT-based draft tool to the sales team

Reps say "it saves us hours and the quality is better!"

The problem: Same as above. The effect dissolves into the day, and nothing changes in any system you can point to.

Baselined approach:

Pull your overall win rate for the last two quarters (the baseline)

Build an AI scoring tool that ranks incoming RFPs into "high" and "low" win probability

Run it in shadow mode for 3 months – reps still respond to everything, but the AI's prediction is logged

Then check: did the "high probability" bucket actually win more often than the "low probability" bucket?

If yes, you have a working filter. Now you can measure the real ROI: how many low-probability RFPs get filtered out, and how much time reps redirect to the high-probability ones. If it didn’t work, you've saved yourself from rolling out an expensive tool.
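The shadow-mode check boils down to comparing win rates per bucket. A minimal sketch, assuming each logged RFP carries the AI's predicted bucket and the final outcome. The log format, the function `win_rate_by_bucket`, and the sample data are hypothetical.

```python
from collections import defaultdict


def win_rate_by_bucket(shadow_log):
    """Return the win rate for each predicted bucket.

    shadow_log: iterable of (predicted_bucket, won) pairs, where
    predicted_bucket is "high" or "low" and won is True or False.
    """
    totals = defaultdict(int)
    wins = defaultdict(int)
    for bucket, won in shadow_log:
        totals[bucket] += 1
        wins[bucket] += int(won)
    return {bucket: wins[bucket] / totals[bucket] for bucket in totals}


# Hypothetical three months of shadow-mode data.
shadow_log = (
    [("high", True)] * 5 + [("high", False)] * 5
    + [("low", True)] * 1 + [("low", False)] * 9
)
rates = win_rate_by_bucket(shadow_log)
print(rates)
```

With only a few dozen RFPs per bucket, a gap like this can still be noise. Treat it as a signal to keep logging, not as proof.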

The Exception

Not every use case can (or should) be tracked with ROI.

What came up during our conversations: for some businesses, you just have to do AI to stay relevant. To stay in the game. If your entire market is being reshaped by AI right now, you might just have to move with it.

That's fine.

Just be honest that's the reason. Don't dress it up as a business case when it's actually a survival bet.

For everything else: build the baseline first. Build the AI second.

Conclusion

People measuring ROI aren’t smarter or better-funded.

They just wrote down a number before they built.

That’s the entire trick.

See you next Saturday!

Tobias

PS: To learn more about D2A2, explore our free reports here.

PPS: Baselining is just one piece of a larger system for finding AI that actually drives business impact. If you want it all, check out my book The Profitable AI Advantage.