Read time: 8 minutes

Hey there,

Today you'll learn how to integrate a lead scoring algorithm into your reporting dashboards.

Lead scoring is the process of assigning a numerical value (a score) to a lead that indicates how likely or ready the lead is to convert. These scores can be used to prioritize sales and marketing efforts.

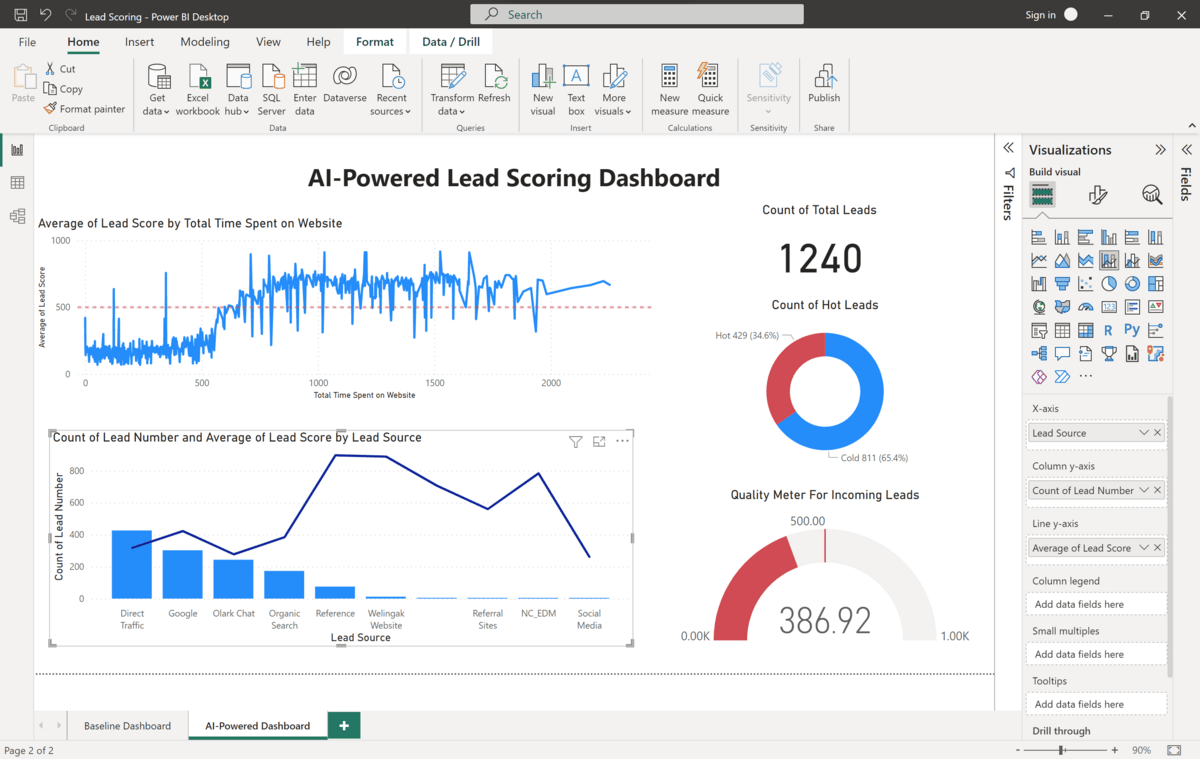

To create these scores, we will use AutoML and ultimately build a dashboard that looks like this:

Are you ready?

Let's go!


Problem Statement

Imagine we're business analysts for an education platform that sells online courses to industry professionals.

The platform acquires leads through various channels such as online ads, search engines, and referrals. When these people fill out a form and provide their email address or phone number, they're classified as a lead.

On average, about 40% of these people end up taking a paid course. That's our lead conversion rate.

To further increase this number, management came up with two strategies:

Improve conversion rates by flagging and following up on "hot leads"

Improve the overall quality of incoming leads by comparing marketing channels against a simple metric

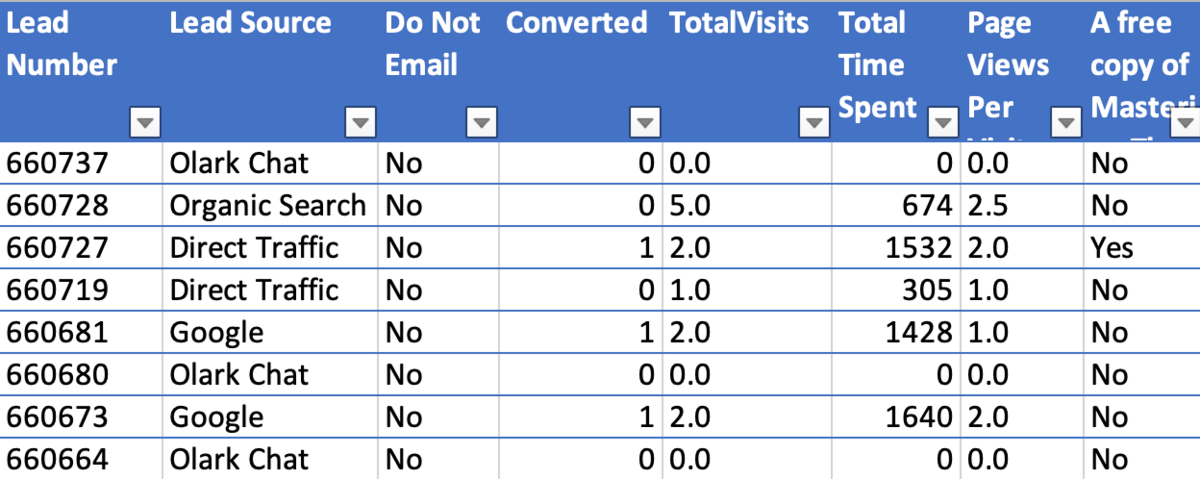

We are provided with a historical dataset which lists the various leads, their respective attributes such as the lead source or website usage data, and a label of whether or not that lead has converted in the past.

Here's a snapshot of what the data looks like:

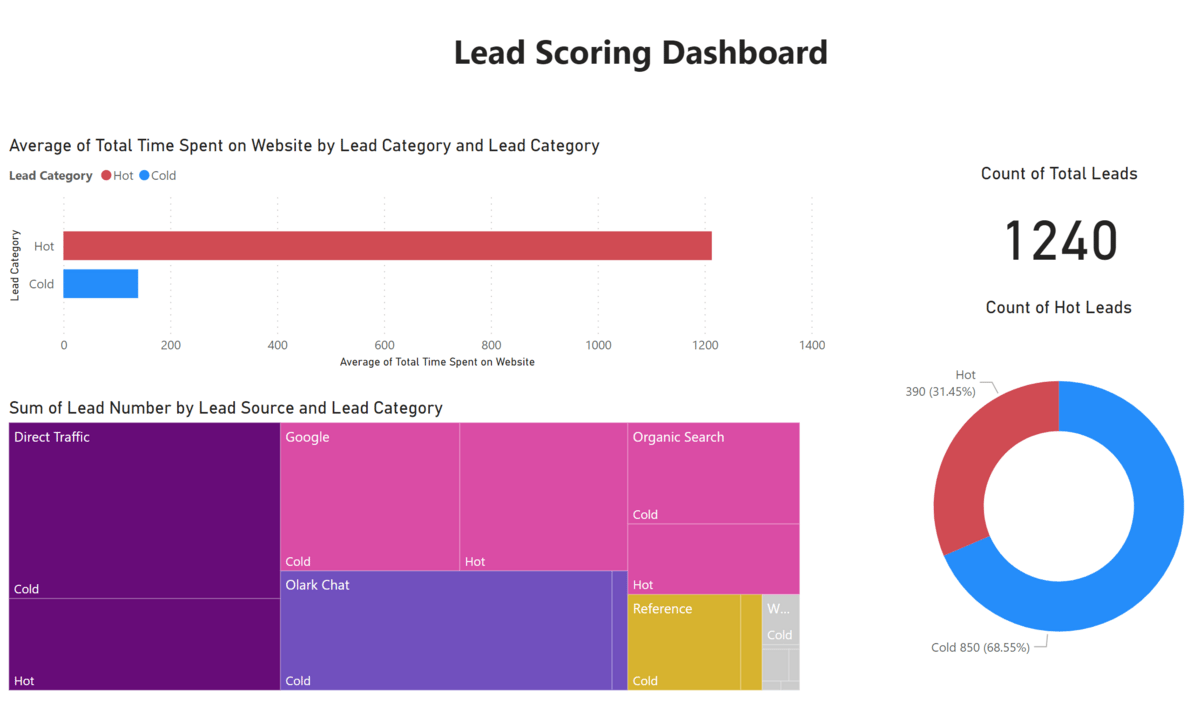

Right now, we have a dashboard in place that flags every lead who has spent more than 600 seconds on the website as a hot lead. (Let's say this number was set years ago in another data analysis.)

Here's what the current (old) dashboard looks like:

As you can see, this dashboard puts a lot of emphasis on the average time spent on the website by lead category (top left), as this is currently the only criterion used to define hot leads. We also see a breakdown of the different marketing channels and the percentage of hot and cold leads generated through those channels. It's pretty hard to draw any insights from this.

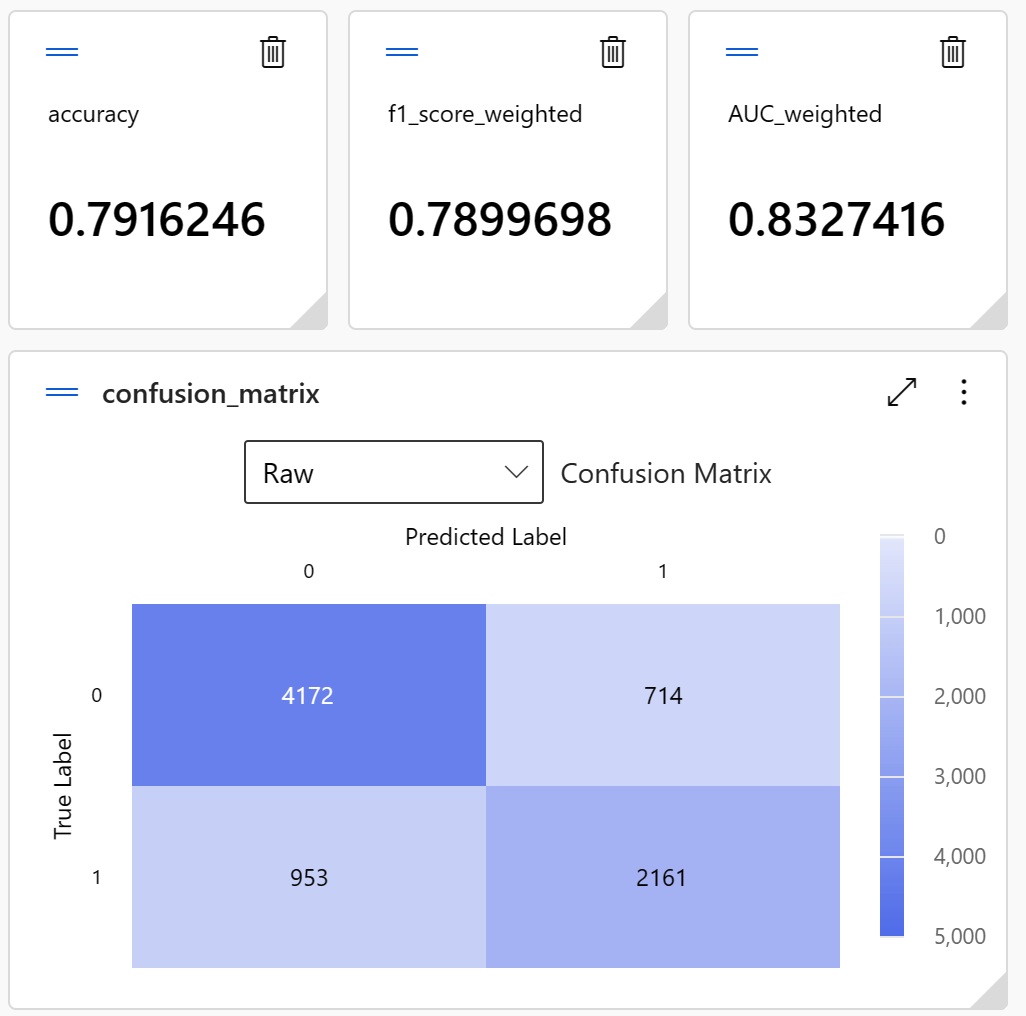

That being said, the current logic behind "hot leads" isn't really a good predictor of whether a lead will actually convert. The accuracy of this approach is 73% and the F1-score is only 62%.

These aren't particularly good values: since 60% of all leads don't convert, simply labeling every lead as "cold" would already give us 60% accuracy.
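To make these two numbers concrete, here's a minimal sketch of how accuracy and F1-score can be computed for a threshold rule like the 600-second one. The function names and the ten toy leads are made up for illustration; they are not the real dataset and won't reproduce the 73% and 62% above.

```python
def evaluate_rule(seconds_on_site, converted, threshold=600):
    """Score the rule 'hot lead = more than `threshold` seconds on site'
    against the known conversion outcomes."""
    predicted = [s > threshold for s in seconds_on_site]
    pairs = list(zip(predicted, converted))
    tp = sum(1 for p, c in pairs if p and c)          # flagged hot, did convert
    fp = sum(1 for p, c in pairs if p and not c)      # flagged hot, did not convert
    fn = sum(1 for p, c in pairs if not p and c)      # flagged cold, did convert
    tn = sum(1 for p, c in pairs if not p and not c)  # flagged cold, did not convert
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "f1": f1}


def always_cold_accuracy(converted):
    """Accuracy of the trivial rule that labels every lead as cold."""
    return sum(1 for c in converted if not c) / len(converted)


# Hypothetical toy data: seconds on site and whether the lead converted.
toy_seconds = [120, 700, 650, 90, 800, 300, 1000, 50, 400, 610]
toy_converted = [False, True, False, False, True, True, True, False, False, False]

toy_metrics = evaluate_rule(toy_seconds, toy_converted)
toy_floor = always_cold_accuracy(toy_converted)
```

Comparing `toy_metrics["accuracy"]` with `toy_floor` shows how little the rule adds over always guessing "cold".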

How can we do better?

Solution Overview

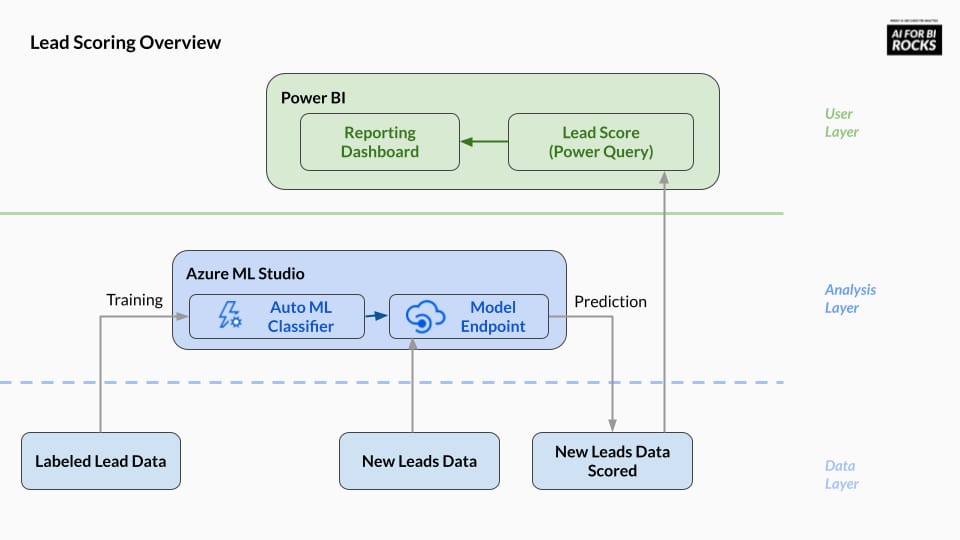

Here's a high-level overview of our planned solution:

Solution Walkthrough

Let's walk through this implementation step by step!

Data Layer

We have two datasets. One is a collection of historical leads with their respective attributes (source, time spent on website, etc.) as well as their label - a flag indicating whether they converted or not.

The other dataset is a collection of fresh leads. We don't know yet if they'll convert or not, but we know their attributes (source, time spent on website, etc.).

The historical data will be used to train our AutoML model. The new data - enriched with predictions from the AutoML service - will be reported in our BI tool.

The predictions are made outside our BI tool (batch prediction).

Analysis Layer

Our goal is to predict the variable "Converted" given all other attributes. This is a classification task, which we can solve using AutoML.

For example, we can use Azure ML Studio to create such a model:

Simply upload the historical data and select the following columns in the schema (the candidate features plus the target):

'Lead Source'

'Do Not Email'

'Converted'

'TotalVisits'

'Total Time Spent on Website'

'Page Views Per Visit'

'A free copy of Mastering The Interview'

'Lead Category'

The column 'Converted' is our target column which we want to predict.
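If you prepare the data in code before uploading it, the same schema could be sketched like this. The column names come from the list above; the function name and the sample row are hypothetical.

```python
# Candidate features, as listed above (everything except the target).
FEATURE_COLUMNS = [
    "Lead Source",
    "Do Not Email",
    "TotalVisits",
    "Total Time Spent on Website",
    "Page Views Per Visit",
    "A free copy of Mastering The Interview",
    "Lead Category",
]
TARGET_COLUMN = "Converted"


def split_features_target(rows):
    """Split a list of lead records (dicts) into features and target.

    Columns not named in FEATURE_COLUMNS are dropped; a missing column
    raises KeyError so schema problems surface early.
    """
    features = [{col: row[col] for col in FEATURE_COLUMNS} for row in rows]
    target = [row[TARGET_COLUMN] for row in rows]
    return features, target


# Hypothetical sample row, for illustration only.
sample_rows = [{
    "Lead Source": "Reference",
    "Do Not Email": "No",
    "Converted": 1,
    "TotalVisits": 4,
    "Total Time Spent on Website": 720,
    "Page Views Per Visit": 2.0,
    "A free copy of Mastering The Interview": "No",
    "Lead Category": "Hot",
    "Some Unused Column": "ignored",
}]
sample_features, sample_target = split_features_target(sample_rows)
```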

After a while, the AutoML job will find a voting ensemble model that gives the best predictive performance for this task.

As we can see from the model evaluation metrics, the performance of AutoML is much better than that of our baseline solution (62% F1-score):

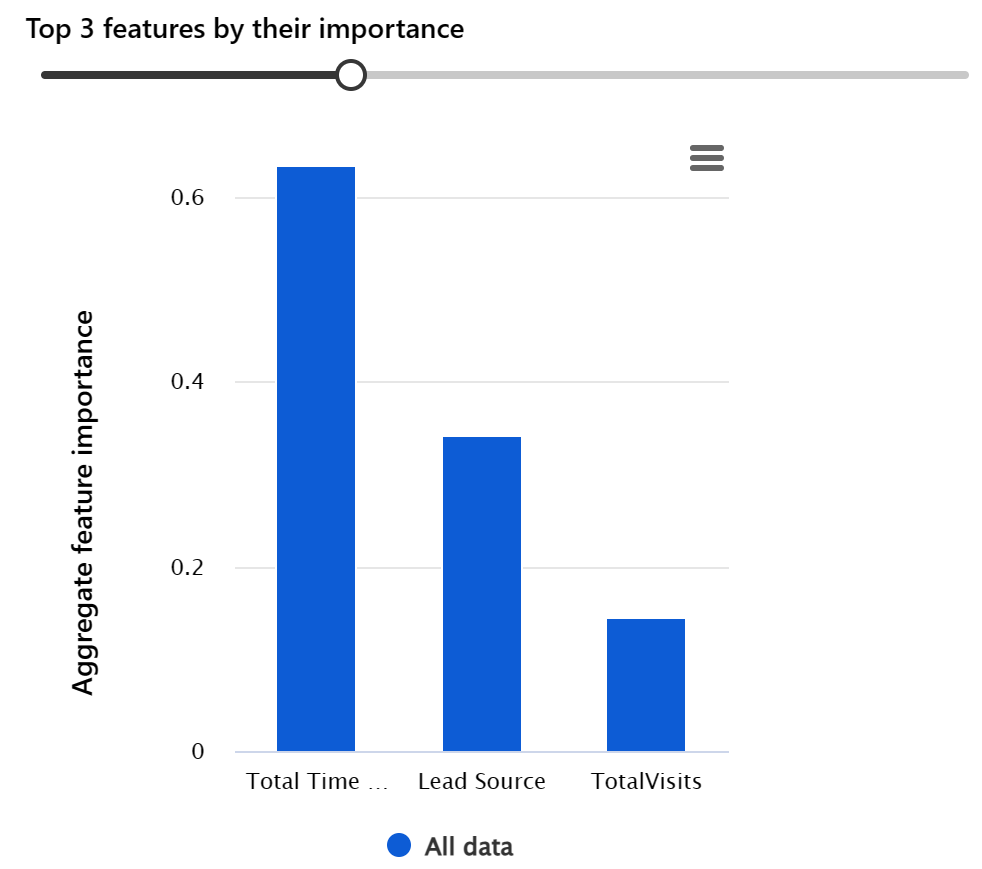

When we look at the explanation of the model, we see that the three main factors that influence the likelihood of lead conversion are 'Total Time Spent on Website', 'Lead Source' and 'TotalVisits'.

We'll track these three variables accordingly in our final dashboard to assess the quality of incoming leads.

In addition, the model predicts not only the class label, but also the class probability, which can be interpreted as the likelihood of converting a lead. This will come in handy in our report, as we'll see in a moment.

Now we can deploy this model and use it as an HTTP endpoint.

In this case, I opt for batch prediction, which means I simply send all new leads to the API at once using a small Python script, retrieve the results, and store them in a flat file (or SQL database, if that were our data source) along with the original data.
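A batch-prediction script along these lines might look like the sketch below. It is not the script used for this issue: the payload shape `{"data": [...]}`, the Bearer header, the assumption that the endpoint returns one probability per lead in order, and the `ConversionProbability` column name are all placeholders. Check the sample consumption code of your own deployed endpoint for the real request and response format.

```python
import csv
import json
import urllib.request


def make_http_sender(url, api_key):
    """Build a function that POSTs lead records as JSON and returns the parsed reply.

    ASSUMPTION: payload shape and auth header are illustrative, not a documented API.
    """
    def send(records):
        request = urllib.request.Request(
            url,
            data=json.dumps({"data": records}).encode("utf-8"),
            headers={
                "Content-Type": "application/json",
                "Authorization": f"Bearer {api_key}",
            },
            method="POST",
        )
        with urllib.request.urlopen(request) as response:
            return json.loads(response.read().decode("utf-8"))
    return send


def batch_predict(leads, send):
    """Send all leads in one call and attach the returned probability to each lead.

    ASSUMPTION: `send` returns a list with one conversion probability per lead,
    in the same order as the input.
    """
    probabilities = send(leads)
    if len(probabilities) != len(leads):
        raise ValueError("number of predictions does not match number of leads")
    return [
        {**lead, "ConversionProbability": probability}
        for lead, probability in zip(leads, probabilities)
    ]


def write_flat_file(rows, path):
    """Store the enriched leads as a CSV file for the BI tool to pick up."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)


# Usage (hypothetical URL and key, not executed here):
# send = make_http_sender("https://<your-endpoint>/score", "<your-api-key>")
# write_flat_file(batch_predict(new_leads, send), "scored_leads.csv")
```

Passing `send` in as a parameter keeps the HTTP details in one place and lets you try the rest of the script with a stand-in function before calling the real endpoint.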

Our data is now ready to be consumed by our BI tool.

User Layer

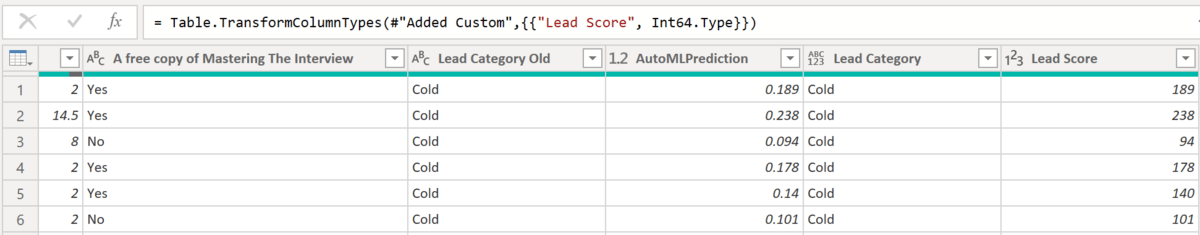

Since the AutoML model provides the lead conversion probabilities, we can easily use these values to create our lead scores.

In this case, I simply rounded the prediction probability to three digits and multiplied the value by 1,000 to get a score between 0 (lowest score) and 1,000 (highest score) per lead.

Here's how that looks in Power Query:

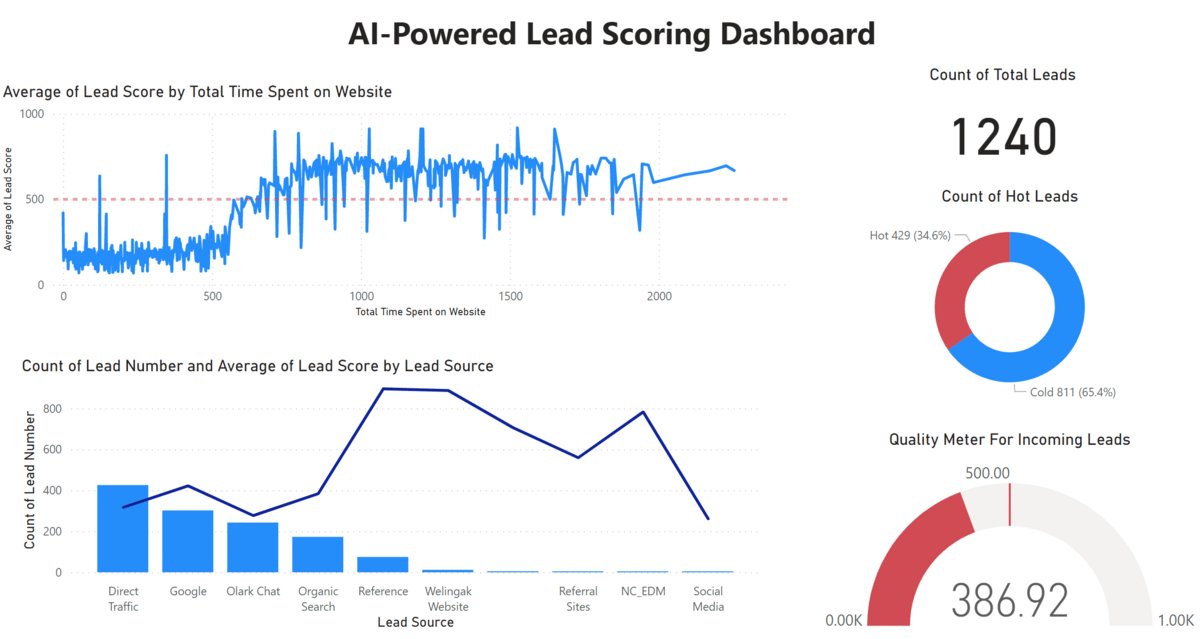
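If you'd rather compute the score in the Python script before the data reaches the BI tool, the same transformation could be sketched like this (the function name is my own choice):

```python
def lead_score(probability):
    """Turn a conversion probability into a lead score from 0 to 1,000.

    Mirrors the steps described above: round to three decimals,
    then multiply by 1,000.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    # The outer round() guards against floating-point noise such as 386.99999.
    return int(round(round(probability, 3) * 1000))
```

For example, a predicted probability of 0.38692 becomes a score of 387.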

With this granular scoring data we can now take our dashboard to the next level and create something like this:

There are four components in total - let's briefly run through them!

Total Count

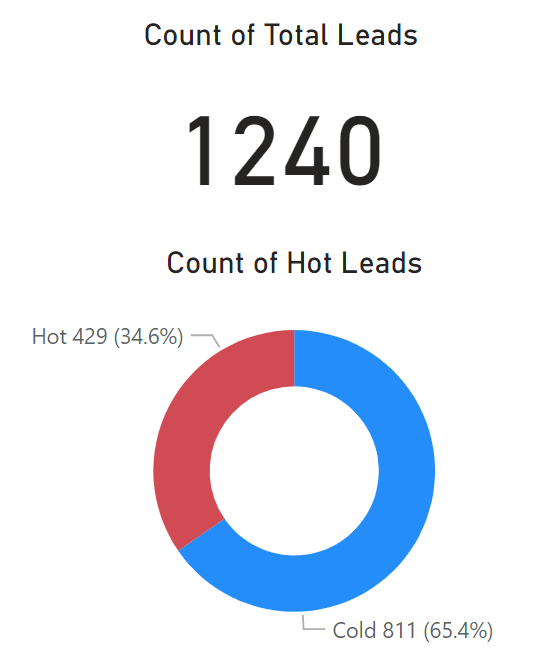

This visual shows the total number of leads (the same number as in the old dashboard) as well as the number of "hot leads" (this number has changed).

Instead of using the arbitrary value of 600 seconds spent on the website to define "hot leads", I classified all leads with a lead score of more than 500 as "hot leads".

As a result, we now get 429 leads that we should follow up on (34.6% of all leads). Originally, this number was slightly lower.
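The new rule is easy to express in code. Here's a minimal sketch, assuming the lead scores are available as a plain list of numbers; the function name and the sample scores are hypothetical.

```python
HOT_LEAD_THRESHOLD = 500  # a lead is "hot" when its score is MORE than 500


def summarize_hot_leads(scores, threshold=HOT_LEAD_THRESHOLD):
    """Count all leads and hot leads, and compute the share of hot leads."""
    hot = sum(1 for score in scores if score > threshold)
    share = hot / len(scores) if scores else 0.0
    return {"total": len(scores), "hot": hot, "hot_share": share}


# Hypothetical scores, for illustration only.
toy_summary = summarize_hot_leads([120, 500, 501, 870, 300])
```

Note that a score of exactly 500 does not count as hot under this rule.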

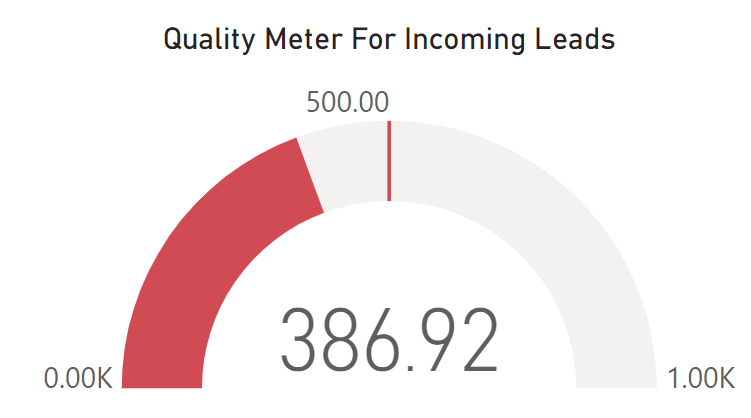

Quality Check 1: Quality Meter

We can use the average lead score across all leads to track the overall quality of incoming leads.

As the chart here shows, we currently have an average lead score of 386.92. There is room for improvement, and a first step could be to aim for an average lead score of 500+ (a 50% chance of lead conversion). This goal is indicated in the performance chart below:

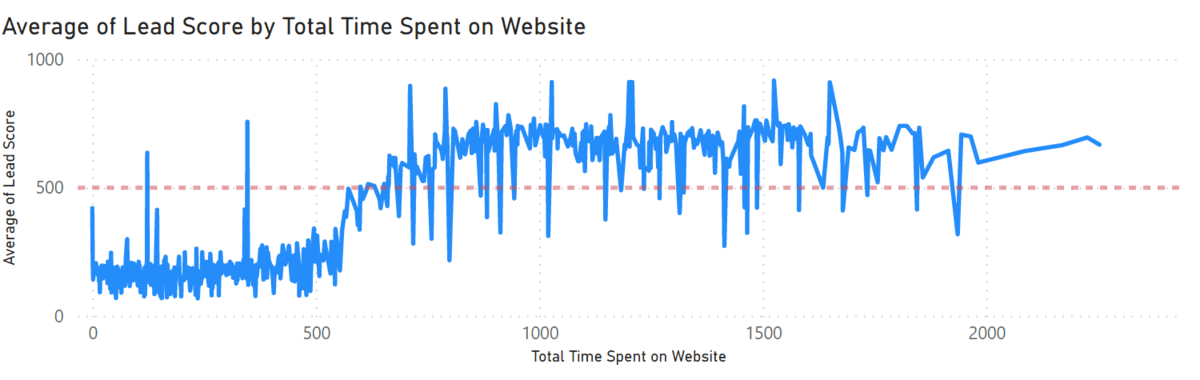
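The number behind such a gauge is just an average compared against a target. A minimal sketch, with a hypothetical function name and made-up scores:

```python
def quality_meter(scores, target=500):
    """Average lead score and the remaining gap to the target."""
    if not scores:
        raise ValueError("need at least one lead score")
    average = sum(scores) / len(scores)
    return {
        "average": round(average, 2),
        "target": target,
        "gap_to_target": round(target - average, 2),
    }


# Hypothetical scores, for illustration only.
toy_meter = quality_meter([200, 400, 600])
```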

Quality Check 2: Time Spent on Website

As we learned from the model explanation, the variable 'Total Time Spent on Website' had the greatest impact on lead conversion probability.

The chart below compares 'Total Time Spent on Website' against the average lead score. We can see that the 500 mark (dashed red line) is reached at around 650 seconds spent on the website.

After that, the average lead score doesn't increase significantly. So our goal should be to get website visitors past roughly the 11-minute mark (about 650 seconds) by offering them engaging content, which makes them considerably more likely to become paying customers.
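A chart like this boils down to grouping leads into time buckets and averaging the score per bucket. Here's a minimal sketch under my own assumptions: a bucket width of 50 seconds, leads given as `(seconds, score)` pairs, and hypothetical function names and data.

```python
from collections import defaultdict


def average_score_by_time_bin(leads, bin_seconds=50):
    """Group (seconds_on_site, score) pairs into fixed-width time bins.

    Returns {bin_start_in_seconds: average_score}, ordered by bin start.
    """
    buckets = defaultdict(list)
    for seconds, score in leads:
        bin_start = int(seconds // bin_seconds) * bin_seconds
        buckets[bin_start].append(score)
    return {
        start: sum(scores) / len(scores)
        for start, scores in sorted(buckets.items())
    }


def first_bin_reaching(averages, target=500):
    """Start of the first time bin whose average score reaches the target."""
    for start, average in averages.items():
        if average >= target:
            return start
    return None


# Hypothetical data, for illustration only.
toy_leads = [(10, 100), (40, 200), (60, 400), (120, 600), (130, 500)]
toy_averages = average_score_by_time_bin(toy_leads)
toy_crossing = first_bin_reaching(toy_averages)
```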

Quality Check 3: Traffic Sources

The second most important feature was the traffic source ('Lead Source').

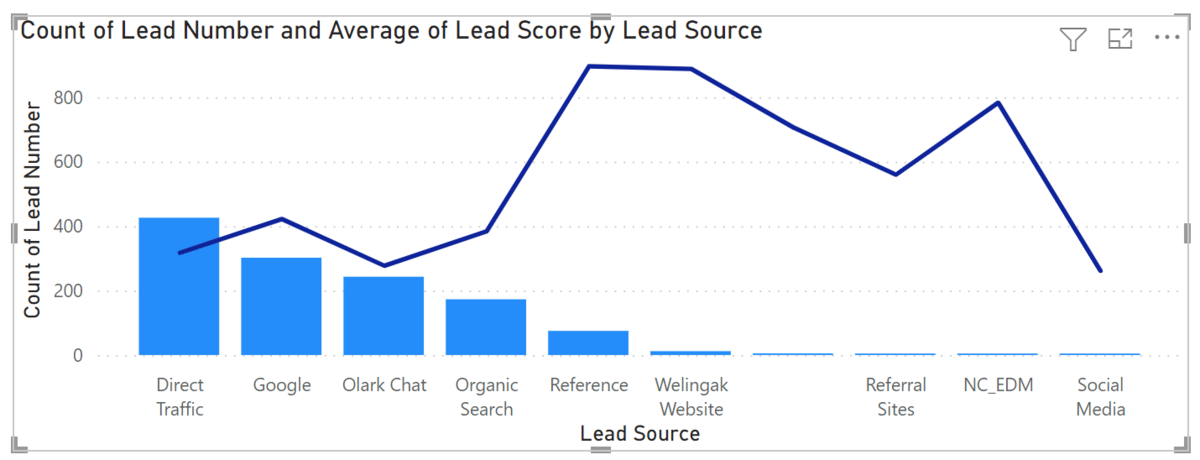

The chart below compares the absolute number of leads per traffic source (bar chart) to the average lead score for that channel (line chart).

As we can see, the channels that bring the highest traffic are also the ones that bring the lowest quality leads - a phenomenon that isn't uncommon.

However, in this case we can see that the 'Reference' channel generates a significant amount of traffic while delivering the leads with the highest average score. We should try to get more traffic from this source.

It's also worth investigating how the quality of leads behaves when we get more traffic from social media, which is still underrepresented at this point.
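The data behind this combined bar and line chart is a simple aggregation per channel. A minimal sketch, assuming leads are given as `(lead_source, score)` pairs; the function name and the sample values (including the 'Ads' channel) are hypothetical.

```python
from collections import defaultdict


def summarize_channels(leads):
    """Lead count (bars) and average lead score (line) per lead source.

    Rows are sorted by lead count, highest first.
    """
    scores_by_source = defaultdict(list)
    for source, score in leads:
        scores_by_source[source].append(score)
    rows = [
        {"source": source, "leads": len(scores), "avg_score": sum(scores) / len(scores)}
        for source, scores in scores_by_source.items()
    ]
    return sorted(rows, key=lambda row: row["leads"], reverse=True)


# Hypothetical data, for illustration only.
toy_channels = summarize_channels([
    ("Ads", 300), ("Ads", 400), ("Reference", 900), ("Ads", 200),
])
```

Sorting by volume makes it easy to spot channels where high traffic and low average score go together.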

We could add another chart showing the third most important feature, 'TotalVisits', but I'll leave that as an exercise for you.

Conclusion

There you go!

We've built an AI-powered lead scoring dashboard that clearly informs our stakeholders about the quality of incoming leads and enables them to follow up on the 'really' hot leads.

I hope this inspires and encourages you to try it out for yourself. You can find all the practice material in the resources below.

How did you like today's use case? Hit reply and let me know! I look forward to receiving and responding to your feedback!

See you again next Friday!

Best,

Tobias

Resources

Whenever you're ready, here are 2 ways I can help:

If you want to further improve your AI/ML skills to build better reports and dashboards, check out my book AI-Powered Business Intelligence (O'Reilly).

If you want to pick my brain for a project or an idea you're working on, book a coffee chat with me so we can discuss more details.