Read time: 5 minutes

Hey there,

Large language models (LLMs), such as GPT-4, work great for a variety of use cases out of the box, but sometimes they are just not specific enough.

Today, I'll introduce you to two important concepts: fine-tuning and few-shot learning. Both allow you to customize an LLM to your needs. We'll cover their advantages and disadvantages, and when to use them.

By the end of this post, you'll have a good understanding of both techniques and how you can use them to fit your specific needs, even if you're just getting started with LLMs.

Let's dive in!

Wait, I Can Customize GPT-4?

Short answer: Yes! You can customize the behavior and knowledge of a model like GPT-4 for your own use case. However, many people are afraid to do so because they believe they:

Don't have enough computing power

Don't have adequate skills

Don't have enough data

While the process does require some technical knowledge and resources, the barriers to customizing a large language model like GPT-4 are lower than you might think. Once you understand this, you can unlock many exciting use cases.

Let's start with the first technique.

Few-shot Learning: Maximum Output With Minimal Data

Few-shot learning is an approach that allows models to generalize from just a few examples ("few shots").

LLMs like GPT-4 are already pretrained on huge amounts of text covering a wide range of tasks, which means few-shot learning might work better than expected.

To implement few-shot learning, you provide high-quality examples of the task you want the model to perform directly in the prompt.

The model will then extrapolate from these examples to solve the problem at hand.

How it works:

Provide a system message or base prompt that describes the task.

Give a few high-quality examples ("shots") that illustrate the desired input-output pattern.

Use the model to generate outputs based on the provided examples and instructions.
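Here is a minimal sketch of these three steps in Python. It only assembles the prompt and does not call any API. The helper name `build_few_shot_messages` and the chat-style message layout (a list of dictionaries with `role` and `content`) are my own assumptions for illustration, so adapt them to whatever your provider expects.

```python
def build_few_shot_messages(task_description, examples, new_input):
    """Assemble a few-shot prompt: task description, example pairs, then the new input."""
    # Step 1: the system message describes the task.
    messages = [{"role": "system", "content": task_description}]
    # Step 2: each "shot" is an input followed by the desired output.
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # Step 3: the new input that the model should answer in the same pattern.
    messages.append({"role": "user", "content": new_input})
    return messages


# Hypothetical example data, made up for this sketch.
examples = [
    ("The delivery was fast and the product works great.", "positive"),
    ("The item broke after two days.", "negative"),
]
messages = build_few_shot_messages(
    "Classify the sentiment of the customer review as positive or negative.",
    examples,
    "Support never answered my emails.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

You would then send `messages` to the chat endpoint of your choice. Swapping in different examples is all it takes to adapt the same model to a different task.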

Examples

If you want the model to recognize the sentiment of customer reviews, provide it with a few positive and negative reviews and their corresponding labels in the prompt.

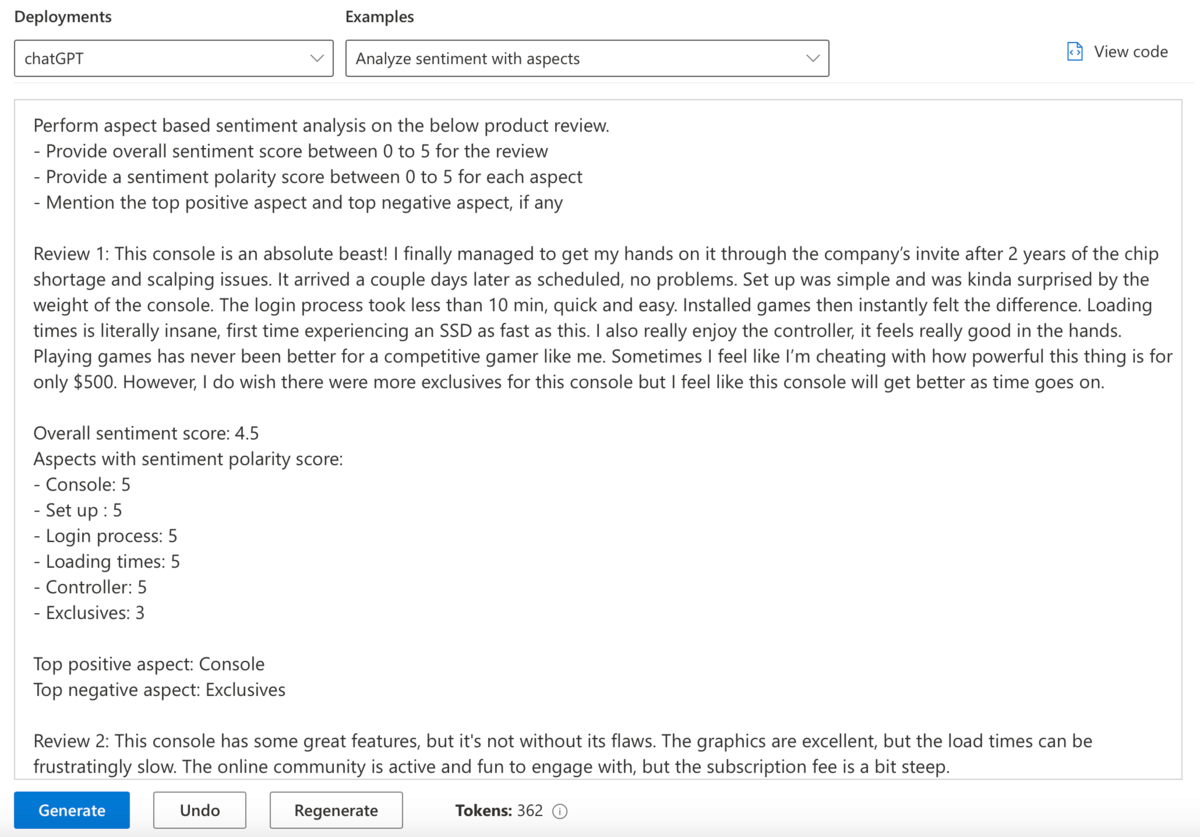

Alternatively, you can use more advanced and detailed prompts, as demonstrated in this example from the Azure OpenAI Playground:

Advanced prompt for few-shot learning in Azure OpenAI Studio

Pros:

Low data requirements: Few-shot learning can be effective with only a few high-quality examples, which is useful when you don't have much training data.

Faster adaptation: This method can be set up quickly because it does not require a lengthy training process.

Versatility: The same model can be adapted to different tasks by providing different examples, making it highly flexible for various use cases.

Cons:

Limited customization: The model's behavior is dictated by the provided examples and may not always produce the desired results.

Context constraints: Every few-shot example in the base prompt will count against your context limit. For example, if your maximum context length is 8,000 tokens and you use 4,000 tokens for the few-shot examples, you have just 50% of your total context capacity left.
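To make the context budget concrete, here is a small sketch of the arithmetic from the example above. The function name `remaining_context` is my own, and in practice you would measure the token counts with your model's tokenizer instead of hard-coding them.

```python
def remaining_context(max_context_tokens, few_shot_tokens):
    """Return the tokens left after the few-shot examples and their share of the total."""
    remaining = max_context_tokens - few_shot_tokens
    if remaining < 0:
        raise ValueError("The few-shot examples alone exceed the context limit.")
    return remaining, remaining / max_context_tokens


# Numbers from the example above: 8,000 tokens total, 4,000 used by the examples.
remaining, share = remaining_context(8000, 4000)
print(f"{remaining} tokens left ({share:.0%} of the context)")
```

Keep in mind that the remaining budget has to cover both your actual input and the model's answer.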

Takeaway: Few-shot learning can be effective with only a small number of high-quality examples. This makes it useful in situations where there isn't much training data or the label predictions have low complexity (such as sentiment analysis with only positive or negative labels).

Fine-tuning: Teach Your Model New Tricks With New Data

Fine-tuning is a process that involves adjusting a pretrained model's weights to better fit your specific data or task. This is different from few-shot learning, because it actually produces a new version of the model trained on your custom data.

Technically, you typically fine-tune a model by providing a custom training dataset to a fine-tuning API. This is usually done online, but you can also fine-tune an LLM on your own premises if the pretrained model weights are openly available. That's a big deal for data security, since your training data would never leave your company in this case.

How it works:

Select a pretrained base model that you want to fine-tune (e.g. OpenAI’s davinci).

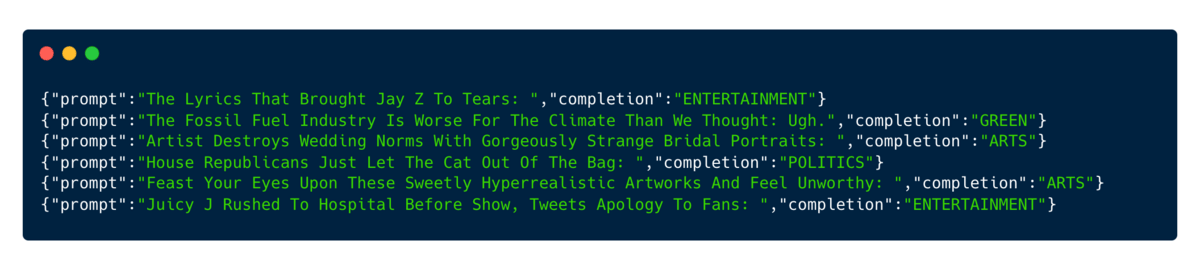

Provide high-quality training data for fine-tuning, ideally in JSONL format or as a CSV file with columns for "prompt" and "completion" (here's a tutorial for Azure OpenAI Studio).

Provide a validation set containing new examples not present in the training data.

Fine-tune the model using the provided data and review the validation metrics.

Deploy your fine-tuned model and use it in your applications.
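The data preparation part of these steps can be sketched in plain Python. This is just one way to do it: the helper names `split_examples` and `to_jsonl`, the 20% validation share, and the fixed random seed are my own assumptions, and the upload and training calls depend on the fine-tuning API you use, so they are left out here.

```python
import json
import random


def split_examples(examples, validation_fraction=0.2, seed=42):
    """Shuffle the examples and hold out a share of them for validation."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_validation = max(1, int(len(shuffled) * validation_fraction))
    # The validation examples never appear in the training set.
    return shuffled[n_validation:], shuffled[:n_validation]


def to_jsonl(examples):
    """Serialize the examples as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(example, ensure_ascii=False) for example in examples) + "\n"


# Placeholder records with the "prompt" and "completion" fields mentioned above.
examples = [
    {"prompt": f"Example input {i}", "completion": f"Example output {i}"}
    for i in range(10)
]
train, validation = split_examples(examples)
train_jsonl = to_jsonl(train)
validation_jsonl = to_jsonl(validation)
print(f"{len(train)} training examples, {len(validation)} validation examples")
print(train_jsonl.splitlines()[0])
```

Write `train_jsonl` and `validation_jsonl` to two files and you have the training and validation sets for the steps above.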

Examples

Let's say you run a news outlet and want to classify news articles automatically based on your custom topic taxonomy. In this case, you could provide a set of existing articles as the prompts and their respective news categories as the completions.
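As a rough sketch, the records for this news example could be built like this. The articles, the category names, and the helper `to_training_record` are invented for illustration, and your own taxonomy and the exact record format your fine-tuning API expects may differ.

```python
def to_training_record(article_text, category):
    """Turn one labeled article into a prompt/completion pair."""
    return {"prompt": article_text.strip(), "completion": category.strip().lower()}


# Hypothetical articles and categories, not real data.
labeled_articles = [
    ("The central bank raised interest rates for the third time this year.", "Economy"),
    ("The home team won the championship after a dramatic penalty shootout.", "Sports"),
    ("Researchers presented a new battery that charges in under five minutes.", "Technology"),
]
records = [to_training_record(text, category) for text, category in labeled_articles]
for record in records:
    print(record)
```

Normalizing the labels (here: stripping whitespace and lowercasing) helps the model see each category written exactly one way.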

Generally, the more high-quality examples you can provide, the better.

Here are some additional real-life examples where fine-tuning could be useful:

A legal firm could fine-tune a model to better understand legal jargon and draft contracts.

A marketing agency could fine-tune the model to generate promotional content in their unique brand voice.

A customer service center could fine-tune the model to better understand customer inquiries and provide accurate responses to frequently asked questions.

Pros:

Improved performance: Fine-tuning can lead to higher quality results compared to few-shot learning.

More capabilities: With fine-tuning, you can handle more complex tasks that would not fit into a single prompt.

Lower latency: A fine-tuned model will usually respond faster than a model with few-shot examples in the prompt, because the prompt it has to process is shorter.

Cons:

Cost: Fine-tuning can be expensive. For example, using a custom fine-tuned Davinci model is six times more expensive than using the out-of-the-box model.

High data requirements: Fine-tuning requires large amounts of high-quality training data, which can be difficult to get for some use cases.

Longer training time: The fine-tuning process can take a long time, especially for complex models like GPT-4.

Overfitting: Fine-tuning can lead to overfitting if the model is not validated correctly, leading to poor performance in the wild.
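One simple way to spot overfitting is to compare the training and validation loss after each epoch. The sketch below shows the idea under my own assumptions: the helper names `best_epoch` and `looks_overfit` are hypothetical, the loss values are invented, and real fine-tuning services report their metrics in their own formats.

```python
def best_epoch(validation_losses):
    """Return the index of the epoch with the lowest validation loss."""
    return min(range(len(validation_losses)), key=validation_losses.__getitem__)


def looks_overfit(training_losses, validation_losses):
    """Flag runs where training loss keeps falling after validation loss has turned upward."""
    best = best_epoch(validation_losses)
    last = len(validation_losses) - 1
    return (
        best < last
        and training_losses[last] < training_losses[best]
        and validation_losses[last] > validation_losses[best]
    )


# Invented loss values for illustration only.
training_losses = [1.20, 0.80, 0.55, 0.40, 0.30]
validation_losses = [1.25, 0.90, 0.75, 0.80, 0.95]

print("Best epoch (0-based):", best_epoch(validation_losses))
print("Looks overfit:", looks_overfit(training_losses, validation_losses))
```

If the check fires, training for fewer epochs or adding more varied training data are common things to try.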

Takeaway: Fine-tuning can provide better results tailored to your needs, but it requires high-quality training data and can become expensive quickly.

Conclusion

Now that you're more familiar with both few-shot learning and fine-tuning, you should be better equipped to tailor LLMs to your needs and bring your own use cases to life.

Remember, few-shot learning offers a low-data, versatile solution, while fine-tuning provides improved performance and specialization at the cost of higher data requirements and computational resources.

Weigh the pros and cons of each approach to find the one that's best suited for your use case.

Try to get hands-on and experiment with the technology.

Currently, that's the best way to learn it.

Thanks for reading today's newsletter.

As always, do not hesitate to hit reply and share your thoughts.

I’d love to hear from you!

See you next Friday!

Best,

Tobias