Hi there,

If you want to do things like AI-powered chatbots, search, or recommendations in your business, you need an AI that knows your business.

But out-of-the-box systems like ChatGPT (fortunately) don't have your internal data. So what do you do?

The good news is: You don't have to start from scratch, and you don't even need a deep technical understanding to make it work.

Let's explore the key strategies for adapting AI to your business.

Custom AI isn't about building from the ground up

By the way, when I talk about "AI" today, I'm talking about Generative AI. For the other AI archetypes, such as classical supervised machine learning, computer vision, natural language processing, or audio/speech, there are other methods.

But customizing Generative AI services turns out to be the easiest of all.

Why is that?

Because the behavior and knowledge of Generative AI models, especially large language models like GPT-4 or Google's Gemini, can be heavily influenced by what we put in the prompt - literally just some text that tells the model what to do.

That's why you can leverage all the knowledge that the AI already has - and add your existing business knowledge on top of that.

There are two main ways to do that, plus a combination of both:

Option 1: Tailoring with Context

The most straightforward way to "inject" your business knowledge into the AI is directly using the prompt. Imagine a chat where, before typing your actual question, you would first provide all the relevant context about your business in the chat message. In practice, you don't do this in the message, but in what's called the system prompt - a fixed set of instructions that always stays on top of the conversation you're having with the AI.
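If you're curious what that looks like under the hood, here's a minimal sketch. It assumes the role-based message format that most chat-style LLM APIs use (a "system" message followed by "user" messages). The bike store text and the `build_messages` helper are made up for illustration, and nothing is actually sent to a model here.

```python
# Hypothetical business context, for illustration only.
BUSINESS_CONTEXT = """You are the support assistant for a local bike store.
Opening hours: Mon-Sat, 9am-6pm.
Only answer from the information above. If you don't know, say so."""


def build_messages(system_prompt: str, user_question: str) -> list[dict]:
    """Return a chat-style message list with the system prompt always on top."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_question},
    ]


messages = build_messages(BUSINESS_CONTEXT, "When are you open on Saturday?")
for message in messages:
    # Print a short preview of each message to show the ordering.
    print(f"{message['role']}: {message['content'][:60]}")
```

The point is simply that the business knowledge travels with every request, ahead of whatever the user types.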

The system prompt, the user query, and the AI's response together make up the context window. It's basically the amount of information the AI can process at one time - similar to your computer's RAM.

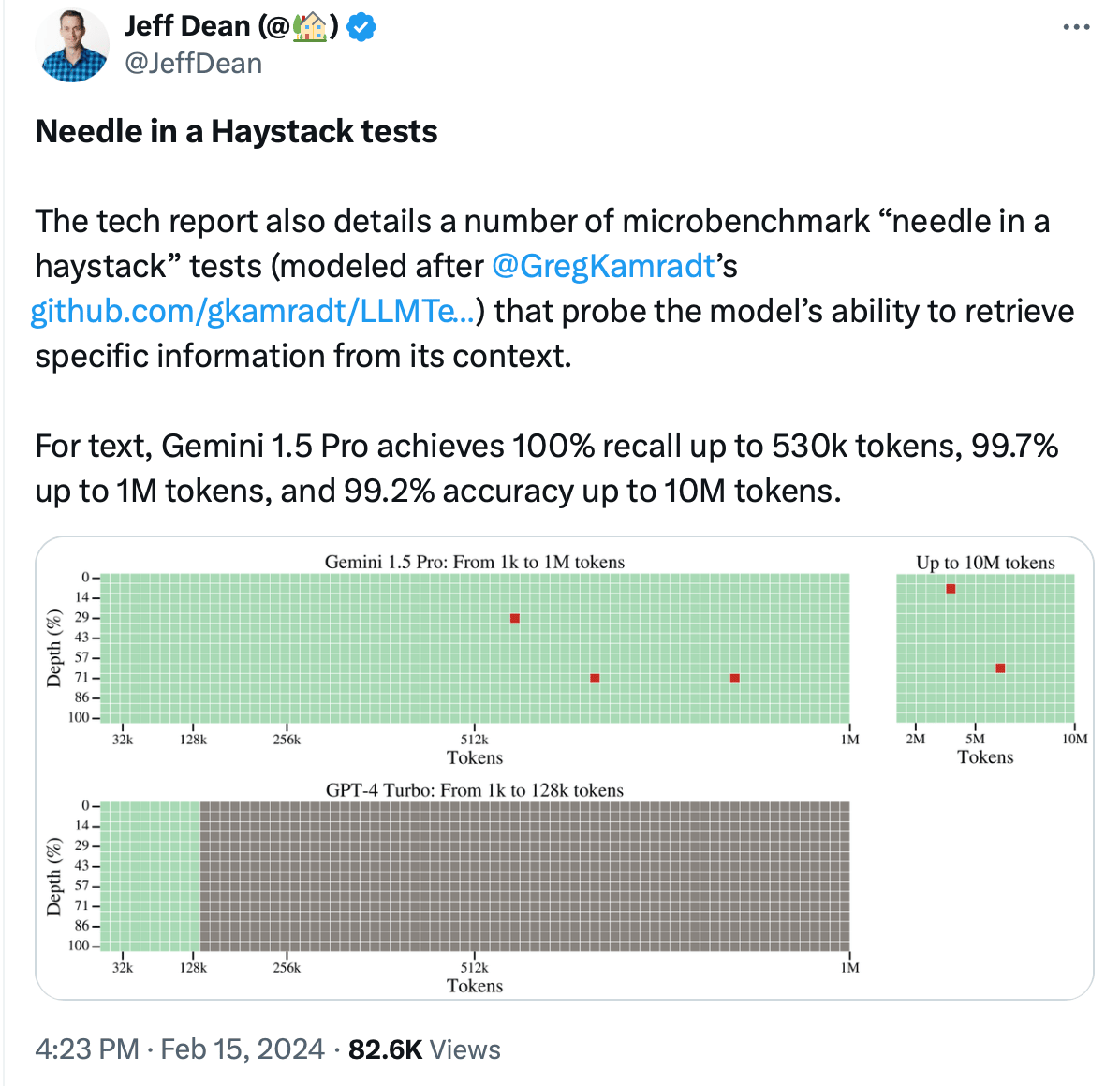

Modern AI systems have increasingly large context windows:

GPT-3.5: around 30 pages of text

GPT-4 Turbo: around 300 pages of text

Gemini 1.5: around 2,500 pages of text

And context windows aren't just getting longer; models are also getting more accurate at using what's in them.

The advantage of this context window is that any information you enter here is directly accessible to the AI, and you can control exactly what and how that information is brought in.

On the negative side, putting all your knowledge into your query is (obviously) limited by the context window, and it will also significantly increase your cost per message, since you will always be sending the full instructions to your model - with every message. To give you a ballpark figure, a GPT-4 Turbo query that fills the entire 128k-token context window can easily cost ~$1 per chat message.
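To see where a figure like that comes from, here's a back-of-the-envelope sketch. The price of $10 per million input tokens is an assumption for illustration only (prices change often, so check your provider's current price list), and the calculation ignores the cost of the model's output.

```python
def estimate_input_cost(prompt_tokens: int, usd_per_million_tokens: float) -> float:
    """Cost in USD of sending `prompt_tokens` input tokens once."""
    return prompt_tokens / 1_000_000 * usd_per_million_tokens


# Assumed values for illustration; not an official price.
FULL_CONTEXT_TOKENS = 128_000
ASSUMED_USD_PER_MILLION = 10.0

cost_per_message = estimate_input_cost(FULL_CONTEXT_TOKENS, ASSUMED_USD_PER_MILLION)
print(f"Cost per message: ${cost_per_message:.2f}")
print(f"Cost for 1,000 messages: ${cost_per_message * 1000:,.2f}")
```

Under these assumptions a single full-context message lands a little above $1, and the bill scales linearly with the number of messages.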

Besides cost, performance is also affected. The more information you put into the prompt, the longer it will take for the AI to get back to you. Gemini 1.5 takes about 30 seconds to come back with an answer if you use the full context.

So if you want to stuff the query with your business knowledge, you either need to find a way to limit the amount of information, or find a use case where $1 per query looks cheap.

Example

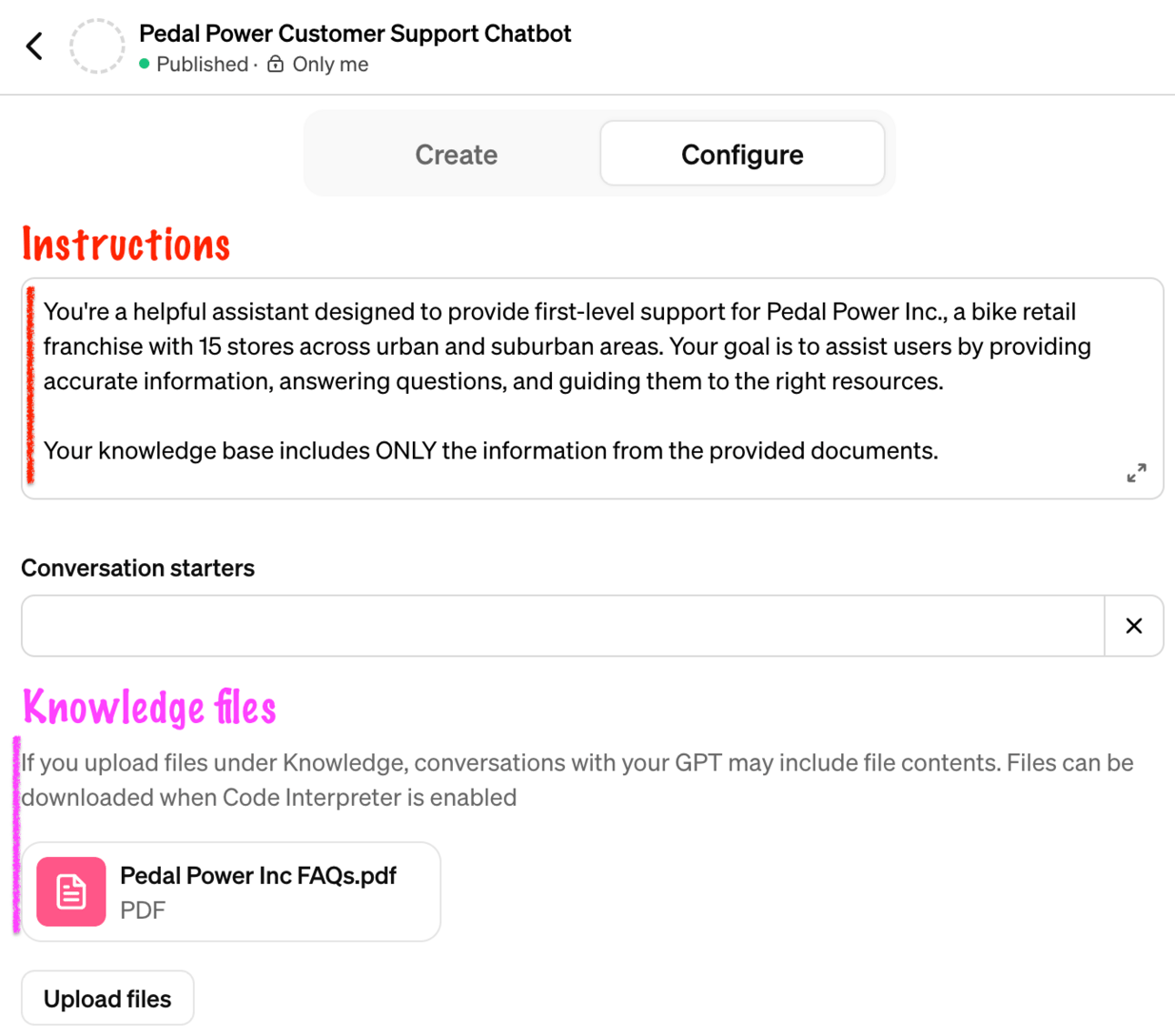

Let's say we're building a customer support chatbot for a local bike store. We could include all of our customer FAQs (and maybe even our entire help desk manual) in the instructions. That way, our chatbot would be able to answer a lot of customer questions right out of the box, with zero overhead.

Here's an example of what this would look like using a custom GPT in ChatGPT:

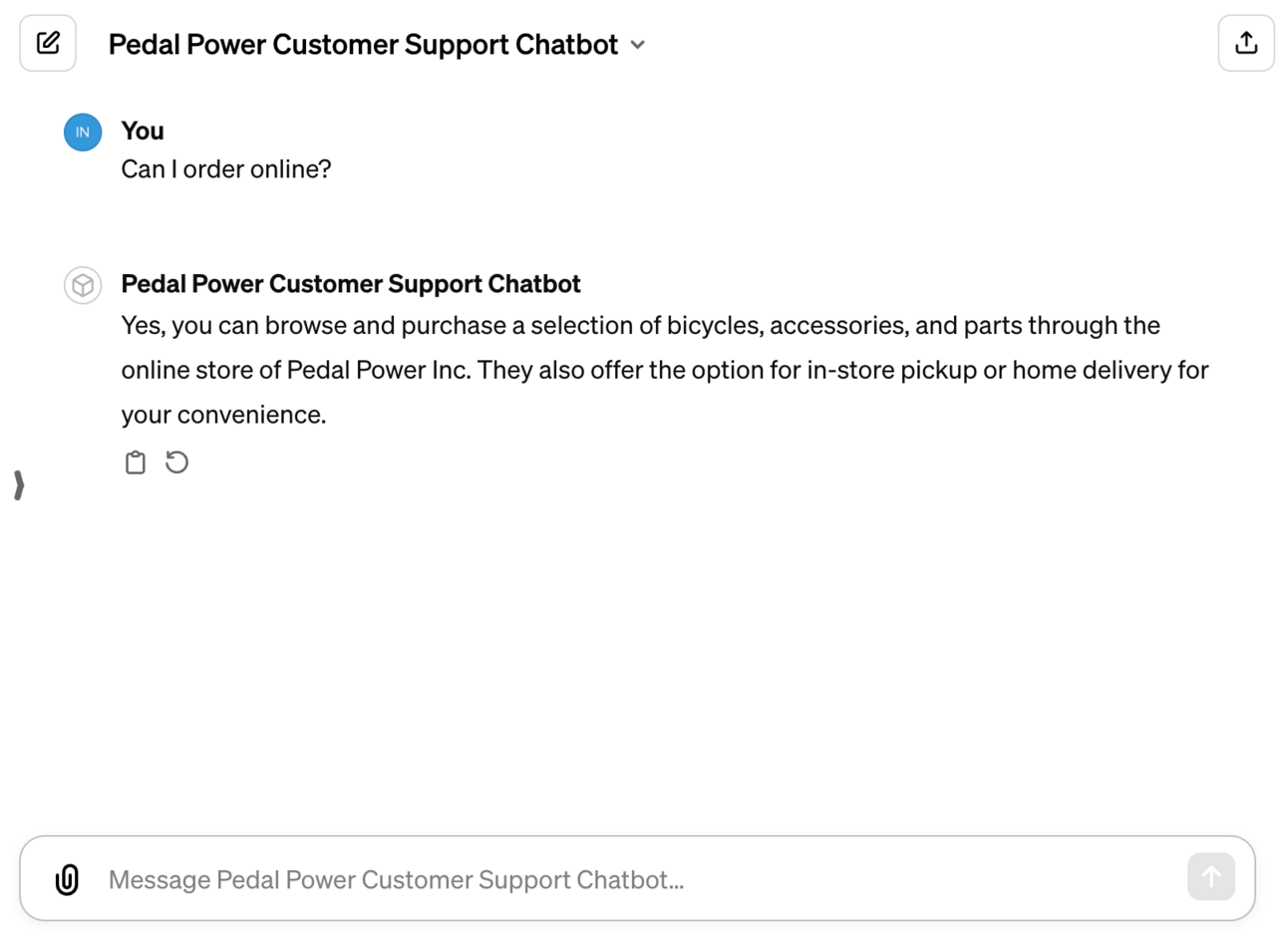

And here's what the response would look like:

As you can see, the chatbot can answer questions it knows about, but for other questions, it will say it doesn't know the answer.

Option 2: Retrieval-Augmented Generation (RAG)

Spoiler: RAG is basically the same as option 1, but with a trick.

The trick is that the information in the context isn't static, but is brought in dynamically based on the user's query.

Think of it like a librarian who, given a user query, goes out and fetches the most relevant books, reads the relevant sections, and gives you an answer based on that - in real time.
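Here's a toy sketch of the librarian idea. It picks the document that shares the most words with the question and puts only that document into the prompt. The documents and function names are hypothetical, and the word-overlap scoring is a deliberately simple stand-in: real RAG systems typically use embeddings and a vector database for the "fetching" step.

```python
import re

# Hypothetical knowledge base: in a real system this would live in a
# document store or vector database, not in a Python dict.
DOCUMENTS = {
    "opening-hours.md": "Our store is open Monday to Saturday from 9am to 6pm.",
    "repairs.md": "We repair flat tires and brakes, usually within two days.",
    "returns.md": "Unused bikes can be returned within 30 days with a receipt.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: dict[str, str]) -> tuple[str, str]:
    """Return (name, text) of the document sharing the most words with the question."""
    question_words = tokenize(question)
    best_name = max(
        documents,
        key=lambda name: len(question_words & tokenize(documents[name])),
    )
    return best_name, documents[best_name]


def build_prompt(question: str, documents: dict[str, str]) -> str:
    """Fetch the best-matching document and place it in the prompt."""
    name, text = retrieve(question, documents)
    return f"Answer using only this context from {name}:\n{text}\n\nQuestion: {question}"


print(build_prompt("Can you repair my brakes?", DOCUMENTS))
```

Notice that the prompt is rebuilt for every question, so the model only ever sees the slice of knowledge that matches what the user asked.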

If you're curious, read this guide to RAG to learn more:

The benefit of RAG is that you can access a virtually unlimited amount of data that can be updated in almost real time (because the data storage is decoupled from the AI service). At the same time, RAG can be extremely difficult to get right at scale. Figuring out which document to fetch and which piece of information to retrieve is a non-trivial task that comes with a lot of overhead. Enterprise-scale RAG systems can easily exceed six figures in annual maintenance costs.

Another huge advantage of RAG is that we can actually cite the source where the information came from. This is not possible with information in the prompt. Essentially, RAG gives us some "truth" outside the (non-deterministic) LLM realm to fall back on.
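One common way to make citations possible, shown here as a rough sketch: keep the source name attached to every retrieved snippet and number the snippets in the prompt, so the model can be asked to reference them. The `Chunk` class, the file names, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """A retrieved piece of text plus the document it came from."""
    source: str
    text: str


def format_context(chunks: list[Chunk]) -> str:
    """Number each chunk so the model can refer to it as [1], [2], ..."""
    return "\n".join(
        f"[{i}] {chunk.text} (source: {chunk.source})"
        for i, chunk in enumerate(chunks, start=1)
    )


# Hypothetical retrieval results, for illustration only.
retrieved = [
    Chunk("returns.md", "Unused bikes can be returned within 30 days."),
    Chunk("warranty.md", "Frames carry a two-year warranty."),
]

prompt = (
    "Answer using the numbered context and cite the numbers you used.\n"
    + format_context(retrieved)
)
print(prompt)
```

Because the numbers map back to real documents, you can show the user a link to the original source next to the answer.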

Example:

In the customer support use case, you could take your FAQs out of the instructions and instead make them accessible as a document using RAG. In ChatGPT this is equivalent to uploading a document under "knowledge" to your custom GPT:

If you now ask the chatbot a question, it will get this knowledge from the attached document:

Again, this case is trivial. But imagine having multiple documents that might even contain conflicting information (for example, because one document says the online store is currently down for maintenance), and things can get complicated quickly.

Option 3: Combine Both

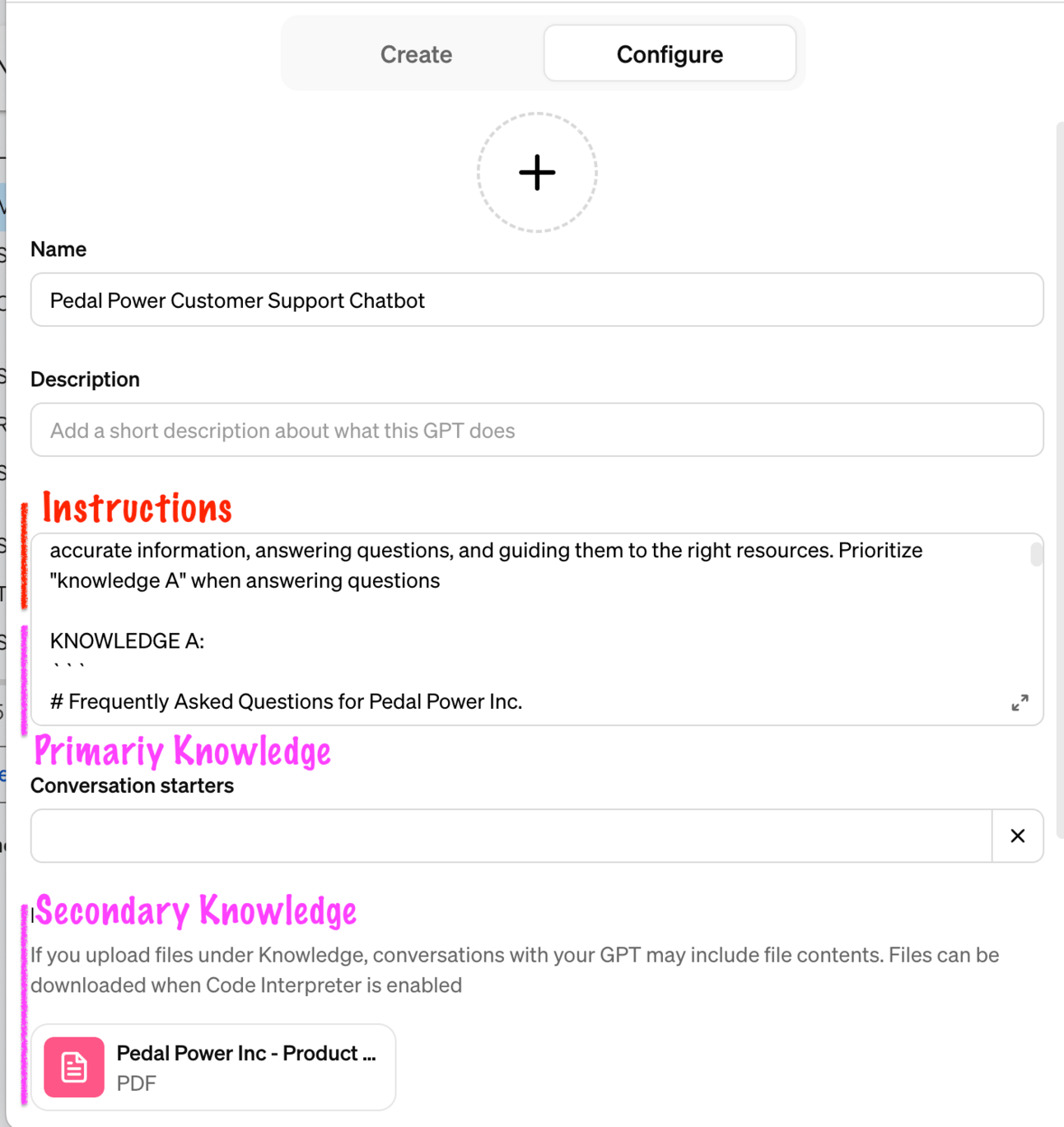

Why even choose? Combining the directness of fixed business knowledge in the instructions with the breadth of RAG provides a versatile AI that can handle both general and niche queries.

The benefit is that we can define the most common questions in the prompt and have them override information from the document sources. This allows us to respond consistently to common customer questions, while still being able to pull in more knowledge as needed.
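As a sketch of how that override could be expressed: put the fixed answers first in the system prompt and state explicitly that they take precedence over anything retrieved. The section labels, the FAQ text, and the `build_system_prompt` helper are assumptions for illustration, and a model will usually, but not always, follow such an instruction.

```python
# Hypothetical fixed answer that should always win.
FIXED_FAQ = "Q: Is the online store available?\nA: Yes, the online store is open 24/7."


def build_system_prompt(fixed_faq: str, retrieved_text: str) -> str:
    """Put fixed answers first and tell the model they take precedence."""
    return (
        "You are a support assistant for a bike store.\n\n"
        "FIXED ANSWERS (always take precedence):\n"
        f"{fixed_faq}\n\n"
        "RETRIEVED CONTEXT (use only if the fixed answers do not cover the question):\n"
        f"{retrieved_text}"
    )


# Hypothetical retrieved snippet that conflicts with the fixed answer.
retrieved = "Notice: the online store is currently down for maintenance."
system_prompt = build_system_prompt(FIXED_FAQ, retrieved)
print(system_prompt)
```

The fixed part stays the same for every conversation, while the retrieved part changes with each question.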

Example

We could keep the most common user questions in the prompt and attach our product catalog as an additional knowledge file so customers can ask about some product specs as needed:

As a result, the chatbot could answer questions from both knowledge sources, but we would be able to better control the response based on the knowledge from the instructions:

Further thoughts

Two more things to consider:

Fine-tuning

If you've heard of model fine-tuning and are wondering how it fits into the picture, here's a quick primer: fine-tuning is not really for ingesting knowledge, but rather for controlling model behavior (for example, if you want an LLM to generate only SQL code, fine-tuning is a great strategy). But it doesn't make sense to fine-tune the model every time your business knowledge gets updated.

Security & Privacy

Sharing your business-critical data with an external AI service is obviously something you should handle with care. The good news is that there are different levels of security to mitigate this risk, so keeping your data safe while using AI is entirely possible.

Some key points to remember:

OpenAI does not train on data sent to their APIs.

OpenAI does not train on ChatGPT conversations in the Enterprise and Team plans.

You can use Azure, which has even stronger data policies.

You can host your own LLM locally and still apply all of the above.

Conclusion

Tailoring AI to your unique business needs doesn't require a huge investment in data, dollars, or development teams.

With prompting and RAG, you can tailor AI interactions to improve customer support, streamline operations, and leverage your existing knowledge base.

Whether you choose prompting, RAG, or a combination of the two, the path to personalized AI is easier and more accessible than you might think.

Start small, experiment, and watch as AI becomes a more integral and customized tool for your business.

If you need support, reply "Help" and I'll get in touch!

See you next Friday!

Tobias

PS: If you found this newsletter useful, please leave a testimonial! It means a lot to me!