After shipping dozens of customer support chatbots to production, with the best hitting an 82% automated resolution rate, I thought it was time for a quick reality check.

AI chatbots for customer support are among the lowest-hanging fruit you can grab with Generative AI. But they can also quickly turn into a money pit, just moving resources from your customer support team to your IT department without contributing anything significant to the bottom line. Worst case I've seen: worse customer experience AND higher cost.

To avoid that situation, it's important to understand the criteria for deciding when an AI customer support chatbot really is low-hanging fruit (and when it's just a foul pea you wouldn't touch).

Let’s dive in!

The Reality about AI Customer Support Chatbots

Google "biggest Generative AI case study" and you'll quickly come across Klarna.

They made big waves in February 2024 when they announced that their AI customer support chatbot was doing the equivalent work of 700 full-time agents – supposedly handling two-thirds of all customer service chats in its first month. This came at a time when everyone was looking for case studies with actual tangible results from Generative AI.

This was it. Except it wasn't.

About a year later, Klarna's CEO admitted the company had gone too far. The AI was cheaper, but also delivered lower quality. Customers complained about generic responses and by mid-2025, Klarna was rehiring humans, with the CEO conceding: "We focused too much on efficiency and cost."

What can we learn from that? The route to autonomous customer support might be bumpier than it first looks. Even with the best AI in the world and a ton of engineering budget, going all-in without understanding the tradeoffs can backfire.

So what do customer support chatbots actually look like in the real world?

First – forget the "starting at $49/month" pricing you see on SaaS landing pages. That gets you a widget on your website. It doesn't get you a system that actually resolves customer issues.

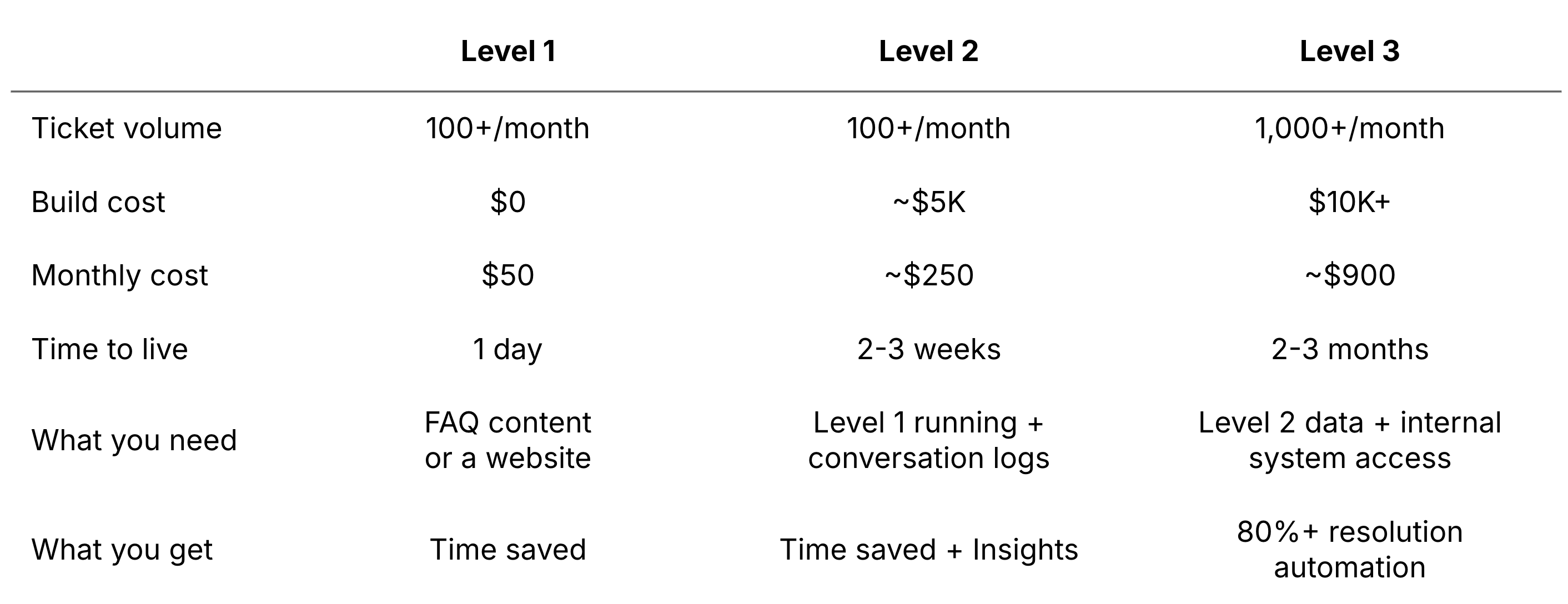

I’ve experienced three different levels of capability when it comes to AI customer support chatbots. Here's a brief overview of what you get at each:

Level 1: A Basic Q&A Chatbot

This is the kind of chatbot that answers common questions from a more or less structured knowledge base – it can be your website, a single FAQ page, or some internal documents. A chatbot like this can be set up in a day with any chatbot platform of your choice. The tool cost is near zero or a few hundred dollars per year.

But "set up in a day" assumes you already have your answers documented somewhere. If you don't, that's your first job, and this typically takes longer than the chatbot itself.

Level 2: A Chatbot With Insights

At this level, the chatbot not only answers common questions but also categorizes incoming requests, logs what people ask, and helps you understand your actual support patterns. Systems like this typically run at roughly $200-300 per month including infrastructure.

In my experience, this is where the fun (meaning: ROI) starts. Because this layer reveals whether a bigger investment in AI customer support is actually worth it. Without the data from Level 2, you're guessing about what to automate next. With it, you know exactly which question types drive volume and whether they require simple answers or system access to resolve.

That makes Level 2 more of a decision layer, instead of a pure capability upgrade.
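To make the "decision layer" idea concrete, here is a minimal sketch of what you could do with a Level 2 log. The log format (a `category` label plus a `needs_system_access` flag per request) and the sample entries are assumptions for illustration, not the output of any particular chatbot platform.

```python
from collections import Counter

# Hypothetical log entries; a real system would load these from its own log store.
logged_requests = (
    [{"category": "order_status", "needs_system_access": True}] * 3
    + [{"category": "opening_hours", "needs_system_access": False}] * 2
    + [{"category": "password_reset", "needs_system_access": True}]
)

def summarize_requests(requests):
    """Rank categories by volume and show how often each needs system access."""
    volume = Counter(r["category"] for r in requests)
    needs_access = Counter(
        r["category"] for r in requests if r["needs_system_access"]
    )
    total = len(requests)
    return [
        {
            "category": category,
            "count": count,
            "share": count / total,  # share of all logged requests
            "needs_system_access_share": needs_access[category] / count,
        }
        for category, count in volume.most_common()
    ]

summary = summarize_requests(logged_requests)
for row in summary:
    print(row)
```

Categories with high volume and a low system-access share are candidates for a plain Q&A answer. High volume plus a high system-access share is where an integration might eventually pay off.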

Level 3: A Full Agentic System

This is the end goal, because this level moves from informing to acting. Imagine AI support workflows that route questions to the right people, pull data from internal systems, and actually take actions on behalf of customers – like tracking order status, resetting passwords, or processing shipping requests. Systems like this easily start at $10K+ to build and $1K+ per month to run. These figures are lower bounds, and the costs are mainly driven by manual system integration and monitoring overhead. Just because these systems can run autonomously doesn't mean no one is watching them.

Level 3 is where 80%+ actual support resolution rates become feasible. It's also the level where companies usually start, and that's the mistake.

AI has inverse economics. Your costs grow after launch, not before. And for a Level 3 system, you need clear ownership and responsibilities. Who maintains the knowledge base? Who reviews what the chatbot gets wrong? Who updates it when your products, services, or policies change? If that person doesn't exist in your organization, the chatbot degrades within months, perhaps weeks – and you're paying for a system that embarrasses you a little bit more every day.

1st Question: How Many Tickets Do You Handle?

A lot of the clients I've worked with that wanted a customer support chatbot didn't even know how many support tickets they were handling on average per year.

This has to be fixed first. You need a "problem size" baseline, no matter how rough it might be.

Only once you know this baseline can you run the math.

Example:

One department alone handles 15,000 support emails per year

People in that department earn $60/h on average

One ticket resolution takes an average of 5 minutes

That translates into about $5 per ticket and $75K in total support cost per year (rough cut). About half a full-time employee spread across a few people who all spend part of their day answering the same questions.
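The same back-of-the-envelope math as a small sketch you can rerun with your own numbers. The function and field names are made up for illustration; the inputs are the example figures above.

```python
def support_cost_baseline(tickets_per_year, hourly_rate, minutes_per_ticket):
    """Rough annual support cost from ticket volume, wage, and handling time."""
    cost_per_ticket = hourly_rate * minutes_per_ticket / 60
    hours_per_year = tickets_per_year * minutes_per_ticket / 60
    return {
        "cost_per_ticket": cost_per_ticket,
        "hours_per_year": hours_per_year,
        "annual_cost": tickets_per_year * cost_per_ticket,
    }

# Example from the text: 15,000 emails per year, $60/h, 5 minutes per ticket.
baseline = support_cost_baseline(15_000, 60.0, 5)
print(baseline)
# {'cost_per_ticket': 5.0, 'hours_per_year': 1250.0, 'annual_cost': 75000.0}
```

The 1,250 hours per year is what makes the "about half a full-time employee" estimate plausible.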

You can now sanity-check this number. Does it match what you're seeing on the ground? If so, that can end up being a clear business case – apply the 10K Rule.

Problem Baseline vs. 10K Gain

But not every business has this math.

Here's the rough framework:

Under 100 repetitive support requests per month: The economics usually don't work – even for the cheap SaaS version. You'll spend more time maintaining the system than you save. A good FAQ page and a responsive team member will outperform any AI at this volume.

100 to 500 per month: This is where a basic Q&A chatbot usually makes sense – the condition being that the questions are repetitive enough and the answers already exist somewhere. At this level, you're looking at a low-cost setup that takes pressure off your team. Important not to over-invest. Start simple, see what happens.

500+ per month: The business case is almost certainly there. At this volume, the question shifts from "should we build one" to "how fast can we get this live". And more importantly, how far can we take the automation.
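As a sketch, the three tiers above boil down to a simple lookup. The function name and return labels are hypothetical, and since the text leaves the exact boundary open, treating exactly 500 requests as the top tier is an assumption.

```python
def recommend_setup(repetitive_requests_per_month):
    """Map monthly repetitive support volume to the rough tiers above."""
    if repetitive_requests_per_month < 100:
        # Economics usually don't work: FAQ page plus a responsive team member.
        return "faq_page_and_human"
    if repetitive_requests_per_month < 500:
        # Low-cost setup, assuming the answers are already documented.
        return "basic_qa_chatbot"
    # Assumption: exactly 500 counts as "500+".
    return "build_and_expand_automation"

for volume in (40, 250, 1_200):
    print(volume, recommend_setup(volume))
```

Treat the output as a starting point for the conversation, not a verdict. The thresholds are rules of thumb, and they only apply to requests that are actually repetitive.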

If you don't even know how many support requests you handle per month, that's your first action item: track it for 30 days or review your support@ inbox. You can't make a business decision without a number.

Getting to 80%+ Resolution

Let's look at what separates a chatbot that answers questions from one that actually resolves them:

The system has to evolve in stages. The 82% resolution rate I mentioned didn't happen on day one. It started as a simple Q&A chatbot, which we then extended with a logging layer to understand what people were actually asking and which questions the chatbot couldn't handle. That data told us which areas to automate next. Only then did we build the agentic system with custom integrations that could take action, not just respond.

Knowledge base quality matters more than model quality. The chatbot is only as good as what it knows. Two hundred well-structured source documents beat two thousand dumped PDFs. This is unglamorous but necessary work.

There have to be top question clusters (and you need to know them). If every question coming in requires high touch and deep personalization, even the best AI support system won't cut it. Or it will be very expensive to build (you might as well just hire another human). But if your incoming cases revolve around 5-10 core areas, that's where targeted automation becomes feasible at a manageable build cost.

People around the chatbot matter as much as the chatbot itself. If the support staff reviewing chatbot outputs feel threatened by the system, they'll find reasons it "doesn't work." I've seen really good chatbots that were killed because they lacked buy-in from the people who were supposed to oversee them. This is not a training problem but an alignment problem. So before you build, make sure the people whose workflow changes are part of the decision.

And finally: 80% is the right target. Chasing 95% accuracy means you never ship. 80% resolution with a smooth human handoff for the remaining 20% is where the ROI lives. That remaining 20% is often the complex, judgment-heavy stuff that humans should handle anyway. Ship at 80%, let humans catch the exceptions, and you're already profitable.
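To see roughly where that ROI comes from, here is a deliberately simplified first-year sketch. It assumes every automatically resolved ticket saves its full handling cost, and it leaves out the human time for oversight and knowledge base maintenance discussed earlier, so read the result as an upper bound. The function name is hypothetical; the inputs reuse the $75K baseline example and the Level 3 lower-bound costs ($10K build, $1K per month).

```python
def first_year_net_savings(annual_support_cost, resolution_rate,
                           build_cost, monthly_run_cost):
    """Gross savings from automated resolutions minus build and run costs."""
    gross_savings = annual_support_cost * resolution_rate
    first_year_cost = build_cost + 12 * monthly_run_cost
    return gross_savings - first_year_cost

net_at_80 = first_year_net_savings(75_000, 0.80, 10_000, 1_000)
net_at_30 = first_year_net_savings(75_000, 0.30, 10_000, 1_000)
print(round(net_at_80), round(net_at_30))
```

With these inputs, 80% resolution clears the system's cost with room to spare, while 30% roughly breaks even. Since real Level 3 costs are often above the lower bounds used here, the margin can shrink quickly.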

The Bottom Line

Chatbot projects often fail because they try to start at the end. The spec describes a complex, integrated system before anyone has any idea what questions are coming in or whether the volume justifies the build. Then six months and $50K later, you have an over-engineered solution to a problem that was never properly defined.

Don't be that project. Know your numbers, and start at Level 1. Let the data tell you when (and if) to move up.

And if the math doesn't work – don't build one just because your competitors have one. That’s not profitable AI. That’s just AI FOMO.

See you next Saturday,

Tobias