Read time: 6 minutes

Hey there,

Today you'll learn how to avoid common machine learning pitfalls to increase the chances that your next ML project will be a success.

Machine learning can be a highly effective tool to solve many modern business problems. However, many people don't know how to approach ML correctly and make the same beginner's mistakes over and over again.

As a result, they waste time, money, and effort on projects that don't produce the desired results.

Why most machine learning projects fail

Here are five reasons why so many machine learning projects fail:

Lack of clear goals

Wrong expectations

Poor data quality

Inability to deploy models

Poor user acceptance

While I can't help you overcome all of these challenges within a single newsletter, I'd like to briefly discuss the most common mistakes beginners make so you can avoid them.

Here are the 7 machine learning pitfalls I see beginners fall into most often:

Pitfall 1: Using Machine Learning when it's not necessary

For beginners, this is the most important idea to understand.

Once you learn how to use a hammer, everything suddenly looks like a nail.

So the worst thing you can do is take your newfound machine learning skills and look for business problems that seem like a good fit.

Instead, do it the other way around:

Identify relevant business problems first, and only then consider whether machine learning could help you solve them.

And even if you've identified a relevant business problem that seems like a good fit for machine learning, don't jump right into training your first model.

In any case, start with a simple (non-ML) baseline.

Why?

This baseline gives you a performance benchmark that the machine learning algorithm must exceed.

For example: If you have never done churn analysis before, you should not start with an ML-based approach, but rather use simple heuristics or hard-coded rules such as:

predict that all A-customers will stay and that all C-customers who joined last month will churn

use last week's mean for a time series forecasting problem

etc.
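Here is a minimal Python sketch of the two example rules. It is illustrative only: the `Customer` fields, the reading of "joined last month" as `months_since_signup <= 1`, the choice that everyone else is predicted to stay, and the use of daily data (so "last week" means the last 7 values) are assumptions made for this example, and the numbers are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Customer:
    # Hypothetical fields, for illustration only.
    segment: str              # customer tier: "A", "B" or "C"
    months_since_signup: int


def churn_baseline(customer: Customer) -> bool:
    """Hard-coded churn rule; returns True if the customer is predicted to churn."""
    if customer.segment == "A":
        return False  # all A-customers are predicted to stay
    if customer.segment == "C" and customer.months_since_signup <= 1:
        return True   # C-customers who joined last month are predicted to churn
    return False      # assumption: everyone else is predicted to stay


def last_week_mean_forecast(history: list[float], horizon: int) -> list[float]:
    """Naive forecast: repeat the mean of the last 7 daily values for each future step."""
    if len(history) < 7:
        raise ValueError("need at least one week (7 values) of daily history")
    level = mean(history[-7:])
    return [level] * horizon


customers = [Customer("A", 1), Customer("C", 1), Customer("C", 12), Customer("B", 1)]
print([churn_baseline(c) for c in customers])  # [False, True, False, False]

daily_sales = [10, 12, 11, 13, 9, 14, 12, 15, 11, 10]  # made-up daily values
print(last_week_mean_forecast(daily_sales, horizon=3))
```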

These rules have very low complexity and are easy for business stakeholders to understand. They also force you to think about a key question:

What are you going to do with these predictions?

Once you have clarity about your baseline and the desired outcome, you can experiment with ML models and see if they perform significantly better than your baseline solution.
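One way to make "significantly better" concrete is to agree on a minimum improvement before you look at the results. The sketch below compares the mean absolute error (MAE) of a baseline and an ML model on the same held-out data. The 10% margin, the function names, and all numbers are hypothetical, and this is a practical threshold rather than a statistical significance test.

```python
def mae(actual: list[float], predicted: list[float]) -> float:
    """Mean absolute error between two equally long sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def beats_baseline(actual, baseline_pred, model_pred, min_improvement=0.10) -> bool:
    """True if the model's MAE is at least `min_improvement` (relative) below the baseline's."""
    baseline_err = mae(actual, baseline_pred)
    model_err = mae(actual, model_pred)
    return model_err <= (1 - min_improvement) * baseline_err


# Hypothetical held-out data: actual values, baseline forecast, and ML model forecast.
actual = [12, 14, 11, 13]
baseline_pred = [12, 12, 12, 12]
model_pred = [12.5, 13.5, 11.5, 12.5]

print(mae(actual, baseline_pred), mae(actual, model_pred))  # 1.0 0.5
print(beats_baseline(actual, baseline_pred, model_pred))    # True
```

If the model only matches the baseline, the extra complexity of running it in production is hard to justify.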

Pitfall 2: Being too greedy

Don't try to squeeze every last bit of accuracy out of your machine learning model at the beginning. A model tuned too aggressively to your training data will likely perform poorly on new data.

Instead, be more conservative when training, especially on smaller data sets: accept 'poorer' accuracy, get your model into production, and learn and improve as you collect new data.

This idea follows the principle of Occam's Razor: a simple solution is often better than a complex one.
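A simple way to apply Occam's Razor during model selection is to pick the least complex model whose validation error is within a small tolerance of the best one. This is a sketch under assumptions: the 5% tolerance, the `complexity` score (for example, a parameter count), and the candidate results are hypothetical.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    complexity: int          # e.g. number of parameters (your own choice of measure)
    validation_error: float  # measured on data the model was NOT trained on


def pick_simplest(candidates: list[Candidate], tolerance: float = 0.05) -> Candidate:
    """Return the least complex candidate whose validation error is within
    `tolerance` (relative) of the best validation error."""
    best_error = min(c.validation_error for c in candidates)
    good_enough = [c for c in candidates if c.validation_error <= best_error * (1 + tolerance)]
    return min(good_enough, key=lambda c: c.complexity)


candidates = [
    Candidate("linear regression", complexity=5, validation_error=0.208),
    Candidate("gradient boosting", complexity=500, validation_error=0.205),
    Candidate("neural network", complexity=50_000, validation_error=0.200),
]
print(pick_simplest(candidates).name)  # linear regression
```

The neural network wins on raw error, but the linear model is within tolerance and far easier to maintain and debug.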

Pitfall 3: Building overly-complex models

This goes hand in hand with the previous point: to achieve high accuracy, inexperienced people tend to create overly complex models, such as neural networks.

Complex models have two disadvantages:

They are more difficult to maintain and debug in production. If something goes wrong or your model predictions are off (which will happen), highly complex models are harder to fix than simpler models.

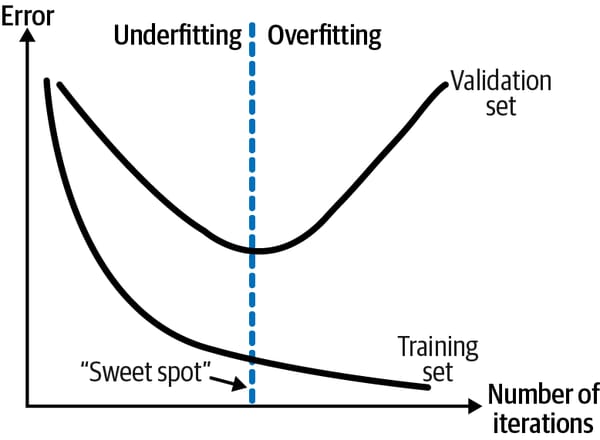

Complex models can more easily lead to a phenomenon called overfitting. Overfitting occurs when your model performs very well on your training data but poorly on new data. Predictive analysis is all about finding a sweet spot (see figure below) where the prediction errors in training (historical data) and testing (new data) are approximately equal. To avoid overfitting, you should always monitor the performance of your model on new data as well as the complexity of your model.
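The sweet spot is easy to see in a small experiment. The sketch below swaps in 1-D k-nearest-neighbors regression on synthetic data (a noisy sine curve) as a stand-in model: a smaller `k` means a more flexible, more complex model, and `k=1` simply memorizes the training set. The data, seed, and `k` values are arbitrary assumptions.

```python
import math
import random


def knn_predict(train: list[tuple[float, float]], x: float, k: int) -> float:
    """Predict y at x as the mean y of the k nearest training points (1-D k-NN regression)."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return sum(y for _, y in nearest) / k


def mse(model_data, eval_data, k: int) -> float:
    """Mean squared error of k-NN built on model_data, evaluated on eval_data."""
    return sum((y - knn_predict(model_data, x, k)) ** 2 for x, y in eval_data) / len(eval_data)


rng = random.Random(42)  # fixed seed so the run is reproducible


def sample(n: int) -> list[tuple[float, float]]:
    """Synthetic data: y = sin(x) plus Gaussian noise (a made-up example)."""
    points = []
    for _ in range(n):
        x = rng.uniform(0, 2 * math.pi)
        points.append((x, math.sin(x) + rng.gauss(0, 0.3)))
    return points


train, validation = sample(100), sample(100)

# Smaller k = more flexible (more complex) model; k=1 memorizes the training set.
for k in (1, 5, 15, 50):
    print(f"k={k:>2}  train MSE={mse(train, train, k):.3f}  validation MSE={mse(train, validation, k):.3f}")
```

With `k=1` the training error is exactly zero (each training point is its own nearest neighbor), while the validation error is not. The sweet spot is the `k` with the lowest validation error, not the lowest training error.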

Pitfall 4: Not Stopping When You Have Enough Data

Make no mistake: Getting clean, labeled data ready for machine learning is an expensive process. It takes time, effort, and sometimes hard cash in the case of external data labeling or data acquisition.

Our machine learning intuition tells us that the more data (observations) we have, the better.

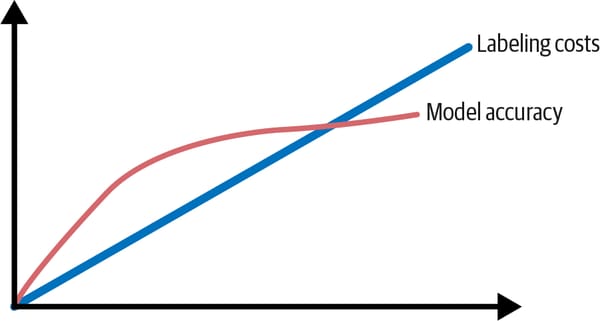

However, experience shows that ML algorithms reach a plateau at some point, where additional training examples do not significantly increase accuracy.

This effect is shown here:

When you reach this point, it is simply not cost-effective to spend more money on data labeling.

For this reason, it is important to have a clear goal in mind.

How accurate does your model need to be for it to be useful?

The threshold can range from "anything better than baseline" to "99.99% accuracy required" in high-risk applications.

Knowing your goal and what you want to achieve will help you avoid high costs.
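Both ideas, the plateau and the accuracy goal, can be turned into a simple stopping rule for data labeling. The sketch below assumes you have measured a validation score at several training-set sizes (a learning curve); the curve, the 0.02 minimum gain, the targets, and the `labeling_decision` helper are hypothetical.

```python
from typing import Optional


def labeling_decision(sizes: list[int], scores: list[float], target: float,
                      min_gain: float) -> tuple[Optional[int], str]:
    """Walk a learning curve (validation score at increasing training-set sizes) and
    return the first size at which buying more labels stops paying off, plus the reason."""
    for i, (size, score) in enumerate(zip(sizes, scores)):
        if score >= target:
            return size, "target reached"  # good enough for the use case
        if i > 0 and score - scores[i - 1] < min_gain:
            return size, "plateau"  # the last batch of labels barely helped
    return None, "keep collecting"  # neither condition met yet


# Hypothetical learning curve: validation accuracy after training on n labeled examples.
sizes = [500, 1_000, 2_000, 4_000, 8_000]
scores = [0.70, 0.78, 0.82, 0.83, 0.832]

print(labeling_decision(sizes, scores, target=0.90, min_gain=0.02))  # (4000, 'plateau')
print(labeling_decision(sizes, scores, target=0.80, min_gain=0.02))  # (2000, 'target reached')
```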

Pitfall 5: Falling for the Curse of Dimensionality

While "the more data, the better" works to some extent for observations (rows), it can be counterproductive for features (columns).

Consider the following example:

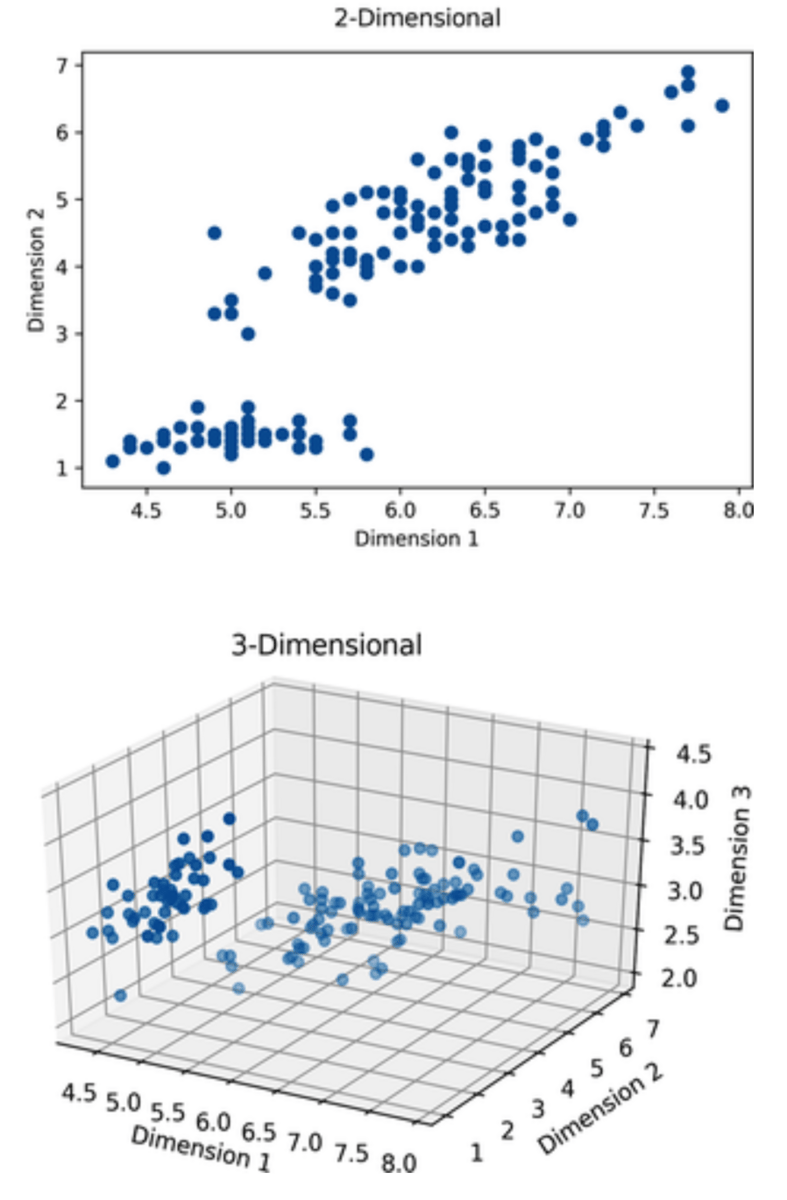

You want to predict US house prices based on the variables size, bedrooms, and location (zip code).

Size and bedrooms can be modeled as two separate features (columns), increasing the dimensionality of your dataset by only one dimension, as shown in the figure below:

However, once you start using zip codes, most ML algorithms require that you encode this categorical variable into as many features (columns) as there are unique categories.

In the case of zip codes, this would increase the dimensionality of the data not by one dimension, but by roughly 41,692 (one column with values of 0 or 1 for each possible US zip code).

Given this high dimensionality, most ML algorithms will struggle to find actual patterns in the data because the data is too sparse.
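A toy version of the house-price example shows how quickly one-hot encoding adds columns. The listings and zip codes below are made up, and the hand-rolled `one_hot` helper is for illustration; libraries such as pandas or scikit-learn offer equivalent encoders.

```python
def one_hot(values: list[str]) -> tuple[list[str], list[list[int]]]:
    """Encode a categorical column as one 0/1 column per unique category."""
    categories = sorted(set(values))
    index = {cat: i for i, cat in enumerate(categories)}
    rows = []
    for v in values:
        row = [0] * len(categories)
        row[index[v]] = 1  # exactly one 1 per row
        rows.append(row)
    return categories, rows


# Hypothetical listings: (size in sq ft, bedrooms, zip code).
listings = [(1400, 3, "10001"), (2100, 4, "94105"), (900, 2, "60601"),
            (1750, 3, "73301"), (1200, 2, "98101")]

zip_columns, zip_rows = one_hot([z for _, _, z in listings])
n_features = 2 + len(zip_columns)  # size + bedrooms + one column per zip code
nonzero_share = sum(map(sum, zip_rows)) / (len(zip_rows) * len(zip_columns))
print(n_features, nonzero_share)  # 7 0.2
```

With five listings and five distinct zip codes, the zip-code part of the data is already 80% zeros. With all 41,692 zip codes, every row would contain 41,691 zeros and a single 1 in those columns, so well over 99.99% of the encoded values would be zero.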

Therefore, be careful when adding new variables (features) to your data set, especially when they are categorical.

Don't hesitate to remove redundant or irrelevant variables.

Pitfall 6: Ignoring Outliers

Outliers are data points that lie far away from the rest of your data set, for example far above or far below the average.

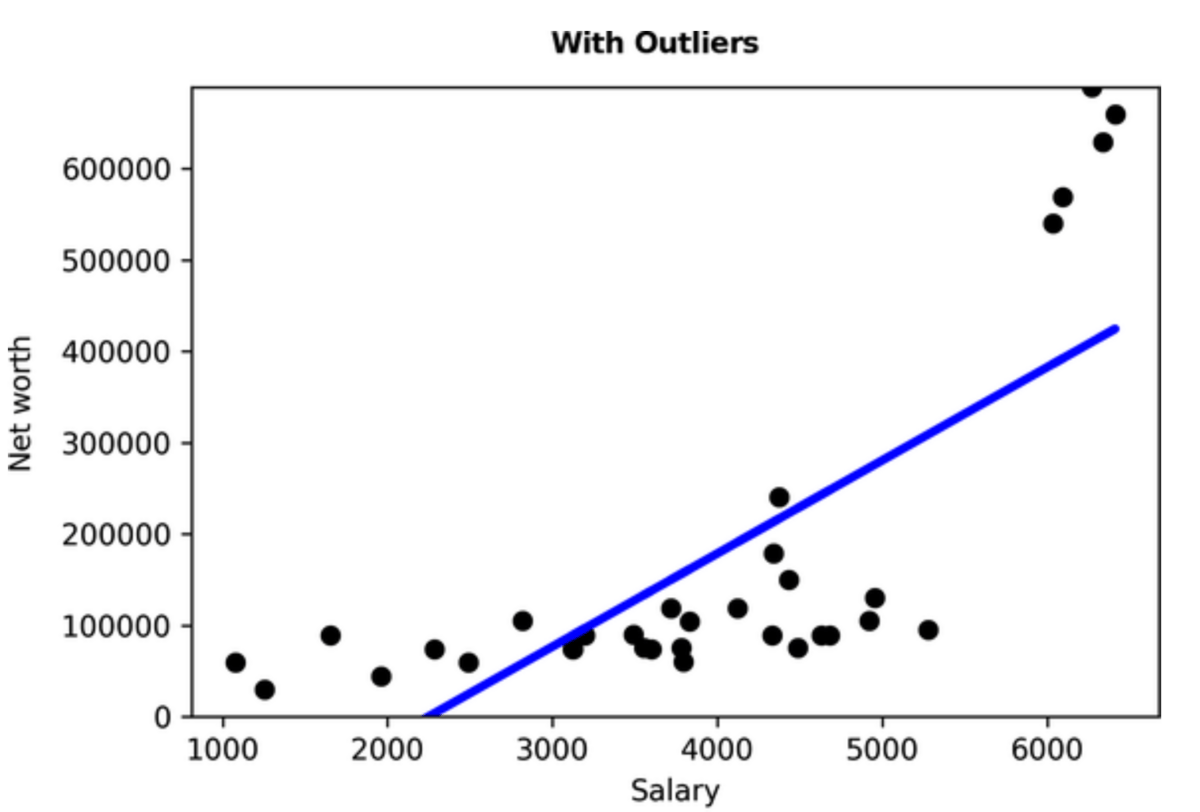

For example, consider a data set that contains people's salaries and net worth.

When you plot the data, it might look like this:

You can see that a few data points are very far away from all others (people with exceptionally high net worth).

The regression line (blue) in this case is skewed toward these outliers, resulting in a poor fit for all other data points.

Once we remove this group of high-net-worth individuals, our regression model fits the remaining points much better.

The impact of outliers can be huge for many simpler algorithms such as linear regression.

So pay attention to detecting outliers in your dataset!

Pitfall 7: Taking Cloud Infrastructure for Granted

In my blog posts, I typically make the bold assumption that you have access to cloud computing for both model training and model inference.

But let's face it: Many organizations are not there yet.

I suggest experimenting with cloud computing, such as AIaaS or ML platforms, for prototyping with non-critical data, even if it is not yet standard practice in your organization.

This can give you a quick head start and expose you to modern ML workflows and tools to understand what is possible. It will also allow you to discuss with your team how cloud computing could benefit your overall AI/ML strategy.

Keep in mind, however, that migrating workflows from on-premises systems to the cloud can quickly turn into a larger discussion than you initially anticipated.

That’s it!

Remember, machine learning is a powerful tool that can help you solve many modern problems, but it's important to use it correctly.

Start with the problem, keep it simple in the beginning, and pay attention to the data you have. This will greatly increase the chances of making your next ML project a success.

As always, I welcome your feedback and comments!

Feel free to reach out anytime!

See you next Friday,

Tobias

Want to learn more? Here are 3 ways I could help:

Read my book: If you want to further improve your AI/ML skills and apply them to real-world use cases, check out my book AI-Powered Business Intelligence (O'Reilly). You can read it in full detail here: https://www.aipoweredbi.com

Book a meeting: If you want to pick my brain, book a coffee chat with me so we can discuss more details.